David Cox

@neurobongo

VP, AI Foundations @IBMResearch, IBM Director, @MITIBMLab. Former prof @Harvard, and serial/parallel entrepreneur.

Red Hat CEO @MattHicksJ says open sourcing model weights is just the start: "Openness should begin with model weights, but be further supported by an open ecosystem of tools and platforms that prevent vendor lock-in." Read his argument for genuine open source AI via @Forbes: bit.ly/4pvTCjF

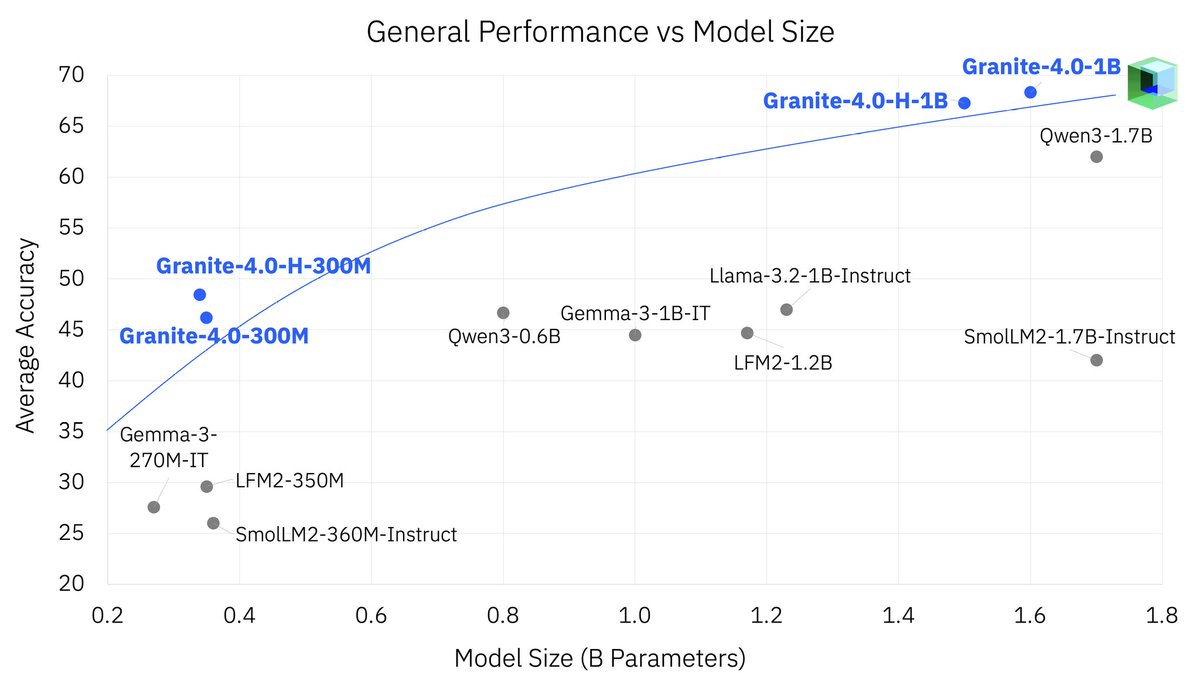

IBM AI Team Releases Granite 4.0 Nano Series: Compact and Open-Source Small Models Built for AI at the Edge

Small models are often held back by poor instruction tuning, weak tool-use formats, and missing governance. The IBM AI team released Granite 4.0 Nano, a small model family that targets local and edge inference with enterprise controls and open licensing. The family includes eight models in two sizes (350M and roughly 1B parameters), each offered as a hybrid SSM or a transformer variant and in base and instruct versions. Granite 4.0 Nano models are released under the Apache 2.0 license, with native architecture support on popular runtimes such as vLLM, llama.cpp, and MLX. ...

Full analysis: marktechpost.com/2025/10/29/ibm…

Model weights: huggingface.co/collections/ib…

@IBM @IBMwatsonx @IBMResearch @IBMData @IBMcloud @IBMDeveloper @IBMNews
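The count of eight models follows from crossing the three dimensions the post describes: two sizes, two architectures, and two tuning variants. Below is a minimal Python sketch of that enumeration, assuming (as the post implies) that every size ships in both architectures and both variants. The string labels are descriptive placeholders chosen for illustration, not official Hugging Face model IDs.

```python
from itertools import product

# Dimensions of the Granite 4.0 Nano family as described in the post.
# These labels are illustrative placeholders, NOT official model IDs.
SIZES = ["350M", "~1B"]
ARCHITECTURES = ["hybrid SSM", "transformer"]
VARIANTS = ["base", "instruct"]


def nano_family():
    """Return every (size, architecture, variant) combination as a dict."""
    return [
        {"size": size, "architecture": arch, "variant": variant}
        for size, arch, variant in product(SIZES, ARCHITECTURES, VARIANTS)
    ]


if __name__ == "__main__":
    family = nano_family()
    for m in family:
        print(f"{m['size']:>5}  {m['architecture']:<11}  {m['variant']}")
    # 2 sizes x 2 architectures x 2 variants = 8 models
    print(f"total: {len(family)} models")
```

Using `itertools.product` keeps the sketch honest about the assumption: if a size were missing one architecture, the real family would be smaller than the full cross product.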

Introducing Granite 4.0 Nano, compact and open-source models built for AI at the edge. Available in 350M and 1B sizes for building AI on laptops and mobile devices: ibm.co/6013Bzpt7