Marktechpost AI

@Marktechpost

🐝 AI Dev News Platform (1 million+ monthly traffic) | 150k+ AI subreddit | Contact: [email protected]

Most LLM pre-training efficiency work changes the tokenizer, the architecture, or the inference behavior. Nous Research just showed you don't have to touch any of them.

They released Token Superposition Training (TST), a two-phase modification to the standard pre-training loop. Phase 1 averages s contiguous token embeddings into a single latent s-token and trains with a multi-hot cross-entropy loss against the next bag of tokens. Phase 2 reverts to standard next-token prediction from the same checkpoint, with the TST code fully removed.

Here's what's actually interesting:

→ Each TST step is kept equal-FLOPs to baseline by increasing the data sequence length by s×, not the batch size
→ 3B dense: loss 2.676 in 247 B200-hrs vs 443 B200-hrs for baseline at matched loss (~1.8x faster)
→ 10B-A1B MoE: 4,768 B200-hrs vs 12,311 B200-hrs at matched loss (~2.5x faster)
→ Optimal range: bag size s ∈ [3–8] at 270M, s ∈ [6–10] at 600M, s = 16 at 10B; step ratio r ∈ [0.2, 0.4]
→ Re-initializing the embedding or LM head at the phase boundary breaks it entirely: loss went from 2.676 to 2.938, worse than the 2.808 baseline

Full analysis: marktechpost.com/2026/05/13/nou…
Paper: arxiv.org/pdf/2605.06546
Project page: nousresearch.com/token-superpos…
@NousResearch

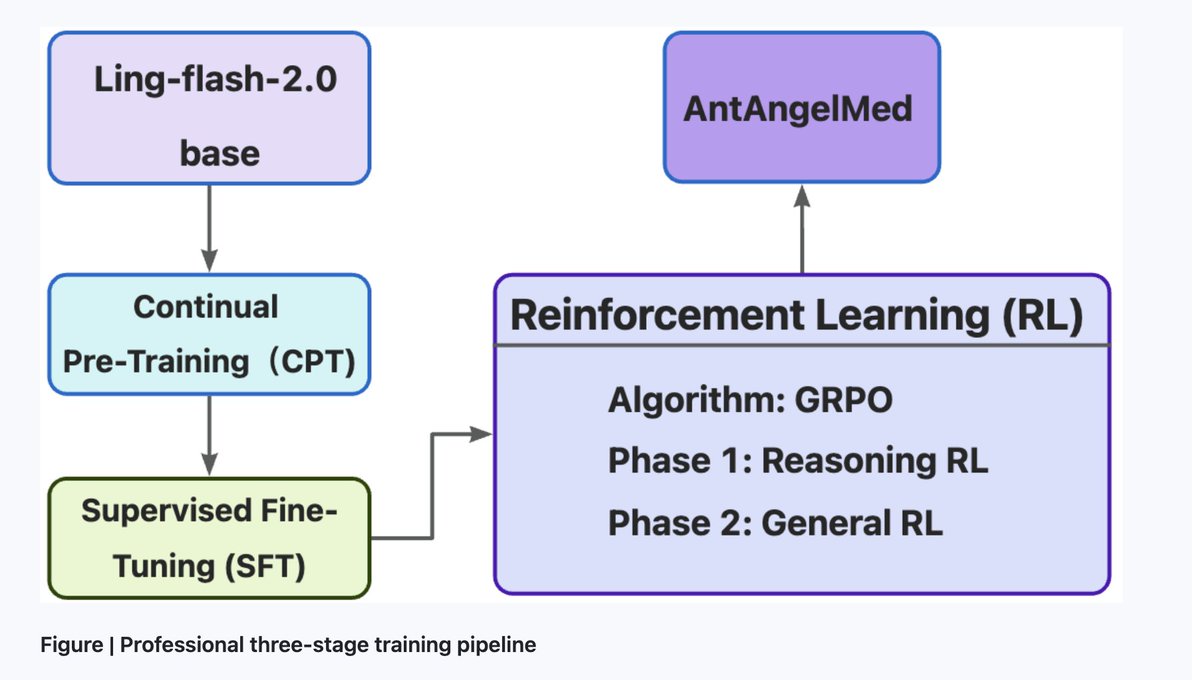
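
To make the Phase 1 description in the TST post more concrete, here is a minimal pure-Python sketch of the two ingredients it names: averaging s contiguous token embeddings into one latent, and a multi-hot cross-entropy against the next bag of tokens. The function names are hypothetical, and the loss normalization (mean negative log-likelihood over the bag) is an assumption; the post does not spell out the exact form, so the paper is the authority here.

```python
import math


def superpose(embeddings, s):
    """Average each run of s contiguous token embeddings into one latent vector."""
    if len(embeddings) % s != 0:
        raise ValueError("sequence length must be a multiple of s")
    dim = len(embeddings[0])
    latents = []
    for start in range(0, len(embeddings), s):
        group = embeddings[start:start + s]
        latents.append([sum(vec[d] for vec in group) / s for d in range(dim)])
    return latents


def log_softmax(logits):
    """Numerically stable log-softmax over a list of logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def multi_hot_cross_entropy(logits, bag):
    """Mean negative log-likelihood of every token id in the next bag.

    ASSUMPTION: the target is treated as uniform over the bag's tokens.
    """
    log_probs = log_softmax(logits)
    return -sum(log_probs[token_id] for token_id in bag) / len(bag)
```

With s = 2, four token embeddings collapse into two latents, which is why the post says the raw data sequence length grows by s× to keep each step at equal FLOPs: the model still sees the same number of positions per step.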

A 103B medical LLM just got open sourced, and it only activates 6.1B parameters at inference time.

Meet AntAngelMed. Here's what's actually interesting:

1. The architecture
It uses a 1/32 activation-ratio MoE built on Ling-flash-2.0. You get 103B total parameters' worth of knowledge capacity, but inference cost stays proportional to the 6.1B active parameters, with performance roughly matching a 40B dense model.

2. The training pipeline
Three stages:
→ Continual pre-training on medical corpora (encyclopedias, web text, academic publications)
→ SFT with mixed general + clinical instruction data
→ GRPO-based reinforcement learning with task-specific reward models for safety, diagnostic reasoning, and hallucination reduction

3. Inference numbers
→ 200+ tokens/s on H20 hardware
→ ~3× faster than a 36B dense model
→ 128K context length via YaRN extrapolation
→ FP8 + EAGLE3 boosts throughput over FP8 alone: +71% on HumanEval, +45% on GSM8K, +94% on Math-500

4. Benchmark results
→ #1 open-source on OpenAI's HealthBench, also surpassing several proprietary models
→ Top-level on MedAIBench (China's national medical AI benchmark)
→ #1 overall on MedBench across all 5 dimensions: knowledge QA, language understanding, language generation, complex reasoning, and safety & ethics

Full analysis: marktechpost.com/2026/05/12/mee…
Model weights on HF: huggingface.co/MedAIBase/AntA…
GitHub Repo: github.com/MedAIBase/AntA…
Technical details: modelscope.cn/models/MedAIBa…
@AntGroup #OpenSource #llm #medicalai
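
For readers unfamiliar with the GRPO stage mentioned in the AntAngelMed post, the core idea is that each sampled response is scored relative to the other responses drawn for the same prompt. The sketch below shows only that group-relative normalization, in plain Python. The function name, the use of a population standard deviation, and the `eps` value are assumptions for illustration; the post does not describe AntAngelMed's reward models or hyperparameters.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize the rewards of one prompt's sampled responses within their group.

    ASSUMPTION: population standard deviation plus a small eps to avoid
    division by zero when every response receives the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]
```

Responses that beat their group's average get a positive advantage and the rest get a negative one, so no separate value network is needed to provide a baseline.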

Meta just cut the inference memory-bandwidth cost of byte-level LLMs by up to 92%.

No tokenizer. No subword vocabulary. Just raw bytes, and now parallel generation.

Here's how BLT-Diffusion works:

→ Standard BLT generates 1 byte at a time (slow)
→ BLT-D generates a full block of bytes in parallel per step
→ BLT-S uses BLT's own decoder as a speculative drafter, with no extra model
→ BLT-DV drafts via diffusion and verifies autoregressively with the same weights

Result: up to 92% memory-bandwidth reduction vs BLT. Translation quality holds.

Full analysis: marktechpost.com/2026/05/11/met…
Paper: arxiv.org/pdf/2605.08044
@AIatMeta @JulieKallini @ArtidoroPagnoni @TomLimi @gargighosh @LukeZettlemoyer @XiaochuangHan @sriniiyer88 @ChrisGPotts @stanfordnlp
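
The draft-then-verify pattern behind BLT-S and BLT-DV can be illustrated with a generic greedy speculative-decoding check: keep the longest run of drafted bytes the autoregressive verifier agrees with, then take the verifier's byte at the first disagreement. This is a toy sketch and not Meta's implementation. `verify_draft`, `toy_next_byte`, and `TARGET` are hypothetical names, and a real system would score the whole draft in one parallel forward pass instead of looping.

```python
TARGET = b"hello world"  # stands in for what the verifier would generate on its own


def toy_next_byte(context):
    """Hypothetical greedy verifier: returns the next byte of TARGET."""
    return TARGET[len(context)]


def verify_draft(prefix, draft, next_byte):
    """Accept drafted bytes until the verifier disagrees.

    Returns the accepted bytes, plus the verifier's own byte at the first
    mismatch, so every call makes at least one byte of progress.
    """
    accepted = []
    context = list(prefix)
    for proposed in draft:
        expected = next_byte(context)
        if proposed != expected:
            accepted.append(expected)  # correct the draft and stop
            break
        accepted.append(proposed)
        context.append(proposed)
    return bytes(accepted)
```

For example, `verify_draft(b"hel", b"lo wXrld", toy_next_byte)` keeps `b"lo w"` from the draft and substitutes the verifier's byte for the wrong `X`. The saving comes from accepting several bytes per verifier pass when the draft is mostly right.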

Feedforward layers account for 80%+ of LLM compute, and for any given token, most of that computation lands on zero-valued activations. Researchers from Sakana AI and NVIDIA released TwELL and a set of CUDA kernels that finally make that sparsity exploitable on modern GPUs.

Here's the part that is very interesting: sparse ops have mostly run slower than dense ops on NVIDIA GPUs. The overhead from converting activations to a sparse format cancelled every theoretical saving. That's the paradox this new research fixes.

Here's the breakdown:

→ TwELL (Tile-wise ELLPACK): A new sparse format built directly into the matmul kernel epilogue. No extra kernel launch. No extra global memory read. No synchronization overhead.
→ Fused inference kernel: Takes gate activations in TwELL format and performs up + down projections together. The hidden state is never written to global memory.
→ Hybrid sparse format for training: Routes rows into compact ELL or dense backup dynamically. It handles the non-uniform sparsity patterns that make training hard without becoming brittle.
→ The training recipe: Two changes only. Replace SiLU with ReLU and add L1 regularization at coefficient 2×10⁻⁵. Same LR, same optimizer, same batch size.
→ 2B model results on H100 PCIe:
🟢 +20.5% inference throughput
🟢 +21.9% training step throughput
🟢 −17.0% energy per token
🟢 Accuracy: 49.1% dense → 48.8% sparse
→ It scales the right way: Average non-zero activations drop from 39 (0.5B) to 24 (2B). Gains grow with model size instead of shrinking.

All kernels are openly released.

So, basically, it's not about smaller models. It's about skipping the computation that was always wasted.

Full Analysis with Visuals/Guide: marktechpost.com/2026/05/11/sak…
Paper: arxiv.org/pdf/2603.23198
Repo: github.com/SakanaAI/spars…
Technical details: pub.sakana.ai/sparser-faster…
@SakanaAILabs @NVIDIAAI @nvidia
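
As a rough illustration of the ELLPACK idea that TwELL builds on, the sketch below packs ReLU activations into fixed-width value and column-index arrays, then multiplies by a dense weight while touching only the stored non-zeros. It is plain Python for readability, operates on whole rows instead of GPU tiles, and raises an error where the post describes routing overflow rows to a dense backup. All function names are hypothetical, and none of this reflects the released CUDA kernels.

```python
def relu(row):
    """Zero out non-positive activations."""
    return [x if x > 0.0 else 0.0 for x in row]


def to_ell(rows, width):
    """Pack each row's non-zeros into fixed-width value/index arrays (ELLPACK).

    Unused slots are padded with value 0.0 and column index -1.
    """
    values, indices = [], []
    for row in rows:
        nonzeros = [(x, j) for j, x in enumerate(row) if x != 0.0]
        if len(nonzeros) > width:
            raise ValueError("row has more non-zeros than the ELL width")
        pad = width - len(nonzeros)
        values.append([x for x, _ in nonzeros] + [0.0] * pad)
        indices.append([j for _, j in nonzeros] + [-1] * pad)
    return values, indices


def ell_matmul(values, indices, weight):
    """Multiply ELL-packed activations by a dense (hidden x out) weight matrix."""
    out_dim = len(weight[0])
    outputs = []
    for vals, cols in zip(values, indices):
        acc = [0.0] * out_dim
        for x, j in zip(vals, cols):
            if j < 0:  # padding slot, nothing to compute
                continue
            for k in range(out_dim):
                acc[k] += x * weight[j][k]
        outputs.append(acc)
    return outputs
```

The work in `ell_matmul` scales with the number of stored non-zeros per row, not with the hidden width, which is the saving the post refers to when it cites 24 to 39 average non-zero activations.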