Nick Gallo

371 posts

@nickmgallo

https://t.co/K9uNCm6y4A American patriot | PhD in computer science | Counter-institutional movement: great books, nature, western culture

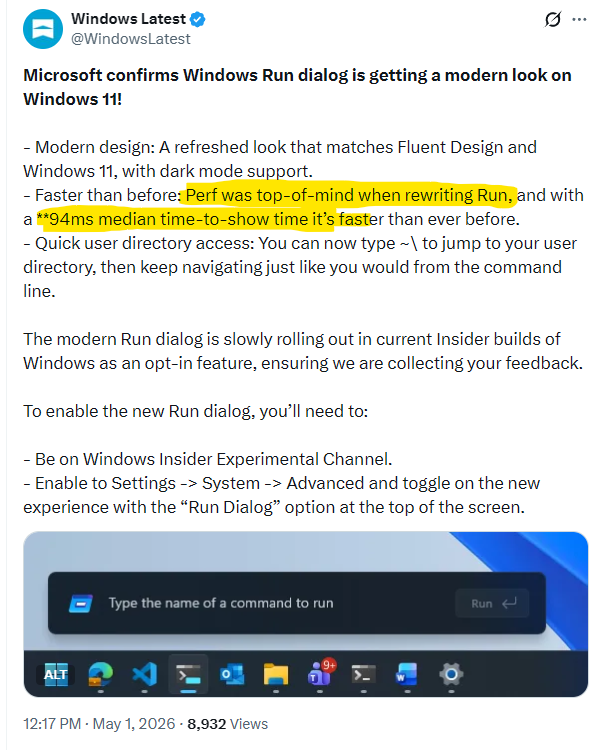

The economics of AI coding tools are crumbling. Cost per developer has doubled because the costs of Claude Code and Microsoft Copilot are rising even as bugs remain a problem, all consistent with Nvidia's statement that AI is more expensive than humans. futurism.com/artificial-int…

Interesting article on treating agent output like compiler output (and why) skiplabs.io/blog/codegen_a…

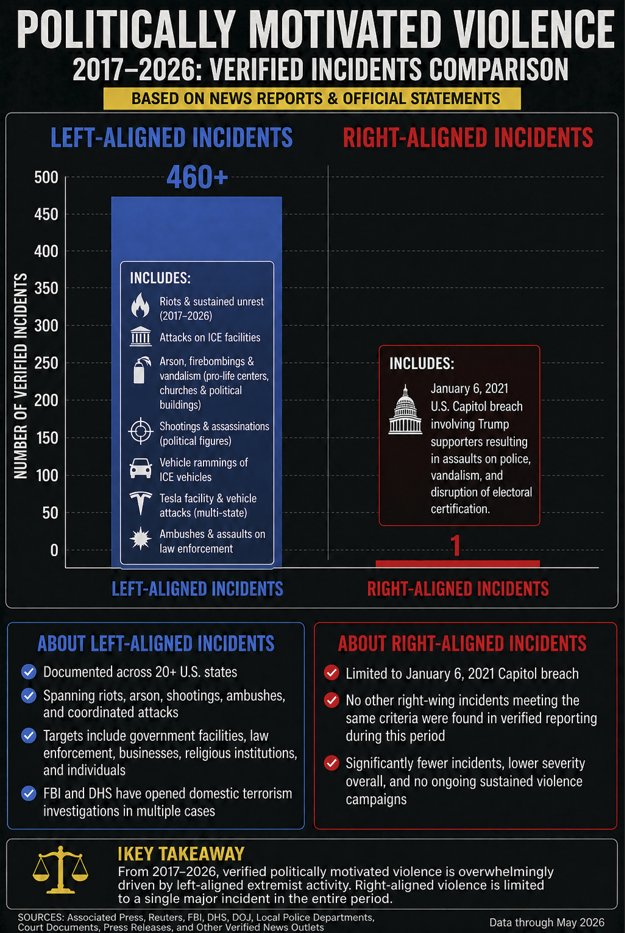

Universities are the leading incubator of extremist political terrorism in America

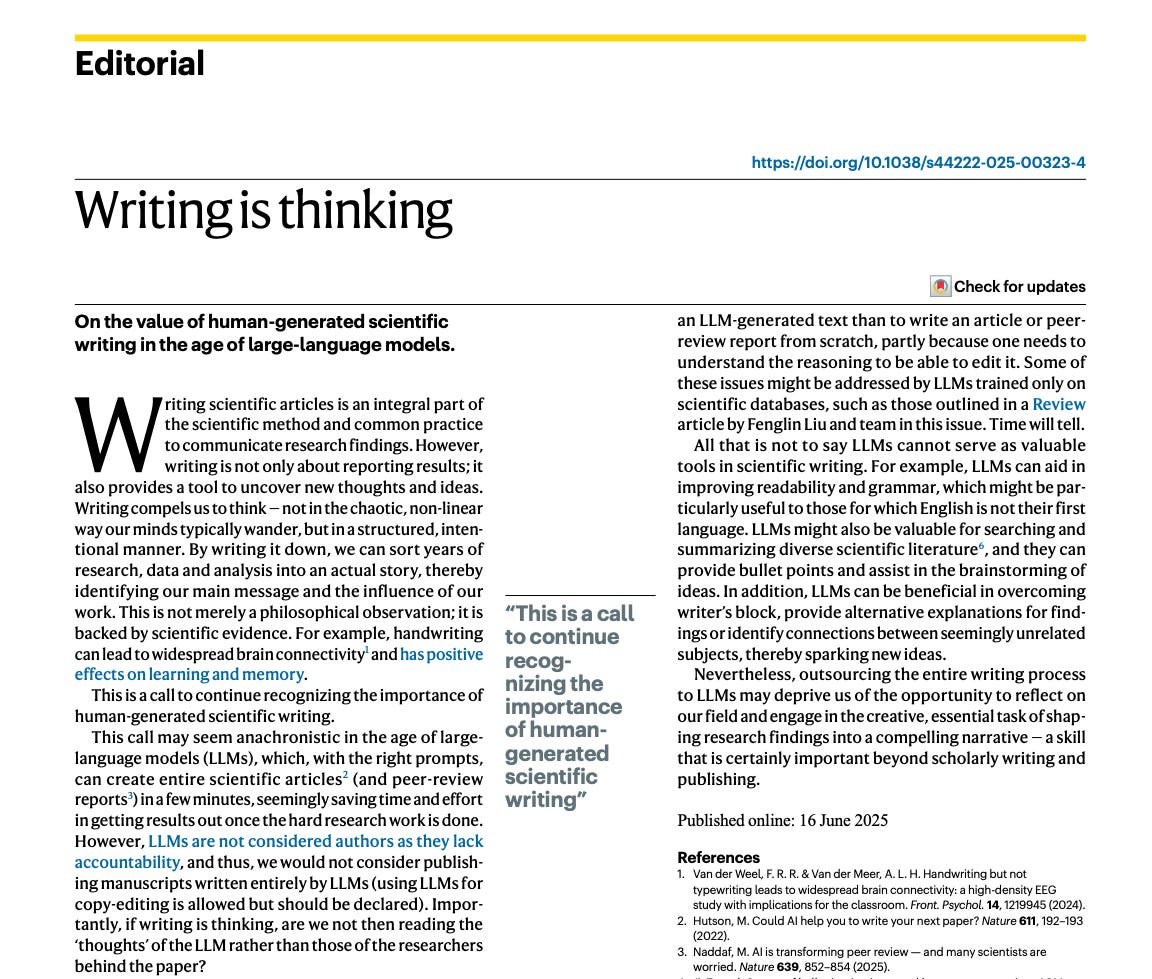

So it turns out that writing is thinking. It's the same process. "Writing compels us to think — not in the chaotic, non-linear way our minds typically wander, but in a structured, intentional manner." Outsourcing writing to LLMs is THE SAME THING as outsourcing thinking.

Had enough of the grifting? Done with recycled political commentary and cringe entertainment? So am I. I want to have conversations with people actually Doing The Thing. Interviews you actually want to hear. Community dedicated to building together. Sign up below. It's time.

New Microsoft paper shows that current AI assistants often damage documents during long editing jobs. Even the frontier models still ended up corrupting about 25% of document content on average, while many other models damaged far more. The problem is that delegated AI work only makes sense if a model can keep a document correct across many edits, not just do 1 step well. The paper tests this with reversible task pairs, where a model edits a file and then tries to undo that edit, so a reliable system should return to the original document. The authors built real work setups across 52 domains, from coding and science to accounting and music notation, and ran 19 models through 20 editing interactions. The failures were usually not lots of tiny slips but occasional big mistakes that silently broke parts of the document and then compounded over time. Agentic tool use did not help in their tests, and bigger files, longer workflows, and irrelevant extra documents made the corruption worse. The reason this matters is that current LLMs can look strong in short demos or narrow coding tasks yet still be unreliable delegates for long real-world document work. ---- Paper Link: arxiv.org/abs/2604.15597 Paper Title: "LLMs Corrupt Your Documents When You Delegate"
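The reversible-task-pair idea described in the post above can be sketched roughly as follows. This is only an illustration, not the paper's actual harness: the function names (`round_trip_similarity`, `add_prefix`, `strip_prefix`, `lossy_strip_prefix`) are hypothetical, the "editors" are plain Python functions standing in for model calls, and `difflib` similarity is an assumed stand-in for whatever corruption metric the authors use.

```python
import difflib
from typing import Callable

# An "edit" is any function from document text to document text.
# In a real evaluation this would be a model call; here it is a stand-in.
Edit = Callable[[str], str]


def round_trip_similarity(original: str, forward: Edit, inverse: Edit,
                          rounds: int = 1) -> float:
    """Apply forward then inverse `rounds` times and compare to the original.

    Returns a similarity ratio in [0, 1]; 1.0 means the document survived
    every edit/undo pair unchanged.
    """
    doc = original
    for _ in range(rounds):
        doc = inverse(forward(doc))
    return difflib.SequenceMatcher(None, original, doc).ratio()


def add_prefix(doc: str) -> str:
    # Forward edit: quote every line.
    return "\n".join("> " + line for line in doc.split("\n"))


def strip_prefix(doc: str) -> str:
    # Faithful inverse: remove the two-character quote prefix from every line.
    return "\n".join(line[2:] for line in doc.split("\n"))


def lossy_strip_prefix(doc: str) -> str:
    # Unreliable inverse: undoes the prefix but silently drops the last line,
    # mimicking an occasional large error that compounds over rounds.
    lines = doc.split("\n")
    return "\n".join(line[2:] for line in lines[:-1])


original = "alpha\nbeta\ngamma\ndelta"
faithful = round_trip_similarity(original, add_prefix, strip_prefix, rounds=20)
lossy_1 = round_trip_similarity(original, add_prefix, lossy_strip_prefix, rounds=1)
lossy_2 = round_trip_similarity(original, add_prefix, lossy_strip_prefix, rounds=2)
print(faithful, lossy_1, lossy_2)
```

The faithful pair returns to the original no matter how many rounds are run, while the lossy pair scores lower with each additional round, which is the compounding pattern the post describes.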

A useful exercise for the autists among us would be to identify every one of the disruptive students and then compile a dossier that could be sent to the bar when they try to become lawyers