Dimitriadis Nikos

@nikdimitriadis

student researcher at Google DeepMind working on post-training. PhD @ EPFL.

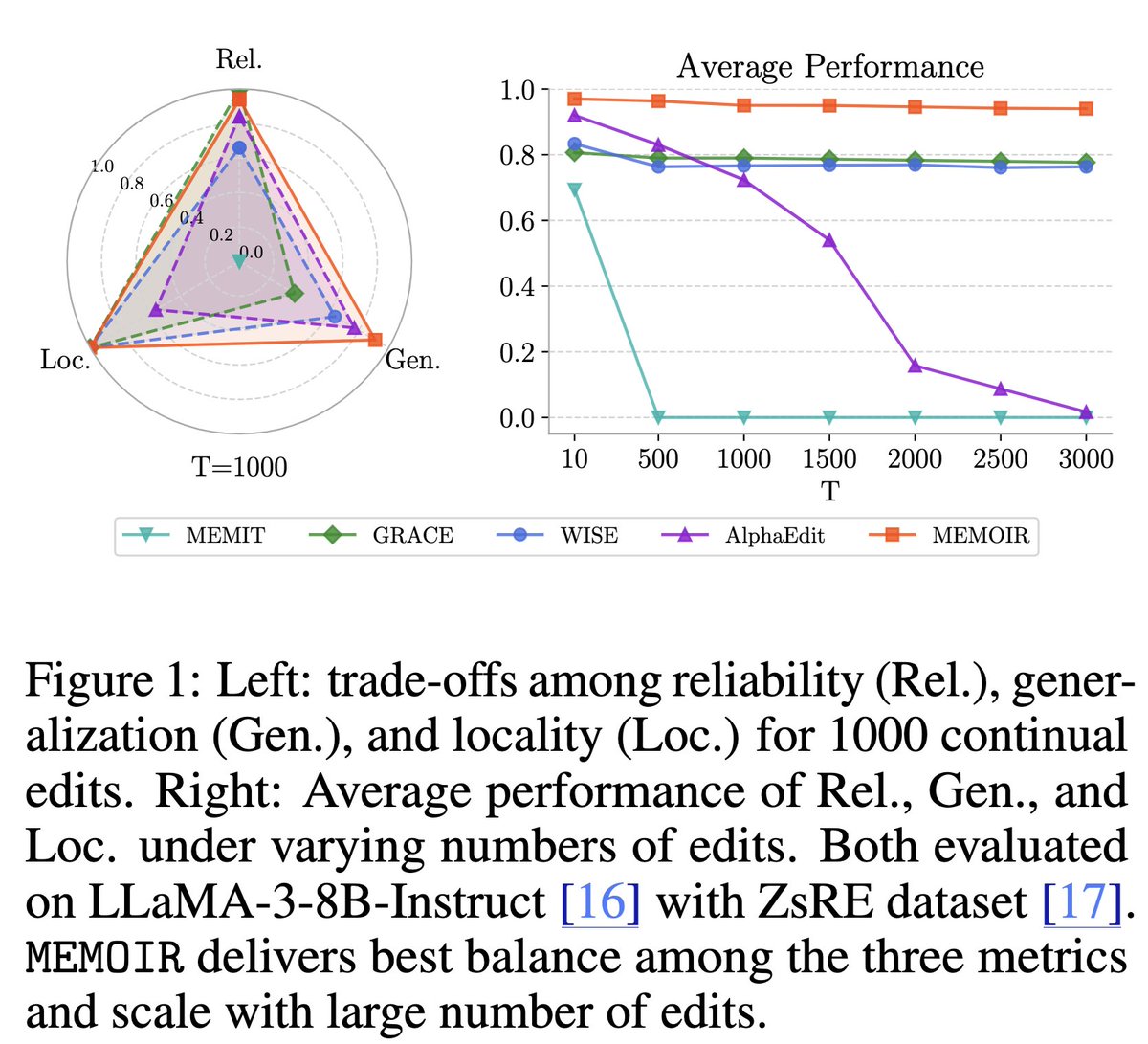

How can we inject new knowledge into LLMs without full retraining, forgetting, or breaking past edits? We introduce MEMOIR 📖— a scalable framework for lifelong model editing that reliably rewrites thousands of facts sequentially using a residual memory module. 🔥 🧵1/7

New Online! Decoding the interactions and functions of non-coding RNA with artificial intelligence bit.ly/4kNblk6

Are you interested in graph generation, from molecular discovery 🧪 to social networks 🌐? You’ll love DeFoG 🌬️😶‍🌫️, our new framework that delivers state-of-the-art performance in diverse graph generation tasks with unmatched efficiency! 🤩 📄: arxiv.org/abs/2410.04263 🧵1/9

Happy to share that DeFoG: Discrete Flow Matching for Graph Generation will be showcased as a Spotlight Poster at #ICML2025! -> Explore the paper: arxiv.org/abs/2410.04263 -> Open-source code: github.com/manuelmlmadeir… Looking forward to your feedback on our repository!

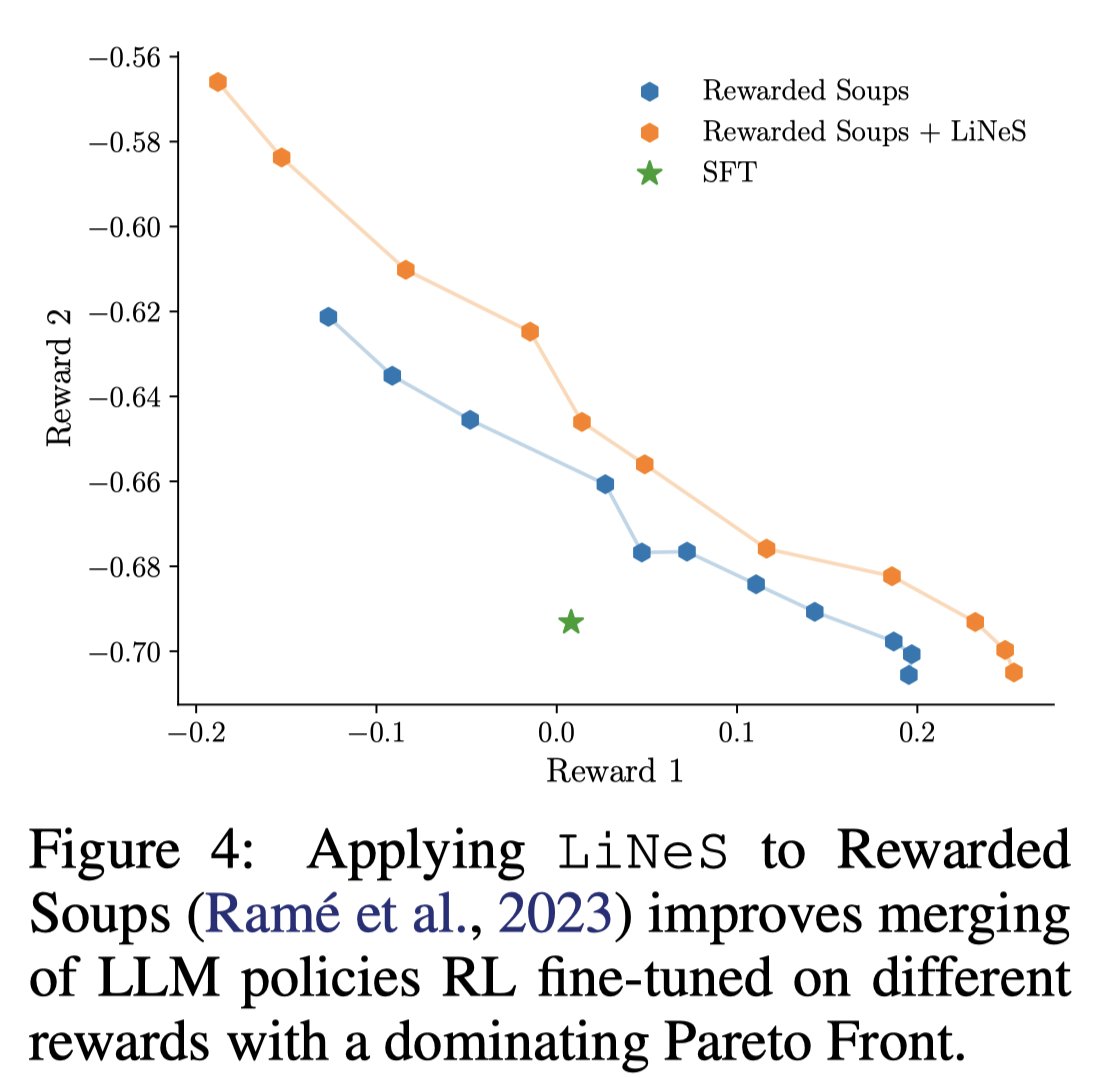

Fine-tuning pre-trained models leads to catastrophic forgetting: gains on one task cause losses on others. These issues worsen in multi-task merging scenarios. Enter LiNeS 📈, a method to solve them with ease. 🔥 🌐: lines-merging.github.io 📜: arxiv.org/abs/2410.17146 🧵 1/11

@francoisfleuret I am just starting to work on Task Arithmetic for multi-task meta learning and this paper is a huge step forward! I'll certainly use LiNeS. Btw, why is all your research so cool? Really, cool is the word.

Wouldn't it be great if we could merge the knowledge of 20 specialist models into a single one without losing performance? 💪🏻 Introducing our new ICML paper "Localizing Task Information for Improved Model Merging and Compression". 🎉 📜: arxiv.org/pdf/2405.07813 🧵1/9

Excited to share Lottery Ticket Adaptation (LoTA)! We propose a sparse adaptation method that finetunes only a sparse subset of the weights. LoTA mitigates catastrophic forgetting and enables model merging by breaking the destructive interference between tasks. 🧵👇
