Alessandro Favero

133 posts

@alesfav

Physics-AI fellow @Cambridge_Uni explaining the scientific principles behind AI. Formerly @EPFL, @Amazon AI Labs.

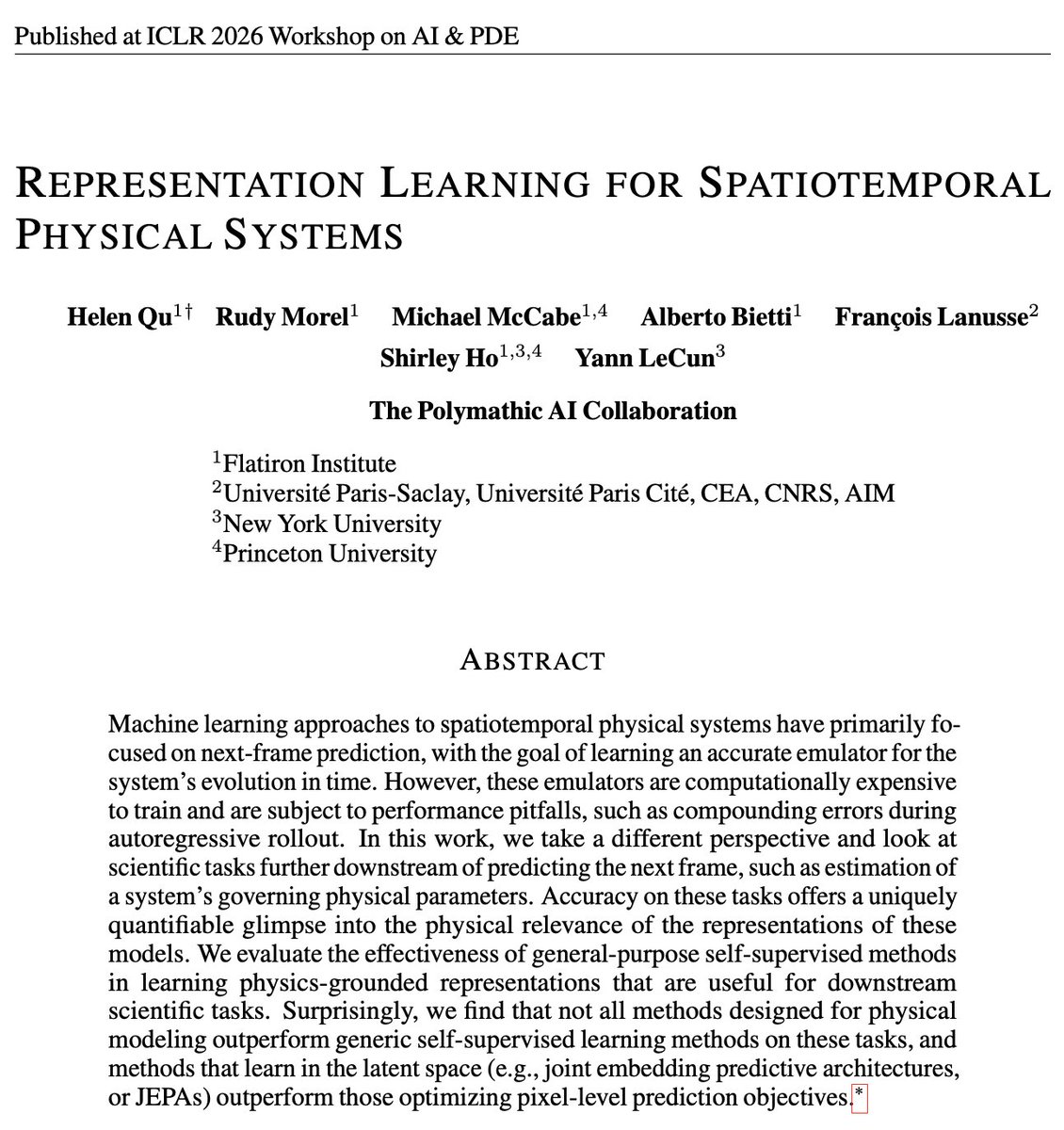

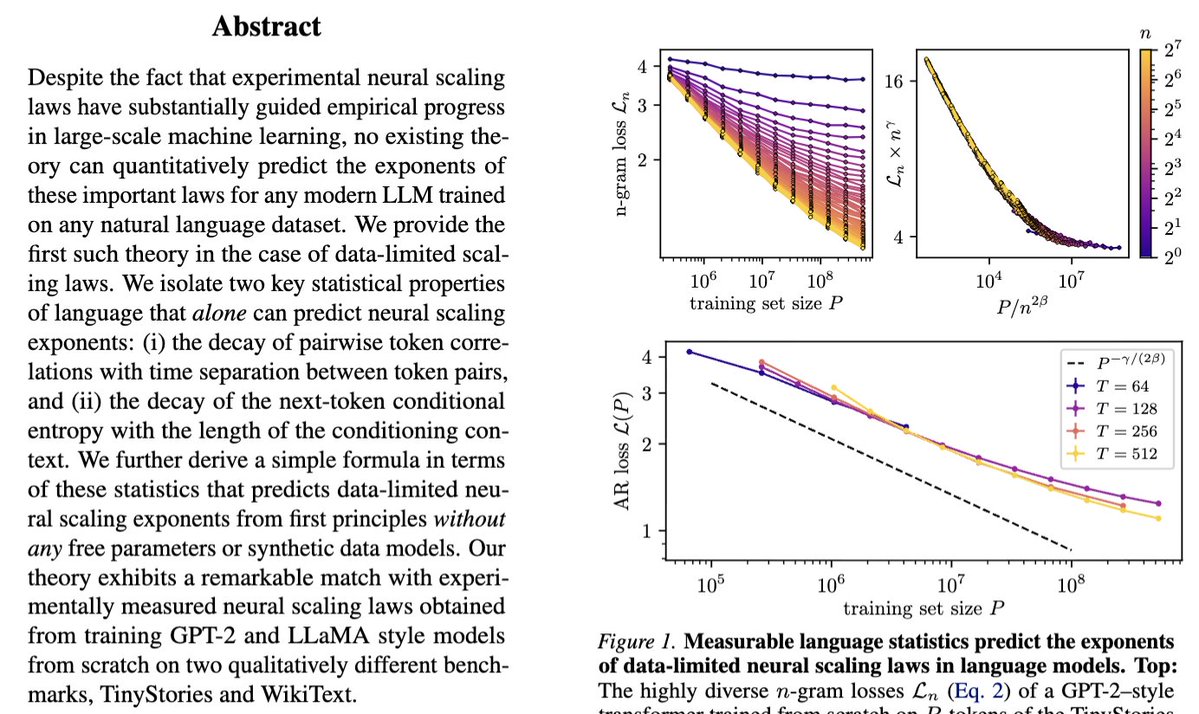

Our new paper "Deriving neural scaling laws from the statistics of natural language" arxiv.org/abs/2602.07488 led by @Fraccagnetta & @AllanRaventos w/ Matthieu Wyart makes a breakthrough! We can predict data-limited neural scaling law exponents from first principles using the structure of natural language itself for the very first time! If you give us two properties of your natural language dataset: 1) How conditional entropy of the next token decays with conditioning length. 2) How pairwise token correlations decay with time separation. Then we can give you the exponent of the neural scaling law (loss versus data amount) through a simple formula! The key idea is that as you increase the amount of training data, models can look further back in the past to predict, and as long as they do this well, the conditional entropy of the next token, conditioned on all tokens up to this data-dependent prediction time horizon, completely governs the loss! This gets us our simple formula for the neural scaling law!

❓ How do LLMs learn hierarchical structure from sentences alone? 🚨 We build PCFG-like synthetic datasets with two knobs---hierarchy + ambiguity---and derive a correlation-based learning mechanism that predicts the sample complexity of deep nets. Results 👇

🚨 We derive data-limited neural scaling exponents directly from measurable corpus statistics. No synthetic data models, only two ingredients: (1) decay of token-token correlations with separation; (2) decay of next-token conditional entropy with context length.

Tired of going back to the original papers again and again? Our monograph: a systematic and fundamental recipe you can rely on! 📘 We’re excited to release 《The Principles of Diffusion Models》, with @DrYangSong, @gimdong58085414, @mittu1204, and @StefanoErmon. It traces the core ideas that shaped diffusion modeling and explains how today’s models work, why they work, and where they’re heading. 🧵You’ll find the link and a few highlights in the thread. We’d love to hear your thoughts and join the discussion! ⚡ Stay tuned for our markdown version, where you can drop your comments!

The Physics of Data and Tasks: Theories of Locality and Compositionality in Deep Learning ift.tt/9B0HFnC

How can we inject new knowledge into LLMs without full retraining, forgetting, or breaking past edits? We introduce MEMOIR 📖— a scalable framework for lifelong model editing that reliably rewrites thousands of facts sequentially using a residual memory module. 🔥 🧵1/7

@EPFL, @ETH_en and #CSCS today released Apertus, Switzerland's first large-scale, multilingual language model (LLM). As a fully open LLM, it serves as a building block for developers and organizations to create their own applications: cscs.ch/science/comput… #Apertus #AI