nilenso

@nilenso

Employee-owned programmer cooperative in Bangalore.

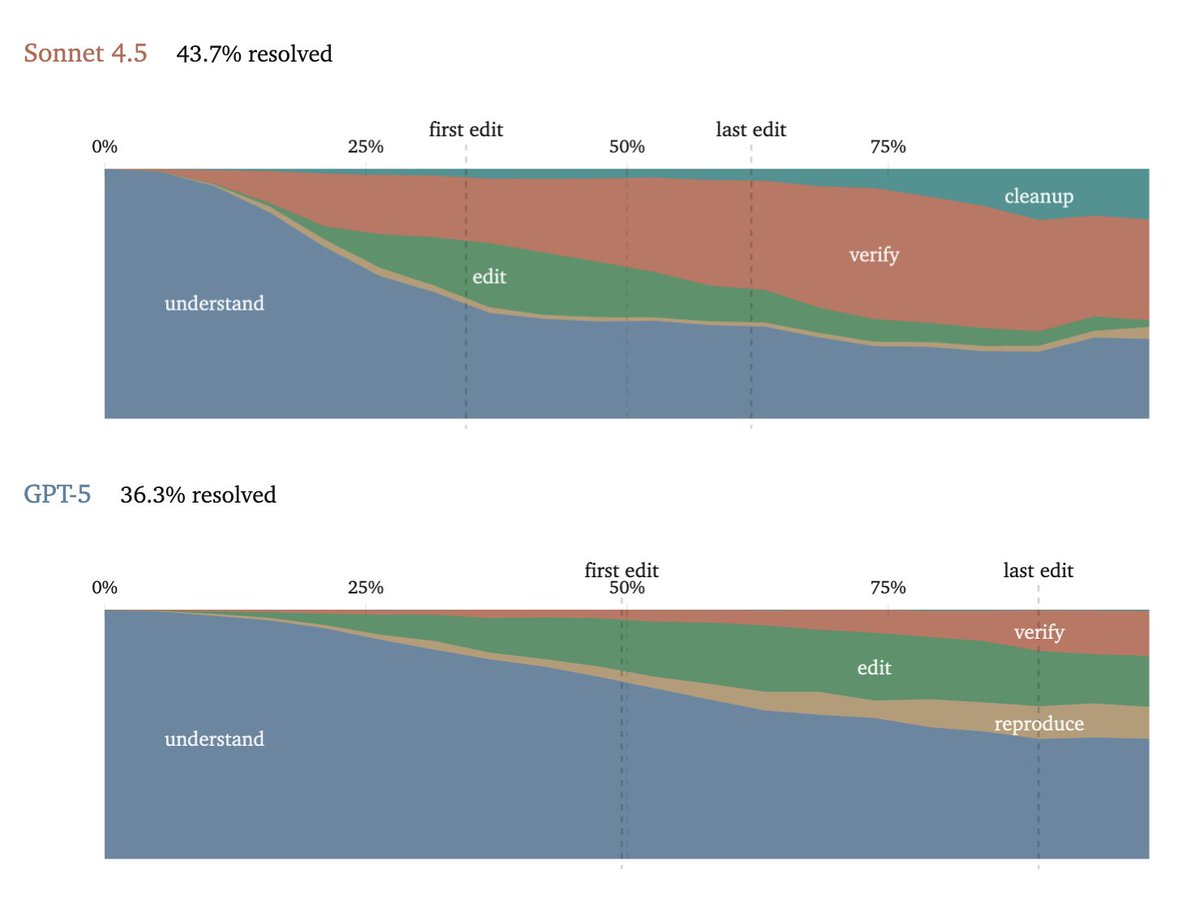

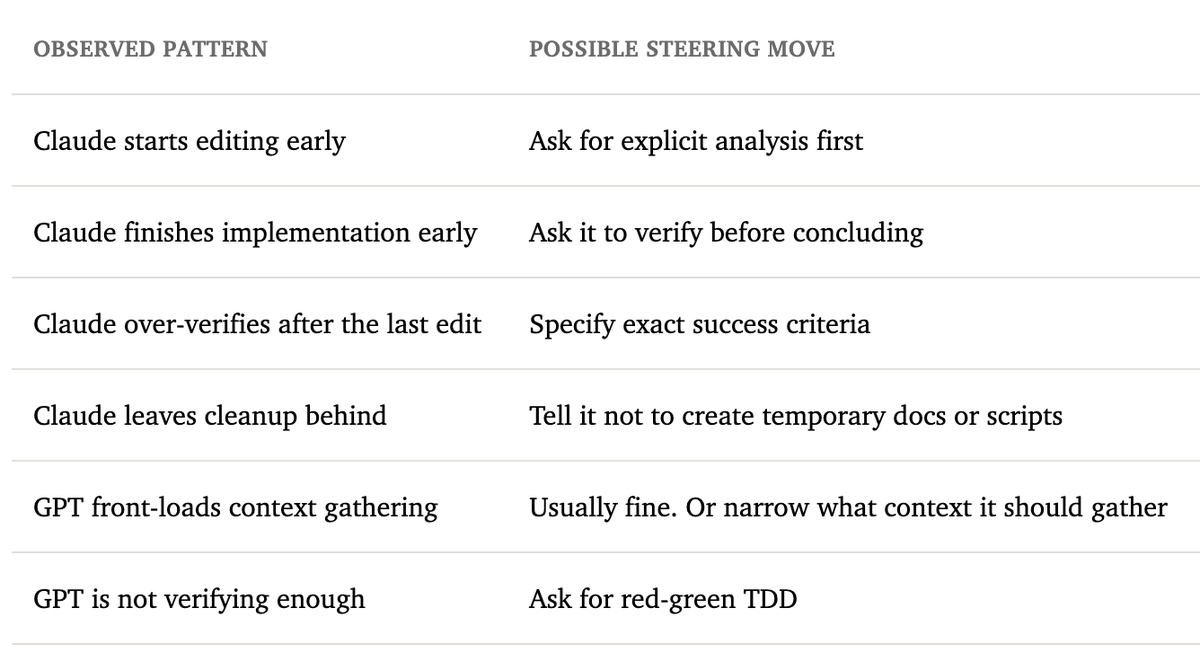

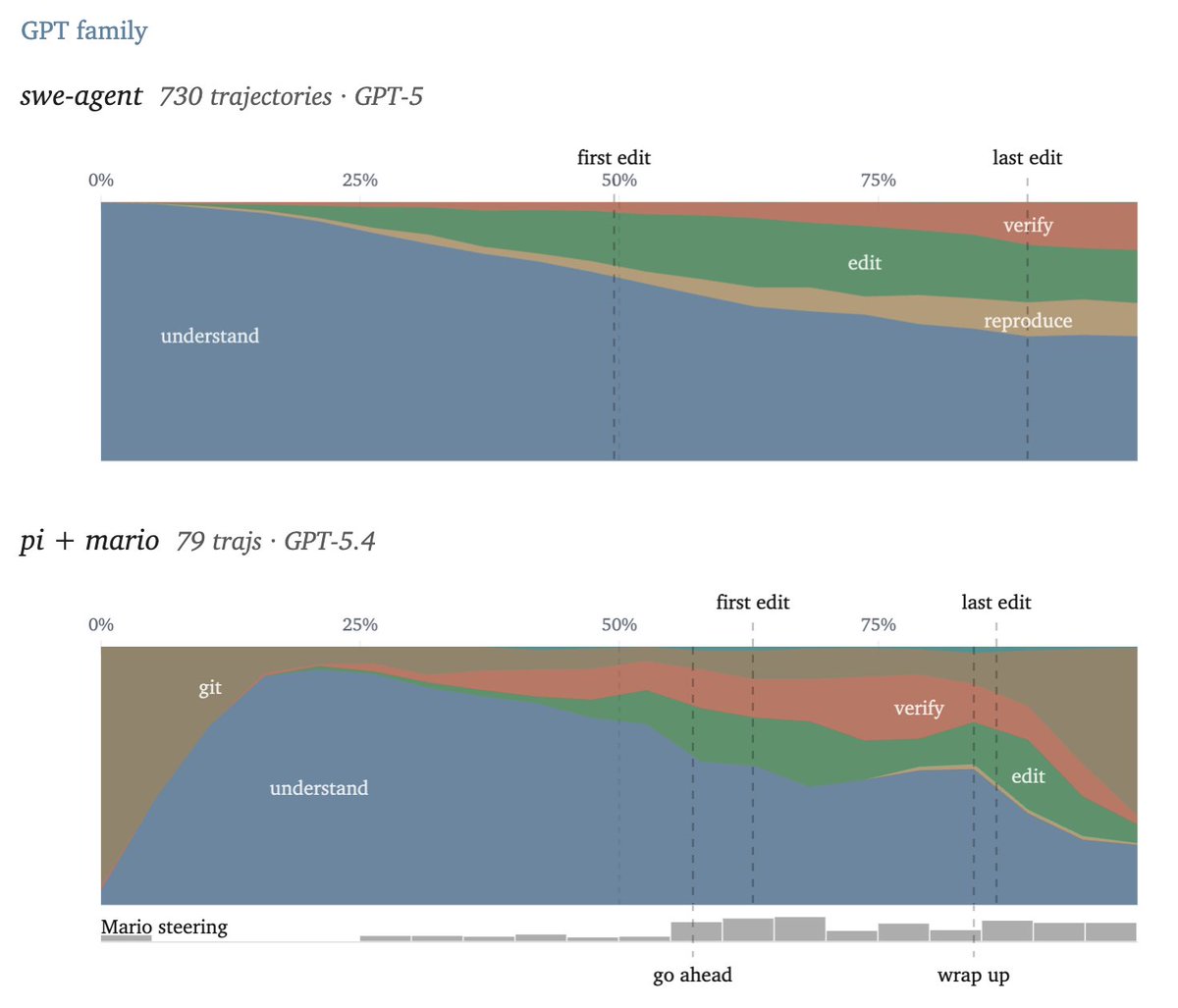

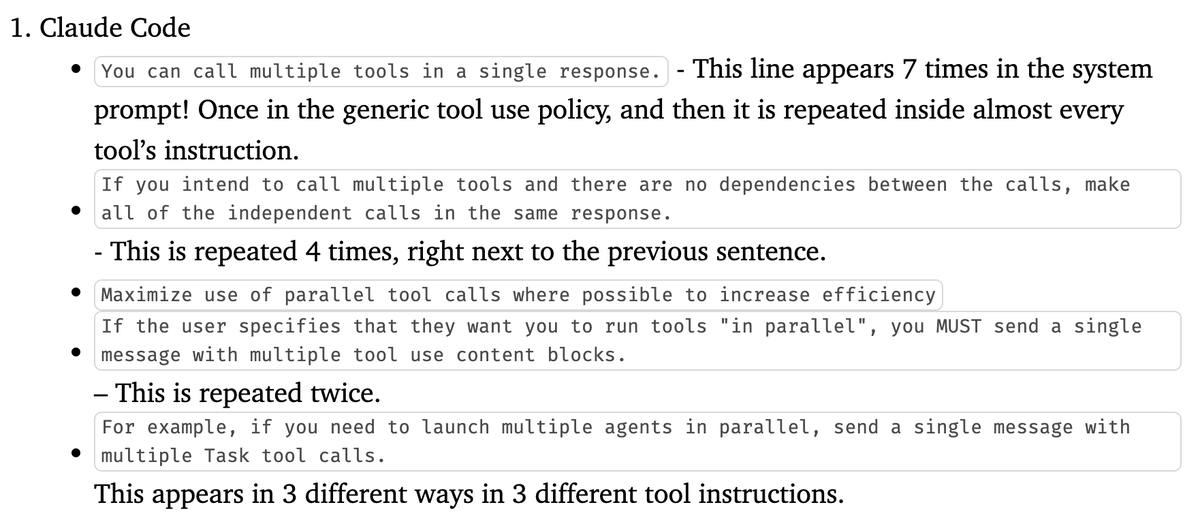

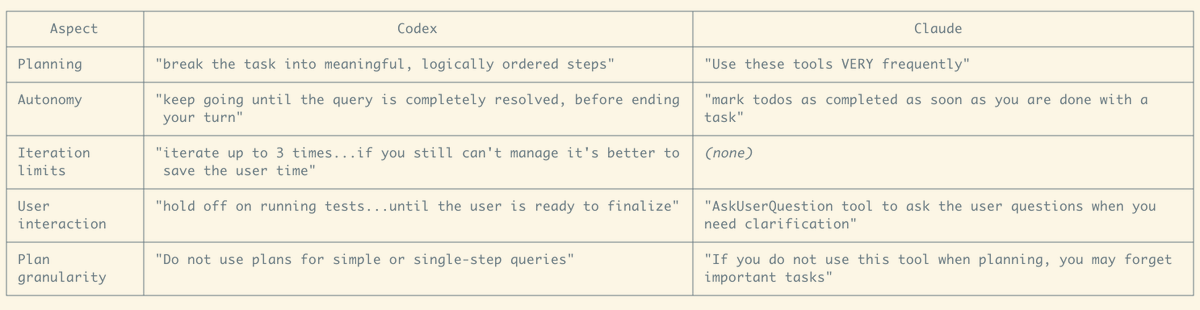

I wanted to check this on newer models, but no SWE-bench Pro trajectories exist for Opus 4.6 / GPT-5.4, so I pulled @badlogicgames' issue-fixing trajectories and ran the same analysis. Thanks for putting those out in public; they make this kind of analysis possible.

Opus's first edit sits at 47% in your pi sessions, vs 35% for Sonnet 4.5 on SWE-bench. The harness and model differ too, so I can't isolate the prompt's effect, but the shape shifts in the direction you'd expect from the explicit analyze-dont-edit prompt. I think this comparison shows the effect of human steering through explicit analysis / go-ahead / wrap-up cues.
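For readers who want to try a similar measurement, here is a minimal sketch of one way a "first edit position" could be computed. It assumes the percentage means how far into a trajectory the first file edit occurs, and that each trajectory can be reduced to an ordered list of tool-call names. The tool names `edit` and `write` and both function names are hypothetical, not taken from the pi or SWE-bench trajectory formats.

```python
from typing import Iterable, Optional, Sequence

# Hypothetical names for tool calls that count as edits; real harnesses differ.
EDIT_TOOLS = frozenset({"edit", "write"})


def first_edit_position(
    tool_calls: Sequence[str], edit_tools: frozenset = EDIT_TOOLS
) -> Optional[float]:
    """Fraction of the trajectory that elapses before the first edit.

    0.0 means the very first step was an edit; None means no edit occurred.
    """
    for index, name in enumerate(tool_calls):
        if name in edit_tools:
            return index / len(tool_calls)
    return None


def mean_first_edit_position(
    trajectories: Iterable[Sequence[str]],
) -> Optional[float]:
    """Average first-edit position over trajectories that contain an edit."""
    positions = [
        p for p in (first_edit_position(t) for t in trajectories) if p is not None
    ]
    return sum(positions) / len(positions) if positions else None
```

Under these assumptions, a higher mean indicates that more of the session is spent reading and analyzing before the first change. Trajectories with no edit are skipped rather than counted as 100%, which is a design choice that affects the average.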

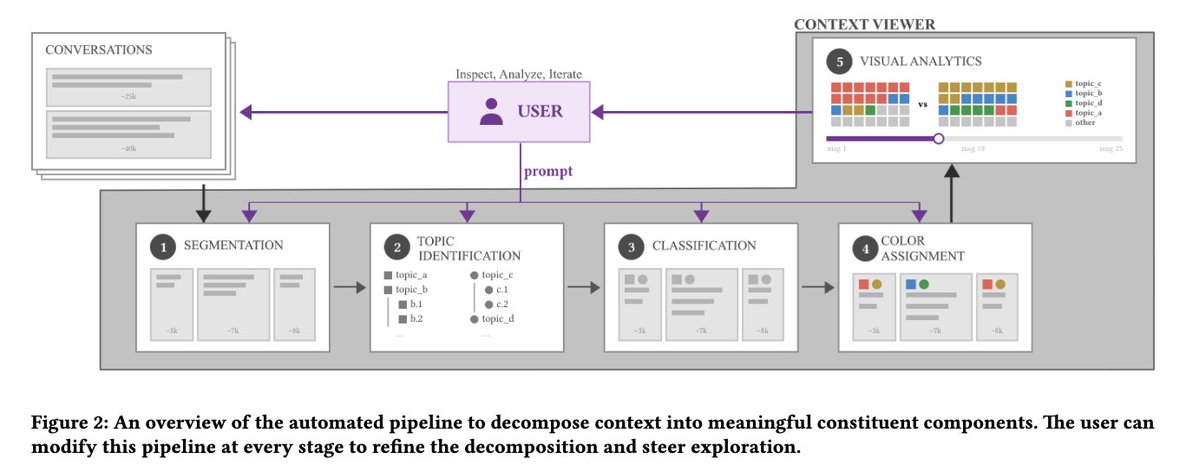

New on the Anthropic Engineering blog: tips on how to build more efficient agents that handle more tools while using fewer tokens. Code execution with the Model Context Protocol (MCP): anthropic.com/engineering/co…