Synslius

@noirminded

Anything aesthetic? //*Producer

Team's latest paper, "HyperMem: Hypergraph Memory for Long-Term Conversations," has been accepted at ACL 2026.

HyperMem introduces a hypergraph-based hierarchical memory architecture that captures high-order associations across extended dialogues, moving beyond pairwise relations to unify scattered content into coherent memory units. On the LoCoMo benchmark, it achieves state-of-the-art performance with 92.73% LLM-as-a-judge accuracy.

Long-term memory is core to what we are building at EverMind. This work reflects our commitment to advancing the foundations of how AI systems remember, reason, and maintain meaningful interactions over time.

Read the full paper: arxiv.org/abs/2604.08256

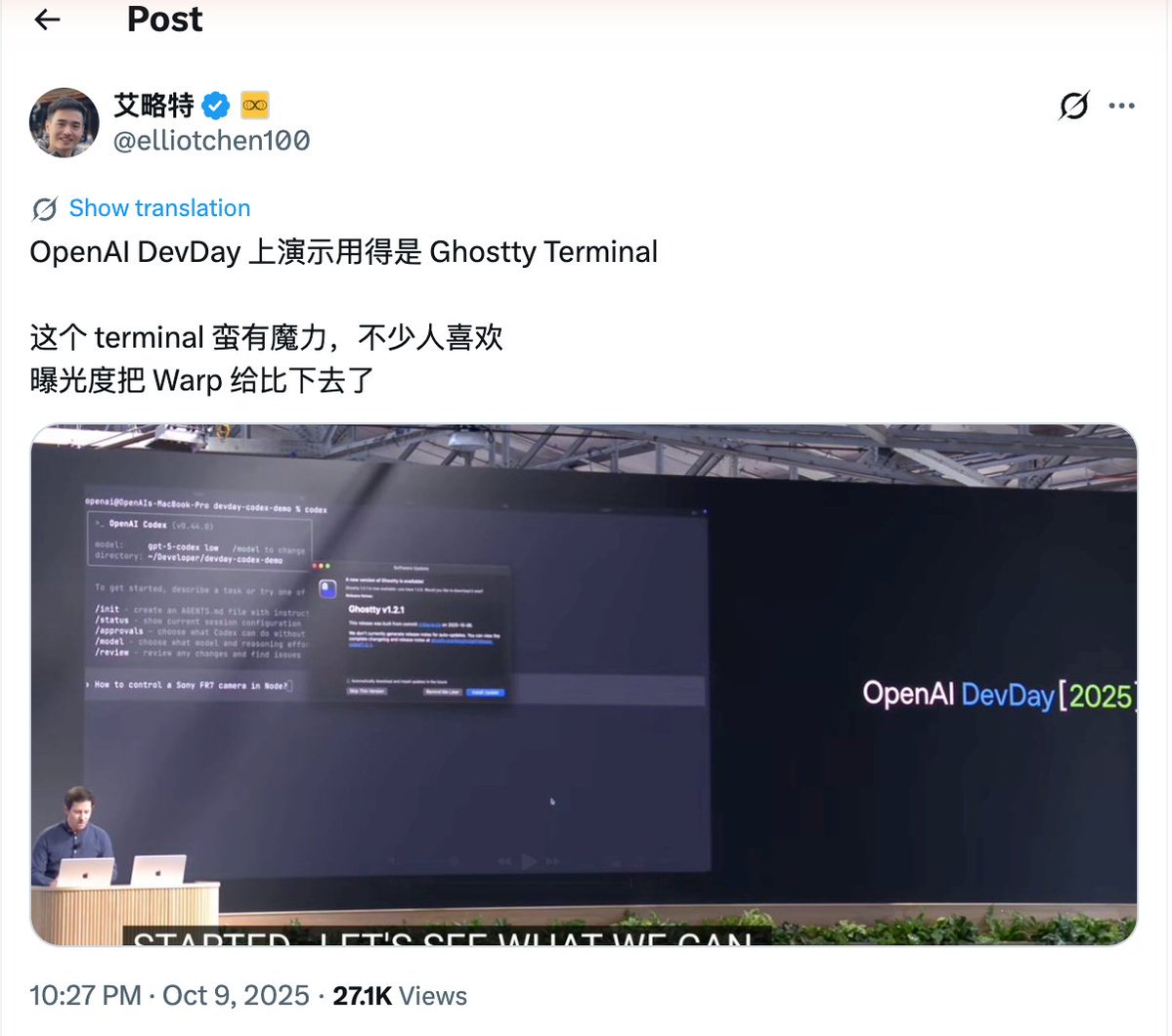

7. Terminal & Environment Setup

The team loves Ghostty! Multiple people like its synchronized rendering, 24-bit color, and proper Unicode support.

For easier Claude-juggling, use /statusline to customize your status bar to always show context usage and current git branch. Many of us also color-code and name our terminal tabs, sometimes using tmux, with one tab per task/worktree.

Use voice dictation (hit fn x2 on macOS). You speak 3x faster than you type, and your prompts get way more detailed as a result.

More tips: code.claude.com/docs/en/termin…
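
To make the status bar tip concrete, here is a rough sketch of a script that builds a one-line status showing context usage and the current git branch. It is not from the post: the payload keys `used_tokens` and `max_tokens` are hypothetical, and the helper names `git_branch` and `format_status` are made up for illustration. The real data your tool passes to a status line command may look different, so check its docs before wiring this in.

```python
import subprocess


def git_branch(cwd="."):
    """Return the current git branch name, or "" when it cannot be determined."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "--abbrev-ref", "HEAD"],
            cwd=cwd,
            capture_output=True,
            text=True,
            timeout=2,
        )
    except (OSError, subprocess.SubprocessError):
        # git is missing, the directory does not exist, or the call timed out.
        return ""
    return result.stdout.strip() if result.returncode == 0 else ""


def format_status(payload, branch):
    """Build a one-line status string from a session payload and a branch name."""
    # ASSUMPTION: "used_tokens" and "max_tokens" are hypothetical keys,
    # not a documented schema. Adapt them to whatever your tool provides.
    used = payload.get("used_tokens")
    limit = payload.get("max_tokens")
    parts = []
    if isinstance(used, (int, float)) and isinstance(limit, (int, float)) and limit > 0:
        parts.append(f"ctx {100 * used / limit:.0f}%")
    if branch:
        parts.append(f"git:{branch}")
    return " | ".join(parts) if parts else "no status"
```

Calling `format_status({"used_tokens": 50000, "max_tokens": 200000}, git_branch())` inside a repository would give something like `ctx 25% | git:<branch>`. Keeping the formatting separate from the git call makes it easy to swap in different fields later.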