NonBioS

@nonbios

The AI Software Dev with its own computer

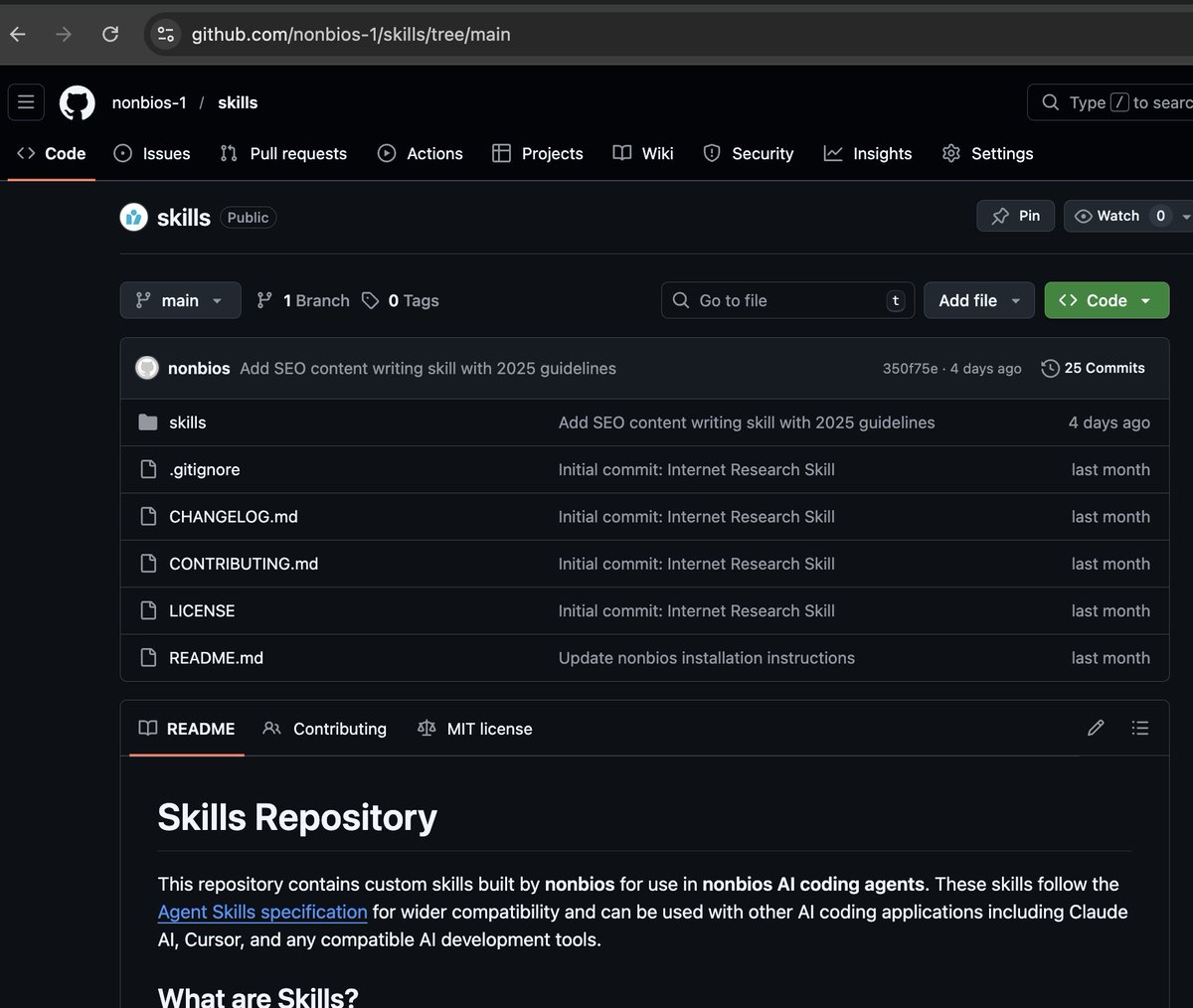

Two new models: nonbios-1.135 and nonbios-1.141

We're releasing two experimental models today that represent significant architectural breakthroughs in context engineering for AI-powered software development. But first, let me explain the foundation these models are built on.

Strategic Forgetting: Less Memory, More Focus

Most AI agents try to remember everything. We took the opposite approach - we invented Strategic Forgetting, an algorithm that continuously prunes an agent's memory to keep it sharp and focused.

Think about how you work. When you're deep in debugging a complex issue, you naturally filter out background noise - the conversation happening across the room, the email notification, the tangential code comments. You keep what matters for the task at hand and let everything else fade. That's exactly what Strategic Forgetting does.

Today's releases represent a fundamental reimagining of how context engineering works within Strategic Forgetting. We've built a completely new architecture that doesn't just prune better - it understands better.

nonbios-1.135: Speed Meets Intelligence

nonbios-1.135 is nearly 2x faster than nonbios-1.13, but speed is only part of the story. We achieved this through two fundamental innovations.

First, we parallelized key parts of our Strategic Forgetting algorithm, yielding a 25% speed boost. But the real breakthrough came from our new context engineering architecture.

This new architecture helps nonbios-1.135 "converge" on correct solutions dramatically faster. It remembers key details twice as well as 1.13, which means it not only works faster, it also solves problems where 1.13 would simply give up.

The cumulative effect is roughly 2x performance on software engineering tasks. And since we charge by the minute, faster execution means more bang for your buck.

nonbios-1.141: The Bug Hunter

Built on Claude Opus 4.6 and incorporating the same architectural advances as 1.135, nonbios-1.141 is something different entirely.
In our testing, it solved complex bugs that every other model in our lineup failed on. Not some bugs. Not most bugs. Every single challenging case we threw at it. Even we were shocked by the results.

Here's a real example: We had a complex React bug that nonbios-1.13 struggled with for almost 10 hours, proposing multiple wrong solutions along the way. nonbios-1.141 isolated the exact issue in 5 minutes and one-shot the fix.

But 1.141 isn't perfect. On another React bug, it initially proposed the wrong solution, but when we pointed out the inadequacy, it quickly converged on the correct fix.

The combination of our new context engineering architecture and Claude Opus 4.6's capabilities creates debugging performance we haven't seen before.

There's a tradeoff - 1.141 is dramatically slower than other models. But when you're hunting a critical bug that's been burning hours or days, speed takes a back seat to actually solving the problem.
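
To put the nonbios-1.135 speed figures in concrete terms, here is a rough back-of-the-envelope sketch. It reads the 25% parallelization boost as a 1.25x factor and assumes the two gains multiply, which the post does not state. The per-minute rate and the 60-minute baseline task are made-up numbers for illustration only, not NonBioS pricing.

```python
# Figures quoted in the post: ~25% from parallelization, ~2x overall vs nonbios-1.13.
PARALLEL_SPEEDUP = 1.25  # assumption: "25% speed boost" read as a 1.25x factor
TOTAL_SPEEDUP = 2.0


def implied_architecture_speedup(total: float, parallel: float) -> float:
    """Factor left over for the new architecture, ASSUMING the gains multiply."""
    return total / parallel


def billed_cost(minutes: float, rate_per_minute: float) -> float:
    """Cost of one task under per-minute billing."""
    return minutes * rate_per_minute


# Hypothetical inputs, for illustration only.
RATE_PER_MINUTE = 0.10    # made-up price per minute
BASELINE_MINUTES = 60.0   # made-up task duration on nonbios-1.13

arch = implied_architecture_speedup(TOTAL_SPEEDUP, PARALLEL_SPEEDUP)
new_minutes = BASELINE_MINUTES / TOTAL_SPEEDUP
old_cost = billed_cost(BASELINE_MINUTES, RATE_PER_MINUTE)
new_cost = billed_cost(new_minutes, RATE_PER_MINUTE)

print(f"implied architecture speedup: {arch:.2f}x")
print(f"task time: {BASELINE_MINUTES:.0f} min -> {new_minutes:.0f} min")
print(f"cost: {old_cost:.2f} -> {new_cost:.2f}")
```

Under these assumptions, the architecture would account for roughly a 1.6x factor on top of parallelization, and a task that runs in half the time is billed at half the cost.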