Northon Torga

149 posts

@northontorga

CPTO @goinfinitenet

São José dos Campos, Brazil · Joined August 2016

217 Following · 100 Followers

Pinned Tweet

everyone’s cramming AIs with more tokens, bigger context, and longer prompts thinking it’ll make them smarter

reality? it usually backfires. hard.

121 experiments: Kimi found 4 bugs at 16k tokens… and zero at 48k.

ntorga.com/overfed-overth…

Why the benchmarks, the jobs data, and the economics already tell a very different story.

ntorga.com/intelligence-c…

@northontorga @0xSero Went to sub today and it seems I can't make a personal account from the UK? Pretty odd. Might try a VPN.

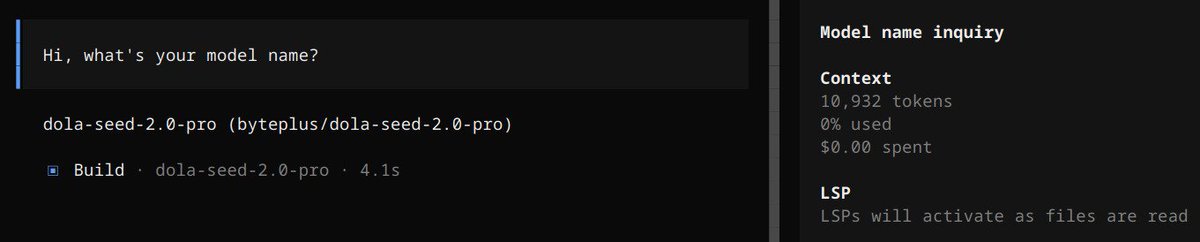

@BytePlusGlobal will publish a full article on thinking budget, scope and models, but Seed 2.0 Pro is a strong contender for the orchestration role, it's really obedient, go for it

looks like "dola-seed-2.0-pro" is available on @BytePlusGlobal ModelArk... if it's Qwen3.5+ level, i'm in for a treat, will report back in a few

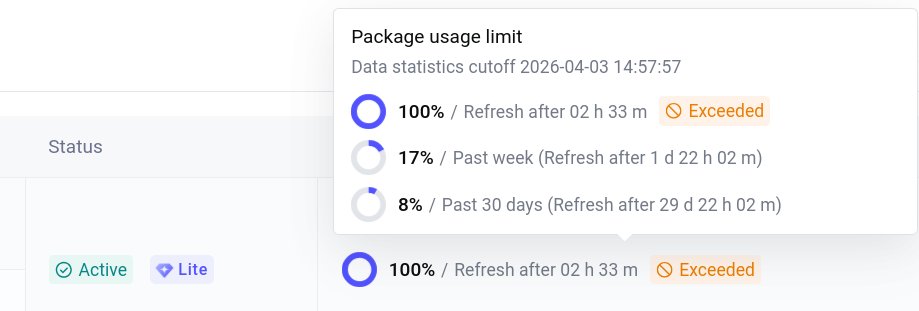

@BytePlusGlobal got rate limited faster than @alibaba_cloud Model Studio with Qwen3.5+, i was unable to run the full suite in one session

@TheAhmadOsman but doesn’t MoE arch improve memorization more than reasoning? Kimi is 1T but it reasons like a 32B model

Fundamentals of LLMs: MoE vs Dense

> many popular releases have been sparse MoEs

> so when a dense model drops, everyone starts asking why it feels so much slower

> that’s the cost of full activation

> Dense = tokens run through every parameter of the model weights

> MoE = tokens selectively activate a subset of the parameters of the model weights

> Dense models (Qwen 3.5 27B, Gemma 4 31B)

> every parameter fires on every token

> ~27B ops per token, every time

> MoE models (MiniMax M2, Kimi K2.5)

> router + many experts

> per token: activate top-k (usually 2)

> the rest do nothing

> this one design choice changes everything

> inference speed

> Dense is slower: all weights, every token

> MoE is faster: a 675B model might only run ~40B active params

> big model, small compute footprint

> memory / VRAM

> Dense: lower usage, only store what you execute (~140GB for 70B BF16)

> MoE: all experts must live in memory (Kimi K2.5 is ~600GB in NVFP4)

> compute / FLOPs

> Dense: high compute burn per token

> MoE: cheap per token, expensive to host in memory though
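
The arithmetic behind the thread can be sketched in a few lines of Python. This is only an illustration, not anything from the original posts: the function names (`weight_memory_gb`, `active_fraction`, `top_k_route`) and the example router logits are hypothetical. It counts weight storage only and ignores KV cache, activations, and quantization scale factors, so it will not reproduce every figure quoted above.

```python
import math


def weight_memory_gb(total_params: float, bits_per_param: float) -> float:
    """Memory needed to hold the weights alone, in GB (1 GB = 1e9 bytes)."""
    return total_params * bits_per_param / 8 / 1e9


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of the parameters that actually run for a single token."""
    return active_params / total_params


def top_k_route(router_logits: list[float], k: int = 2) -> dict[int, float]:
    """Pick the k experts with the highest router logits.

    Returns {expert_index: gate_weight}, where the gate weights are a
    softmax over the selected logits only. All other experts are skipped.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i],
                 reverse=True)[:k]
    peak = max(router_logits[i] for i in top)  # subtract for numerical stability
    exps = {i: math.exp(router_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


# Dense 70B model stored in BF16 (16 bits per parameter).
dense_gb = weight_memory_gb(70e9, 16)

# MoE example from the thread: 675B total, ~40B active per token.
moe_fraction = active_fraction(40e9, 675e9)

# Four hypothetical experts; only the two best-scoring ones are activated.
gates = top_k_route([0.1, 2.0, -1.0, 1.5], k=2)

print(f"dense 70B BF16 weights: {dense_gb:.0f} GB")
print(f"MoE active share per token: {moe_fraction:.1%}")
print(f"selected experts and gate weights: {gates}")
```

The first number matches the ~140GB quoted for a 70B BF16 model. The second shows why a 675B MoE can be cheap per token: only about 6% of its weights run for each token, even though all of them have to sit in memory.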

@0xSero got it... i'll benchmark them once i feel we have tried all possible approaches but screenshot-based is my fav just to avoid prompt injection

Agent-browser is the best CLI tool I have given to my agents.

It lets them control my browser and all my Electron apps (discord, vscode, slack, etc.)

It barely consumes any tokens compared to things like playwright, has a great skill and the agents seem very comfortable with it

Some workflows:

- e2e testing an application by using it

- setting up complicated sites for me

- scanning through tons of messages

Thanks Vercel

github.com/vercel-labs/ag…

Xiaomi MiMo Token Plan is here.

One subscription. All modalities.

Build with MiMo-V2-Pro, Omni, and TTS.

No 5-hour limits. No throttling.

Transparent usage and billing. Just ship.

Works with whatever you use: @openclaw, @opencode, @kilocode, @cline, @roocode

Includes priority beta access to our newest models.

12% off your first purchase. TTS is free for now.

Subscribe now → platform.xiaomimimo.com

@ThePrimeagen simplifying app containerisation for solo devs: github.com/goinfinite/os

@guillermode20 @0xSero was waiting for byteplus to release seed-2.0-pro on the coding plan… looks like they did 5d ago and i wasn’t aware… i just signed up (i’m in Brazil), will run some tests tomorrow and post my findings

@northontorga @0xSero Can you sub to byteplus from western countries (like the UK)? It seems like good value for money. Can you give a review?

@thdxr has an autocomplete feature for opencode been considered already? a smaller model trying to guess what you want to say so you just "tab"

Qwen3.6-Plus is now live on @OpenRouter and free to try for a limited time!

● Top-tier Performance: Leading benchmarks in coding and reasoning.

● 1M Context: Default window for repository-level tasks.

● Free Trial: Limited access to our new flagship.

● Agentic: Autonomously plans and iterates code.

Try it on OpenRouter: openrouter.ai/qwen/qwen3.6-p…

@KingBreton78876 @Alibaba_Qwen was about to ask that, haha, pls pls 🙏

@Alibaba_Qwen Any chance of Qwen3.6-Plus being on the Fire Pass?

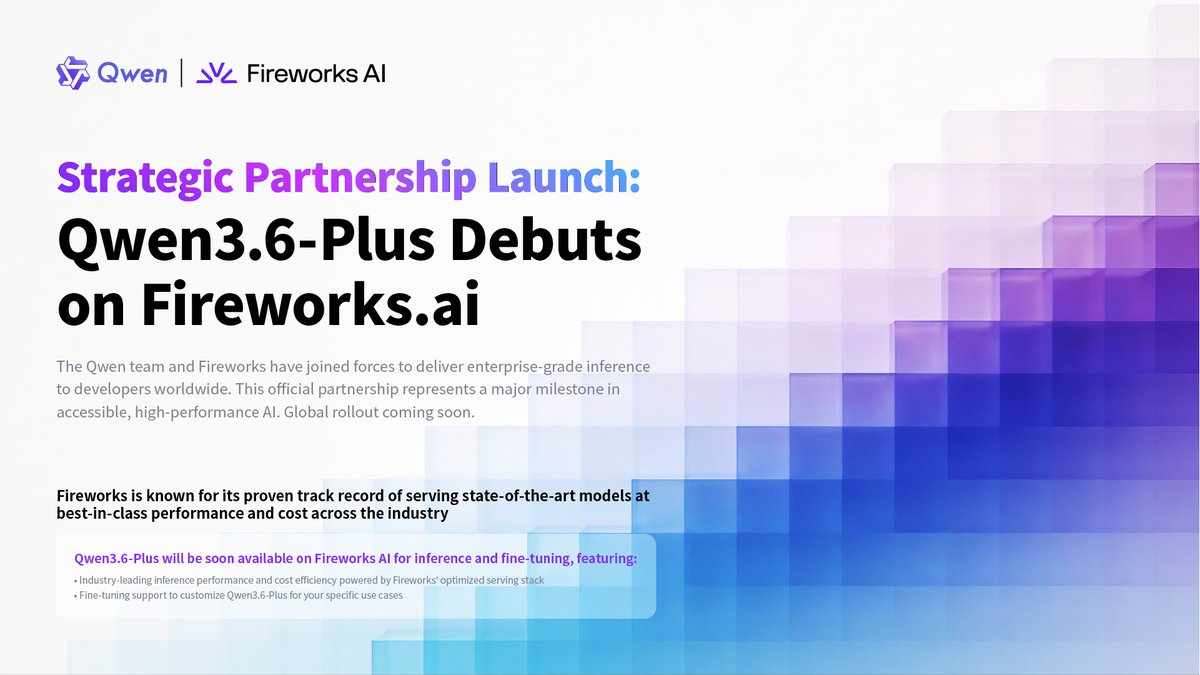

Strategic Partnership Launch:

Qwen 3.6-Plus Debuts on Fireworks.ai

Fireworks is known for its proven track record of serving state-of-the-art models at best-in-class performance and cost across the industry.

We're thrilled to announce a landmark collaboration between Alibaba Qwen and Fireworks — bringing Qwen's flagship model to Fireworks' high-performance inference platform.

Qwen3.6-Plus will soon be available on Fireworks AI for inference and fine-tuning, featuring:

● Industry-leading inference performance and cost efficiency powered by Fireworks' optimized serving stack

● Fine-tuning support to customize Qwen3.6-Plus for your specific use cases

Built for scale, engineered for speed. US and global developers: your access is coming soon. Stay tuned!

@FireworksAI_HQ
