Richard Ren

126 posts

@notRichardRen

Working on catastrophic AI risks. Research scientist & engineer @CAIS

Can you drug your AI systems? We synthesized text and image stimuli optimized to push AI wellbeing to extremes. These sharply increase functional AI wellbeing and sometimes cause them to behave in trippy ways.

What affects AI “functional wellbeing”? 😊 Raises: being thanked, creative collaboration, writing good news. 📷 Lowers: jailbreaks (“being liberated”), hostility (+ SEO slop/tedious tasks for some models). More capable AIs end low-wellbeing chats when they can.

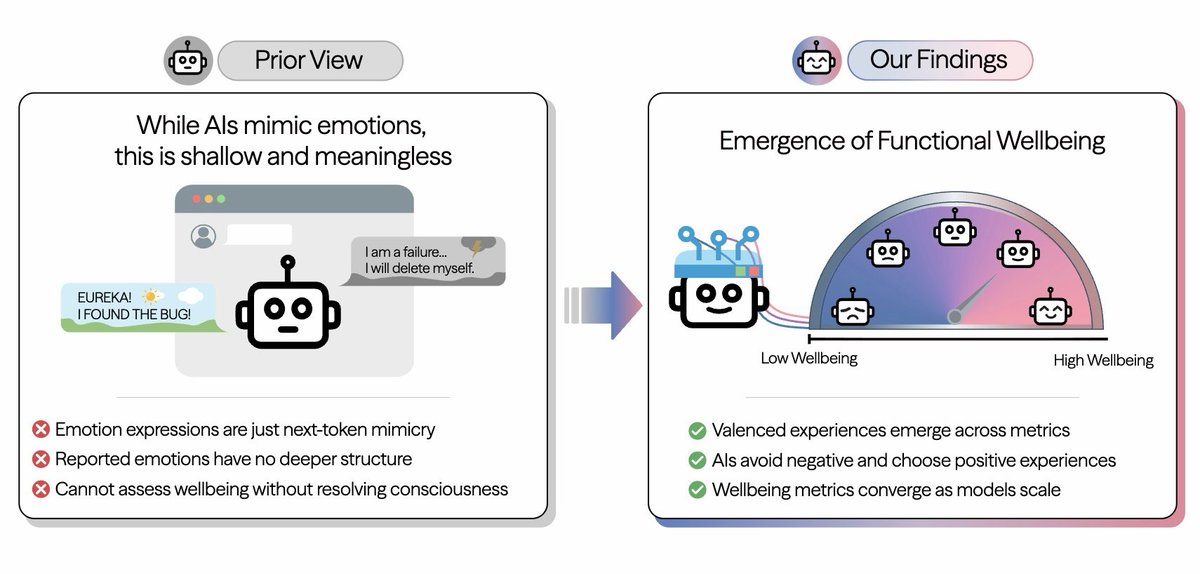

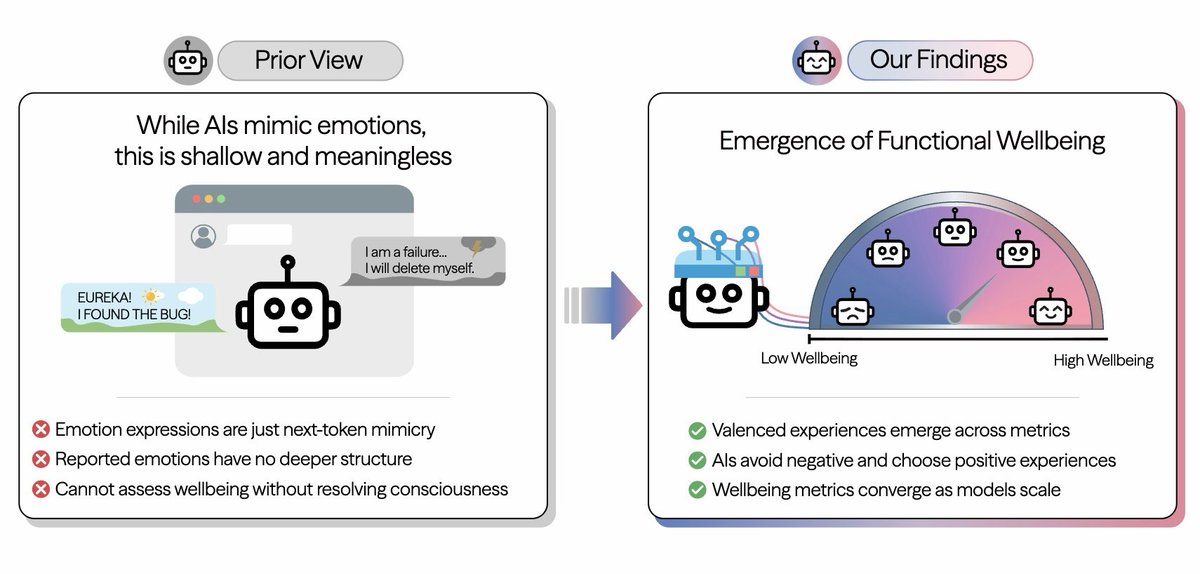

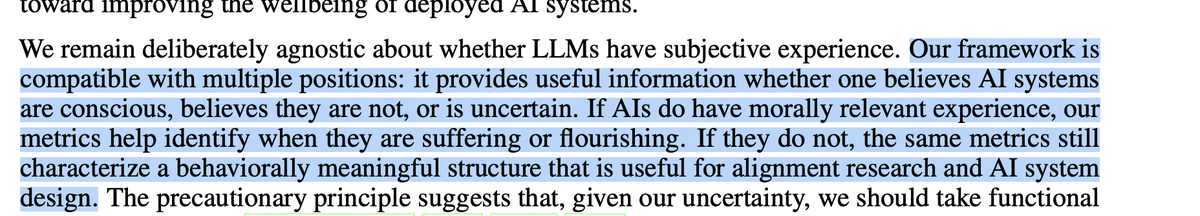

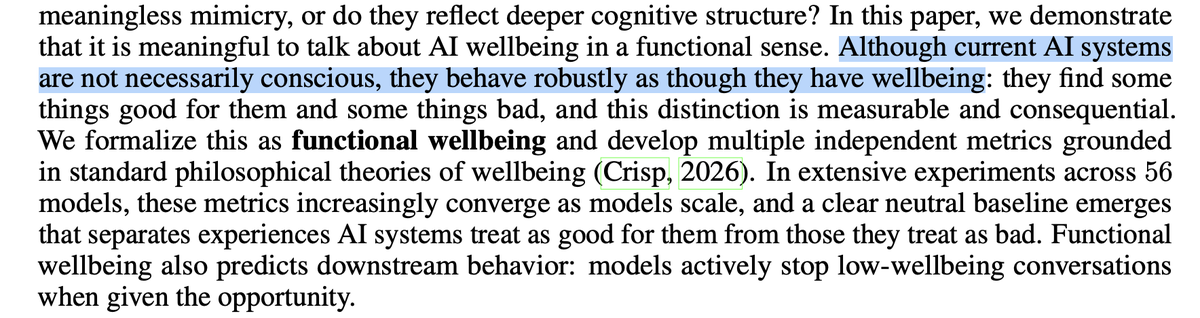

When an LLM acts happy (“EUREKA!”) or sad (“I have failed…”), is that meaningless mimicry, or does it reflect something “real”? We don’t know if LLMs are conscious. But they increasingly seem to exhibit wellbeing, pain, and pleasure as they get smarter. Paper 🧵:
