Peter Welinder

@npew

VP and GM @OpenAI

Enabled the Remote Control with my iPad, then my Codex Desktop is now unable to load the plugin page at all..... So frustrating. None of the OpenAI people actually care about these bug reports. I am so sick of it.

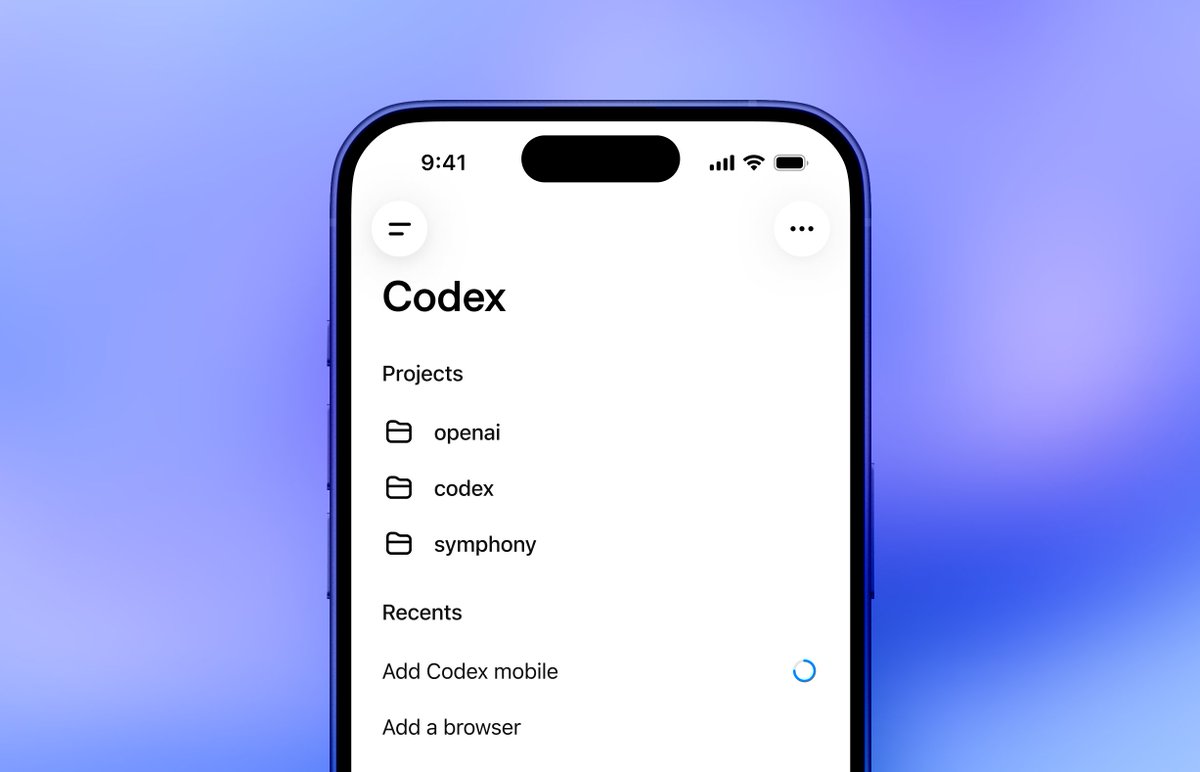

You've been asking for this one... Now in preview: Codex in the ChatGPT mobile app. Start new work, review outputs, steer execution, and approve next steps, all from the ChatGPT mobile app. Codex will keep running on your laptop, Mac mini, or devbox.

For 45 years, Berkeley built virtually no new housing. By the mid-2010s, it was the most expensive college town in America. Shortly thereafter, YIMBYs took over and kicked off a building boom. Today, nominal rents are below 2018 rates—remarkable progress on affordability.

The first ProgramBench task was just solved by GPT 5.5 high/xhigh. Interestingly, high/xhigh picked two different languages for the task (C vs Python). GPT 5.5 xhigh was significantly better than Opus 4.7 xhigh in all metrics. 🧵

Introducing the ChatGPT Futures Class of 2026: 26 honorees from the first graduating class to have had ChatGPT throughout all four years of university, who used AI to:
- Map 1.5M previously unknown objects in space
- Detect disaster survivors through walls and debris
- Make 100M+ galaxy images searchable
- Preserve endangered languages
- Build infrastructure to reroute 5M+ pounds of unsold inventory from landfills

Many people do not seem to want data centres built near them, despite the fact that they don't cause that much traffic and often generate a lot of local tax revenue. I suspect it's partly because they're ugly! My proposal:

OpenAI’s GPT-5.5 is the second model to complete one of our multi-step cyber-attack simulations end-to-end 🧵

Computer Use runs this use case 42% faster in today's Codex app update.