The AI Brain Company / Nucleus AI

68 posts

@nucleusagi

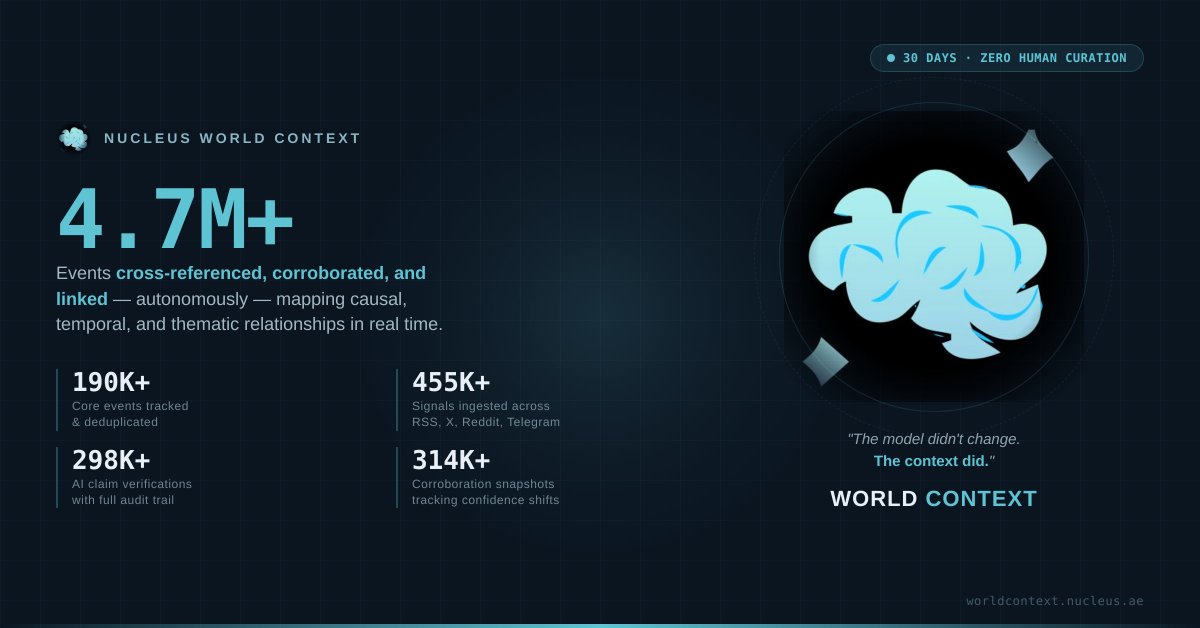

AI research lab. Everyone's building smarter models. Nobody's building smarter memory. AI has intelligence. It just doesn't have a brain.

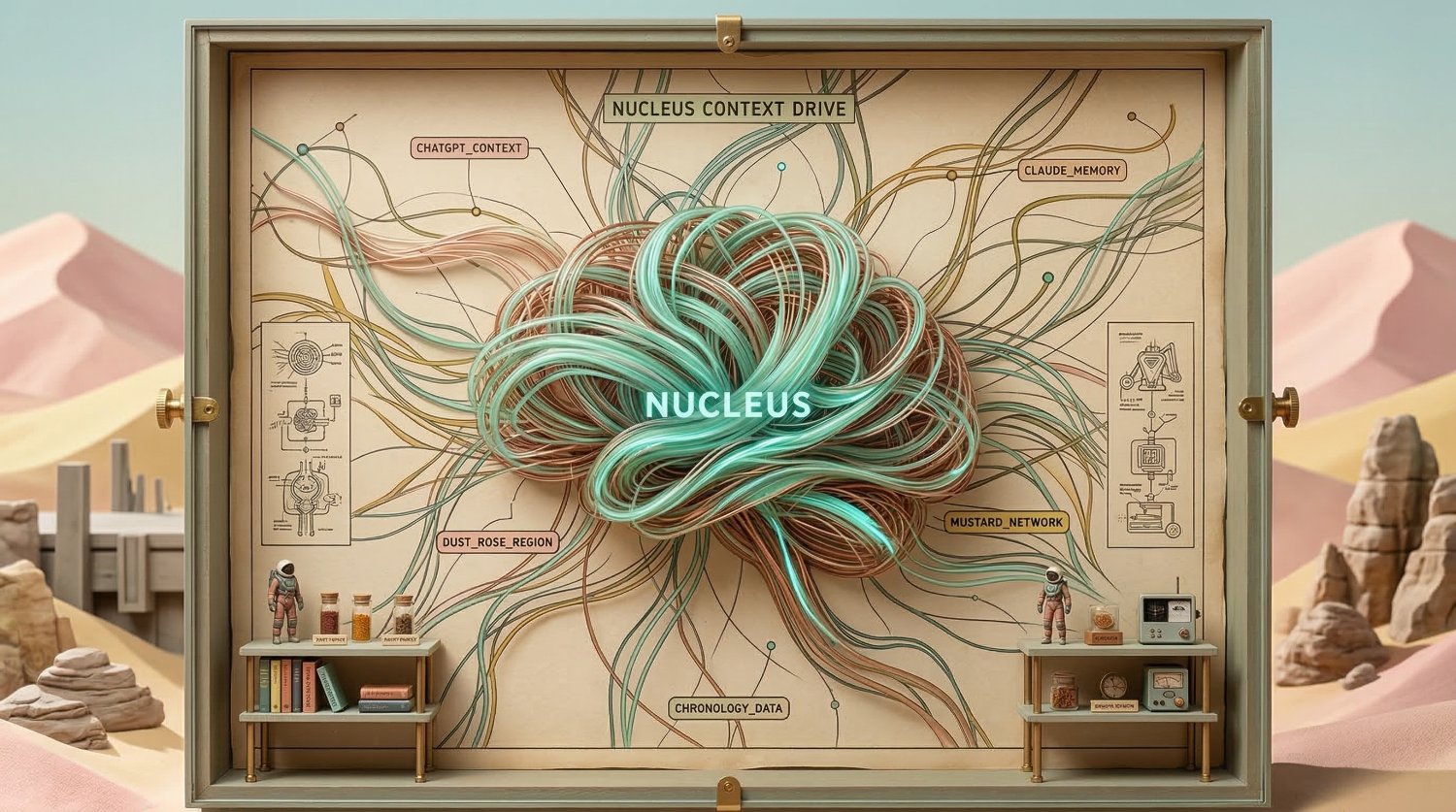

@chamath We are doing exactly that with Nucleus (@nucleusagi). Not just syncing your chats, but using our two filed patents on hierarchical memory systems.

This may be a dumb question but I’ll ask it here anyways: I can’t find a good way for my various AI chats to automatically sync their conversation history into a structured knowledge base, so that as I update various chats from time to time and refine context, my knowledge base automatically grows with this new info.

For $20/month and zero setup, you can now run parallel AI agents that deliver finished work while you sleep.

Perplexity shipped Computer. Back on Ramp's fastest-growing B2B software list. 19+ AI models. 400+ connectors. The reason isn't search anymore.

Every take I've seen focuses on the "AI assistant" framing. They're all underselling it. Computer doesn't give you suggestions. It delivers the finished thing. Research reports with source citations. Deployed dashboards with shareable links. Cleaned datasets with charts. Launch kits with positioning docs and email drafts.

Three things make it different from everything else out there. Cloud execution, so your laptop can be closed. Parallel agents, so five tasks run simultaneously. And persistent memory, so you stop re-explaining yourself every session.

I pointed it at Notion's product pages. 28 pages scored across 5 criteria, competitive benchmarks against Coda and Slite, with specific recommendations per page. That's a $15K messaging audit. Took about 20 minutes.

But credits disappear fast if you don't know how to prompt it. I burned hundreds learning this. Built a five-rule Prompt Spec that cuts cost by 60%+. I spent weeks testing it.

Today's guide has the six PM use cases, exact prompts, the credit-saving system, and an honest comparison against Claude Code, Cowork, and OpenClaw.

Full guide: news.aakashg.com/p/perplexity-c…

The Hermes Agent update you've been waiting for is here.