Cliff Oliech, PhD

7.2K posts

@olifo

Existing silently 🇰🇪

This AI text detector says Abraham Lincoln's Gettysburg Address was written by AI.

You're not depressed, you just lost your quest.

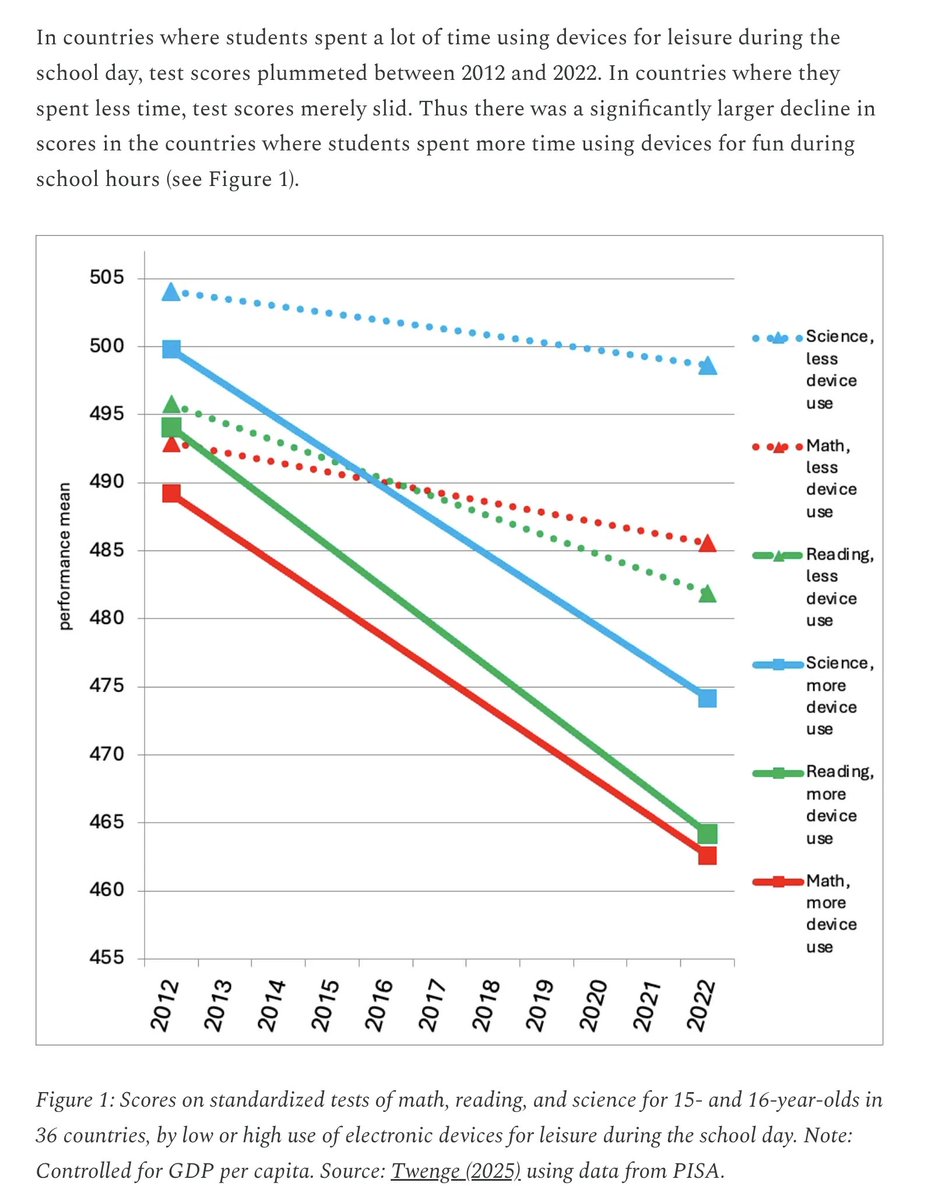

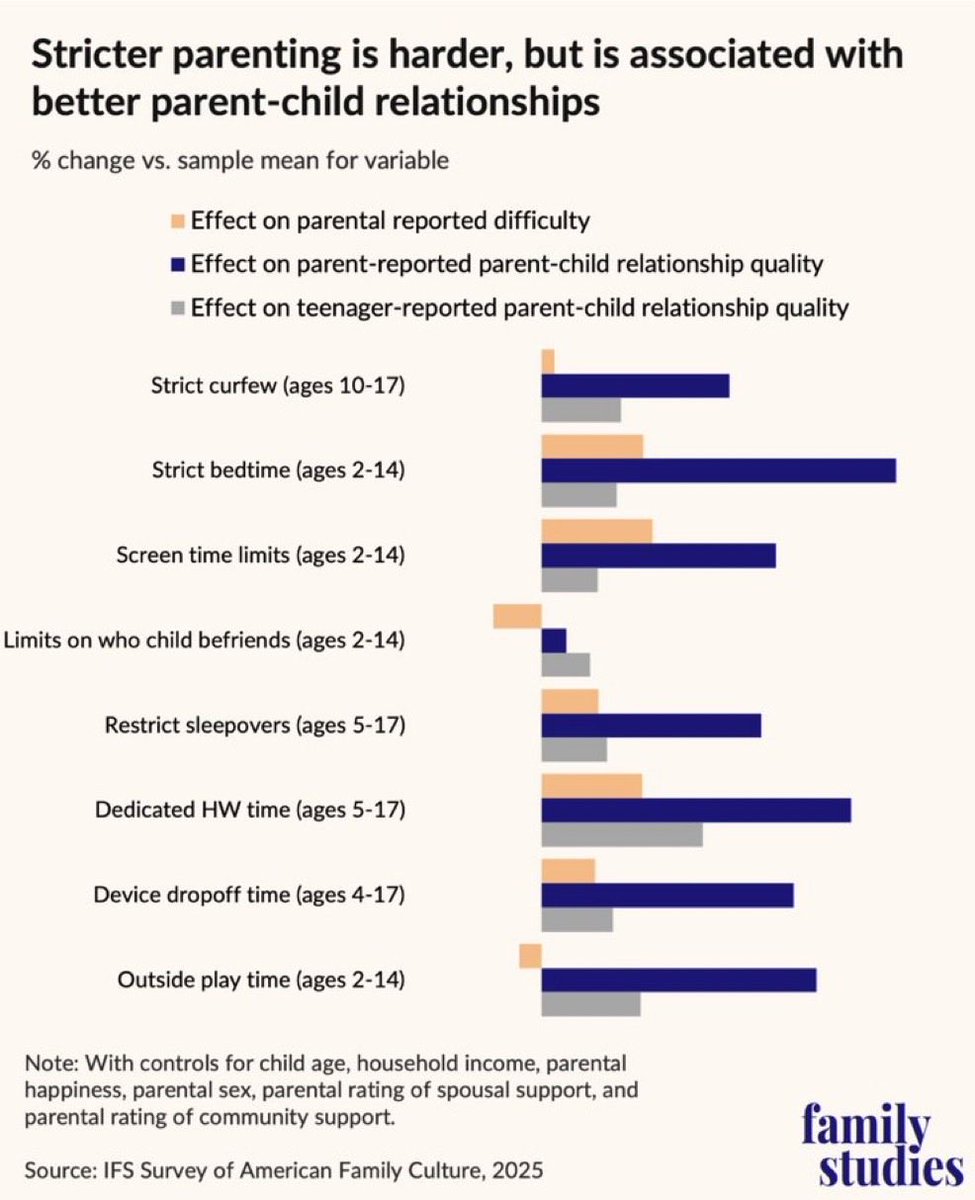

We now have evidence that gentle parenting doesn’t work. Here’s an uncomfortable truth about parenting no one wants to say out loud: The data is not kind to gentle parenting. According to teenagers, strict curfews, strict bedtimes, screen limits, device drop-off times, dedicated homework blocks, and sleepover restrictions IMPROVE relationship quality. And yes, parenting difficulty goes up. Of course it does. Leadership is harder than appeasement.

For the past decade we have been sold a watered-down, Instagram-friendly version of “gentle parenting” that often collapses into boundary avoidance, endless negotiation, and emotional processing without enforcement. Parents are terrified of saying no because they do not want to rupture connection. But connection without authority is not connection. It is dependency.

When parents impose structure, the relationship improves. Teenagers report better parent-child relationship quality in homes with curfews and rules. Younger kids report better relationships in homes with screen limits and bedtimes. Even device drop-off times correlate positively. Why? Because structure is not cruelty. Structure is love made visible. A bedtime says: your brain matters more than your entertainment. A screen limit says: your dopamine system is not fully developed and I will guard it until it is. A curfew says: your safety matters more than your social standing. That is not authoritarianism. That is caring.

Boundaries create friction. Friction creates growth. The parent absorbs the short-term discomfort so the child does not pay the long-term cost. Children do not experience well-calibrated limits as rejection. They experience them as stability. The human brain craves predictability. Predictability reduces anxiety. Reduced anxiety strengthens attachment. That is why relationship quality goes up.

Notice something else in the data. The strongest effects are around time structure. Bedtime. Homework. Devices. Outside play. These are environmental constraints. They scaffold executive function.

The winning formula is not tyranny. It is high warmth plus high structure. The modern failure mode is high warmth plus low structure. That is just abdication of responsibility wrapped in empathy. Children need leadership, not negotiation. They need adults who can tolerate their anger. They need boundaries that do not move every time emotions spike. They need someone whose prefrontal cortex is fully myelinated. The harder path produces the stronger bond. Because when a child feels that someone is strong enough to hold the line, they relax. And relaxed nervous systems build durable relationships.