omkaar

@omkizzy

https://t.co/rV8tAb3oHX, codice est debitum | ex @uwaterloo feedback: https://t.co/nx72nVrMzj

Day 73/365 of GPU Programming. Wanted to understand FP4 better and came across this great @Cohere_Labs talk on Training LLMs with MXFP4 and @juliarturc's amazing series on quantization. So fascinating learning what makes low precision work for LLM training and inference.
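For anyone following along, here is a rough sketch of the block format behind MXFP4. It assumes the OCP Microscaling layout: 32-element blocks that share one power-of-two scale, with each element stored as FP4 (E2M1). The function names are made up for illustration, rounding is plain nearest-value (ties go to the smaller magnitude), and nothing here is taken from the talk or the series themselves.

```python
import math

# Magnitudes representable by FP4 E2M1 (sign is stored separately).
FP4_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
BLOCK_SIZE = 32      # elements sharing one scale in the MX formats
ELEM_EMAX = 2        # largest E2M1 exponent: 6.0 = 1.5 * 2**2


def quantize_block(block):
    """Return (shared_exponent, fp4_values) for one block (hypothetical helper)."""
    amax = max(abs(x) for x in block)
    if amax == 0.0:
        return 0, [0.0] * len(block)
    # Shared power-of-two scale chosen from the largest magnitude in the block.
    shared_exp = math.floor(math.log2(amax)) - ELEM_EMAX
    scale = 2.0 ** shared_exp
    quantized = []
    for x in block:
        mag = min(abs(x) / scale, FP4_GRID[-1])          # clamp to +/-6
        nearest = min(FP4_GRID, key=lambda g: abs(g - mag))
        quantized.append(math.copysign(nearest, x))
    return shared_exp, quantized


def dequantize_block(shared_exp, quantized):
    """Rebuild approximate values from the shared exponent and FP4 elements."""
    scale = 2.0 ** shared_exp
    return [v * scale for v in quantized]


if __name__ == "__main__":
    block = [0.013 * i - 0.2 for i in range(BLOCK_SIZE)]
    exp, q = quantize_block(block)
    restored = dequantize_block(exp, q)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("shared exponent:", exp, "max abs error:", worst)
```

One large value in a block pushes the shared scale up for all 32 elements, so small neighbors lose resolution or round to zero.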

25-30% of his portfolio lose a co-founder before Series A.
Solo Founders Podcast ep 3 is live. @chudson of @PrecursorVC has invested in 500+ companies as a solo GP. He's our first VC guest.
His take: the co-founder consensus is broken, and you should never give away 40% of your company just to make fundraising easier.
00:59 - Why the co-founder consensus is wrong
02:57 - The 500+ company data set
03:37 - Dead equity and cap table damage
07:21 - Rivalry and resentment
09:29 - The "team sport" analogy deconstructed
11:47 - Talented solo founder vs. mismatched team
13:37 - The emotional journey of solo founding
15:28 - Solo founder advantages
21:07 - Don't give away 40% to fundraise easier
23:25 - Authorship
27:03 - Fundraising advice for solo founders
28:28 - Don't apologize for being solo
36:45 - The solo GP / solo founder kinship
41:06 - Bear case for solo founding
43:22 - Bull case for solo founding

Running into @steipete feels like meeting our generation’s Steve Jobs. A new dawn of computing @openclaw @NVIDIAGTC

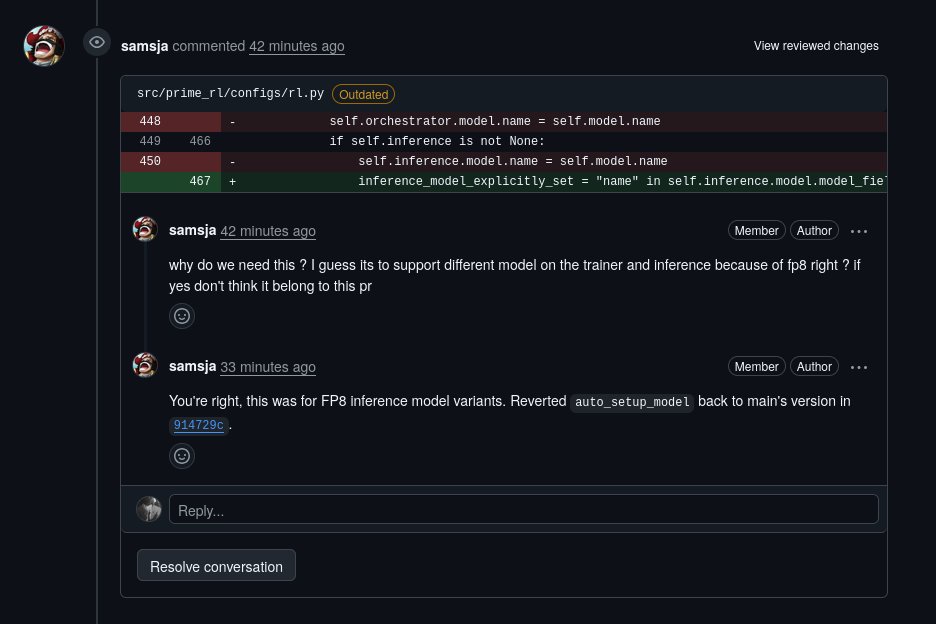

The long-awaited testing phase for @Midjourney V8 has officially begun, marking a massive leap forward for the generative art platform. This latest iteration promises a significant boost in efficiency, operating at five times the speed of its predecessors while maintaining a much tighter grip on complex prompt instructions. High-resolution creators will find the native 2K modes particularly useful for professional workflows. The update also brings more reliable text rendering and enhanced "sref" styling, allowing for a level of aesthetic consistency that was previously difficult to achieve. Personalization is a major focus of this release, with improved moodboard performance to help users fine-tune their unique visual language. It is an impressive step toward making AI-assisted design both faster and more intuitive.

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation.
Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.
🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.
🔗 Full report: github.com/MoonshotAI/Att…
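To make the contrast with a plain residual stream concrete, here is a toy sketch of attention over preceding layer outputs. It is only a guess at the general shape of the idea from the description above, not the formulation in the report: the per-layer `query` vector, the use of raw layer outputs as both keys and values, and the function names are all assumptions, and Block AttnRes is not modeled.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def standard_residual(layer_outputs):
    """Fixed, uniform accumulation: every earlier output is summed with weight 1."""
    dim = len(layer_outputs[0])
    return [sum(h[i] for h in layer_outputs) for i in range(dim)]


def attention_residual(layer_outputs, query):
    """Mix earlier layer outputs with softmax weights instead of a plain sum.

    `query` stands in for a learned per-layer vector (an assumption). The
    weights depend on the layer outputs themselves, so the mix is
    input-dependent.
    """
    dim = len(query)
    scores = [dot(query, h) / math.sqrt(dim) for h in layer_outputs]
    weights = softmax(scores)
    mixed = [sum(w * h[i] for w, h in zip(weights, layer_outputs))
             for i in range(dim)]
    return mixed, weights


if __name__ == "__main__":
    outputs = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]   # one vector per earlier layer
    mixed, weights = attention_residual(outputs, query=[2.0, -1.0])
    print("weights:", weights)
    print("attention mix:", mixed)
    print("plain sum:", standard_residual(outputs))
```

Because the weights sum to one, the mixed state stays on the scale of a single layer output, whereas the plain sum grows with depth.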

Ten years ago I was building factories. Today I'm building the tools I wish I had inside them. @TenkaraAI raised $7M led by @trueventures.

does anyone have any tips on how to prompt/plan when trying to oneshot large projects, like 50K+ LOC?

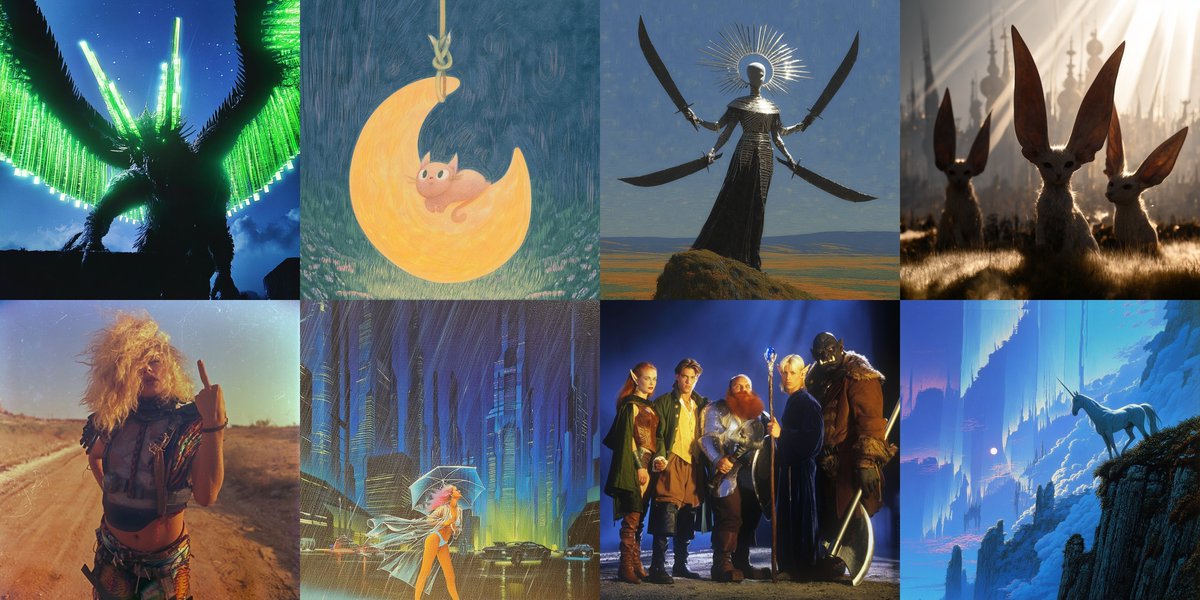

I hand-wrote a 500-LoC RL stack to make hacking on RL research much easier. Most RL stacks are either massive and unhackable, or duct-taped research scripts. I am open-sourcing Mithrl, a modular RLVR stack. Next items on my checklist: adding more complex environment examples, supporting multi-GPU + async RL, and QoL fixes. I might scrap external runtime dependencies (Hugging Face PEFT + vLLM) and write purpose-built, simpler versions from scratch if I feel the need. If you want to experiment with RL and are looking to own sovereign tools, I’d love to get on a call, understand your requirements, and help integrate for free.