Ruixiang Zhang

99 posts

@onloglogn

Research @Apple MLR, PhD @Mila_Quebec. Prev @GoogleDeepMind @preferred_jp

Apple Research just published something really interesting about post-training of coding models. You don't need a better teacher. You don't need a verifier. You don't need RL. A model can just… train on its own outputs. And get dramatically better. Simple Self-Distillation (SSD): sample solutions from your model, don't filter them for correctness at all, fine-tune on the raw outputs. That's it. Qwen3-30B-Instruct: 42.4% → 55.3% pass@1 on LiveCodeBench. +30% relative. On hard problems specifically, pass@5 goes from 31.1% → 54.1%. Works across Qwen and Llama, at 4B, 8B, and 30B. One sample per prompt is enough. No execution environment. No reward model. No labels. SSD sidesteps this by reshaping distributions in a context-dependent way — suppressing distractors at locks while keeping diversity alive at forks. The capability was already in the model. Fixed decoding just couldn't access it. The implication: a lot of coding models are underperforming their own weights. Post-training on self-generated data isn't just a cheap trick — it's recovering latent capacity that greedy decoding leaves on the table. paper: arxiv.org/abs/2604.01193 code: github.com/apple/ml-ssd

1/6 The "Self-Improvement" Paradox Can an LLM get smarter using only its own raw, unverified outputs? No verifiers. No teachers. No RL. We found the answer is an emphatic YES. Introducing SimpleSD: Embarrassingly Simple Self-Distillation. By simply sampling solutions from a model with specific temperature and truncation settings and then fine-tuning the model on those exact samples, Qwen3-30B jumped from 42.4% to 55.3% (a 30% relative improvement) on LiveCodeBench v6! 🚀 The gain holds across model sizes (4B, 8B, 30B) and model families (Llama, Qwen). The harder the problem, the larger the gain. 📈 Kudos to my amazing colleagues @onloglogn, @richard_baihe, @UnderGroundJeg, Navdeep Jaitly, @trebolloc. Check out the paper and code below! 👇 paper: arxiv.org/abs/2604.01193 code: github.com/apple/ml-ssd HF models: huggingface.co/collections/ap…
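The recipe in the thread (sample with temperature and truncation, keep every sample, fine-tune on them) can be sketched roughly as below. This is an illustrative toy, not the released code: the function names, the use of top-p as the truncation rule, and every numeric setting are assumptions, since the posts do not state the actual values.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_truncate(probs, top_p):
    """Keep the smallest set of most likely tokens whose mass reaches top_p,
    zero out the rest, and renormalize. (Top-p is an assumed truncation rule.)"""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    out = [0.0] * len(probs)
    for i in kept:
        out[i] = probs[i] / mass
    return out


def sample_token(logits, temperature, top_p, rng=random):
    """Draw one token index from the reshaped distribution."""
    probs = top_p_truncate(softmax(logits, temperature), top_p)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def build_ssd_dataset(prompts, sample_fn):
    """One raw sample per prompt. No correctness filter is applied;
    sample_fn is a hypothetical stand-in for generating from the model."""
    return [{"prompt": p, "completion": sample_fn(p)} for p in prompts]
```

The resulting prompt/completion pairs would then go through ordinary supervised fine-tuning of the same model that produced them; that step is omitted here because it depends on the training stack.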

@elonmusk Thinking in language has limited applications, largely in coding and mathematics where the language itself can help reasoning. But, as I've been saying for years, thinking manipulates mental models in abstract (continuous) representation space. Soooo, xAI gonna use JEPA now?

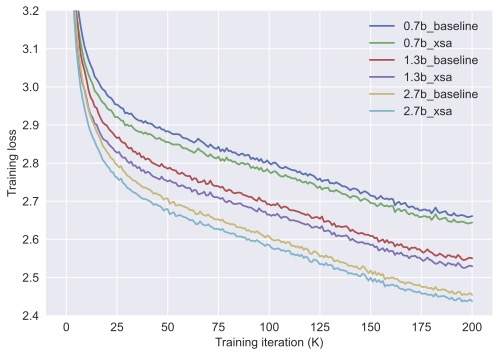

I explored the same thing 2-3 years back and got some positive results, but wasn’t convinced it was worth the overhead/complexity, so I quickly put it on the shelf (maybe I should have written something about it anyway). One thing the Kimi paper didn’t highlight but I think is worth mentioning: the idea of treating network depth as sequence modeling is exactly what gave rise to the Highway Network, which was all about applying an LSTM along depth. In this sense, taking one step further and replacing the LSTM with attention should come very naturally. The only caveat here is that attention in the standard seq modeling case is fully parallelized, which makes it extremely efficient at training time; applying it along depth unfortunately loses this benefit, and computational overhead could become a real concern (but it appears not as bad as I originally thought, based on the new paper’s large-scale results).
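A toy sketch of what "attention along depth" could look like, assuming each layer's input is a softmax-weighted mix of all earlier layer outputs, scored against the most recent one. The names `depth_attention` and `forward` are hypothetical, and this is not claimed to match the Kimi paper's formulation. It mainly illustrates the caveat above: the loop over layers is inherently sequential.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def depth_attention(query, history):
    """Mix earlier layer outputs with softmax weights from scaled dot products."""
    scale = math.sqrt(len(query))
    scores = [dot(query, h) / scale for h in history]
    m = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    mixed = [sum(w * h[d] for w, h in zip(weights, history))
             for d in range(len(query))]
    return mixed, weights


def forward(x, layers):
    """Run layers in order; each layer reads an attention mix over all
    earlier outputs, so layer l cannot start before layers 0..l-1 finish."""
    history = [x]
    for layer in layers:
        mixed, _ = depth_attention(history[-1], history)
        history.append(layer(mixed))
    return history[-1]
```

In standard sequence attention all positions are available up front during training, so the scores can be computed in one batched pass. Here `history` grows one entry per layer, which is where the extra cost comes from.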

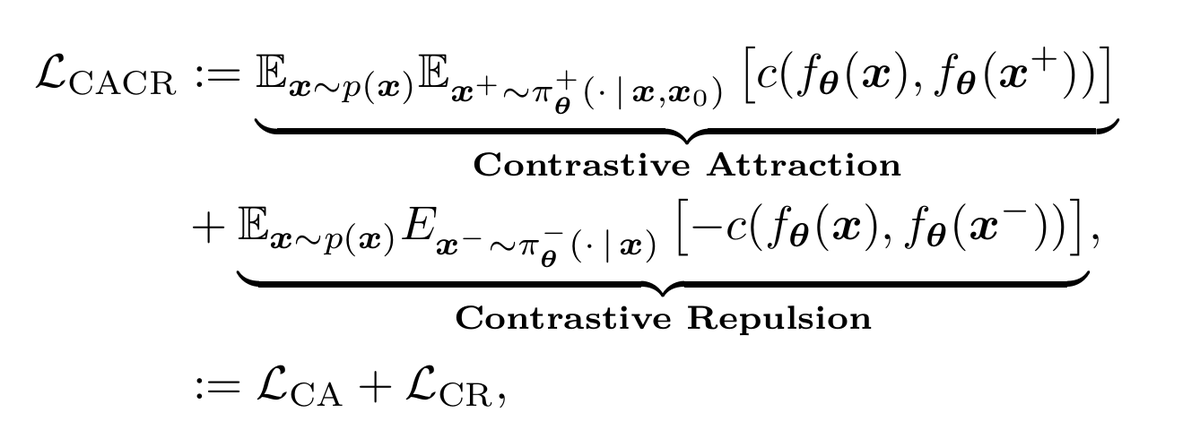

Thanks for pointing out the similarity between drifting and Implicit Maximum Likelihood Estimation! I worked out the mathematical connection: the crux is that drifting fields are similar to the gradient of a soft version of the IMLE loss. So drifting is defined in terms of the gradient, whereas IMLE is defined in terms of the objective, but the behaviour should be similar. It's reminiscent of the Newtonian (force-based) vs. Lagrangian (objective-based) formulations of classical mechanics. One difference is that in drifting the weights on the positive samples and the negative samples are different, whereas they are the same in IMLE. It'd be interesting to see if the negative weights can be replaced with positive weights.

1/9 Softmax is the enemy of diversity in reward-maximization RL like GRPO. 📉 Recent analysis reveals: As RL boosts a "correct" token, Softmax automatically suppresses all others to maximize reward. This mechanism aggressively drives down entropy. This is Mode Elicitation: trading creativity for a local optimum. To fix this, we need to escape the discrete space. 🧵👇
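The coupling described here can be checked on a toy example. The sketch below uses made-up logits and a made-up boost of +1.0 on an already-likely token; it only illustrates the softmax normalization effect and is not a GRPO update.

```python
import math


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy in nats."""
    return -sum(p * math.log(p) for p in probs if p > 0)


# Hypothetical logits over four candidate tokens; token 0 is the "correct" one.
logits = [2.0, 1.0, 0.5, 0.0]
before = softmax(logits)

# Raise only the logit of token 0, leaving the other logits untouched.
boosted = list(logits)
boosted[0] += 1.0
after = softmax(boosted)

# Every other token loses probability even though its logit did not change,
# and the entropy of the distribution drops.
others_suppressed = all(after[i] < before[i] for i in range(1, len(logits)))
entropy_dropped = entropy(after) < entropy(before)
```

Because the shared denominator grows when one logit is raised, all remaining tokens are pushed down together. Entropy falls here because the boosted token was already the most likely one.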

New preprint & open-source! 🚨 “SimpleFold: Folding Proteins is Simpler than You Think” (arxiv.org/abs/2509.18480). We ask: Do protein folding models really need expensive and domain-specific modules like pair representation? We build SimpleFold, a scalable 3B folding model built solely on general-purpose transformers + flow matching and trained on 9M structures. SimpleFold supports easy deployment and efficient inference on consumer-level hardware with PyTorch/MLX (try it on your MacBook!) (1/n)

STARFlow gets an upgrade: it now works on videos🎥 We present STARFlow-V: End-to-End Video Generative Modeling with Normalizing Flows, an invertible, causal video generator built on autoregressive flows! 📄 Paper huggingface.co/papers/2511.20… 💻 Code github.com/apple/ml-starf… (1/10)
