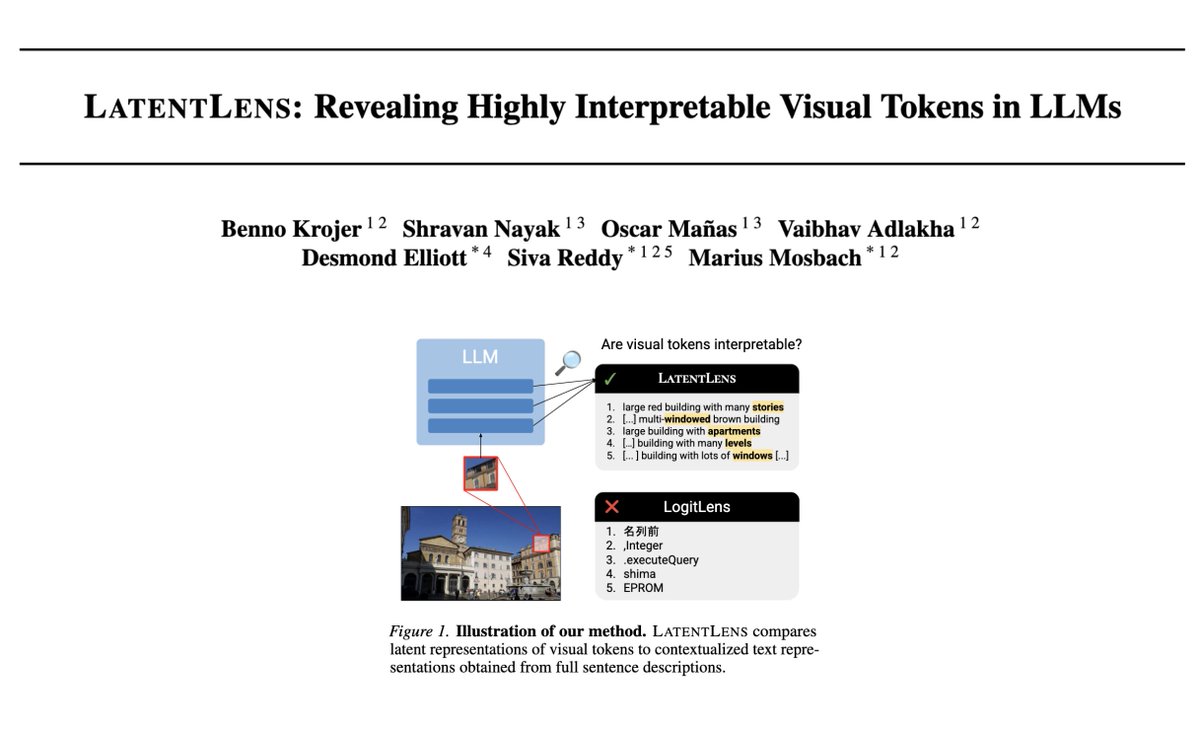

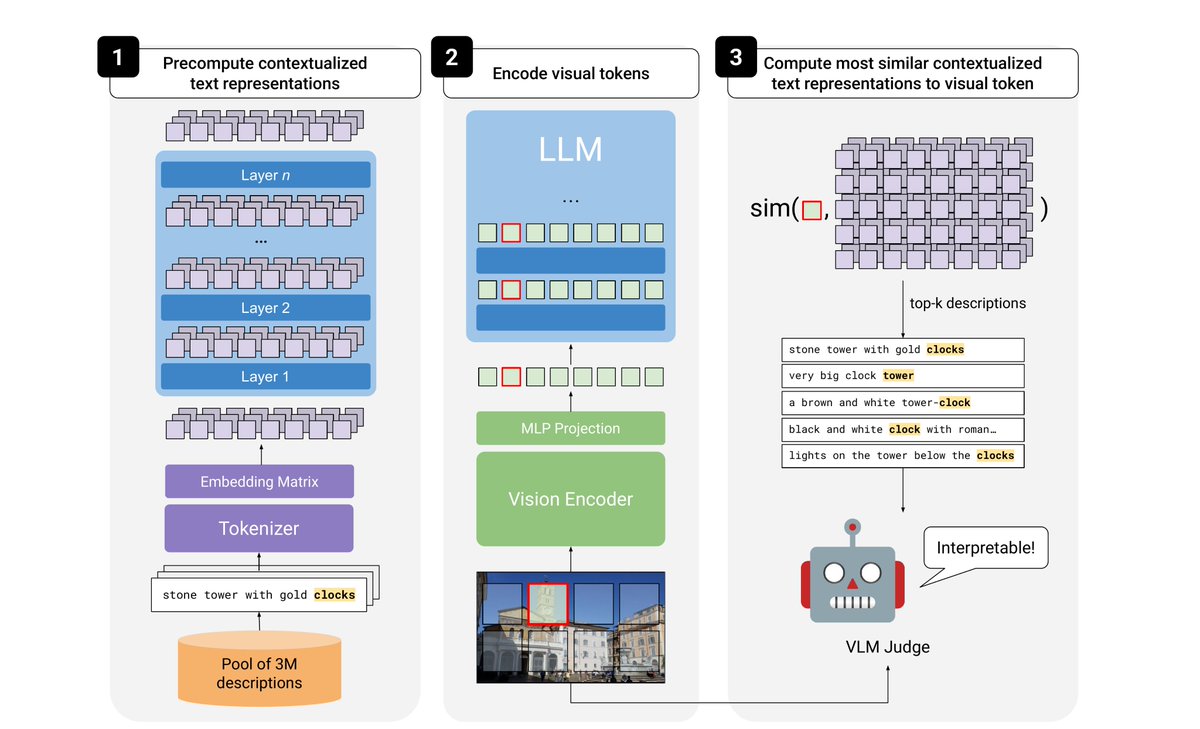

Oscar Mañas

@oscmansan

Research scientist at @AIatMeta, PhD from @Mila_Quebec @UMontrealDIRO. Working on multimodal vision+language generation. A Catalan in Zurich.

I’d like to hire strong data engineers to join our Microsoft Super Intelligence (MSI) team. I am interested in people who are good at processing PDFs and other documents at billion scale, and people good at parsing the web at trillion scale. If you dream of processing all of human knowledge to advance science and engineering, this is for you. Also looking for strong evaluation and post-training engineers. Be part of our first launches this year 🚀 We have all the resources in the world to support you, working in startup mode, while powering a large organisation with billions of users. Hiring in London, Zurich, New York, Boston, Toronto, Seattle and SF. Please send your CV to JoinAITeam@microsoft.com

great feeling to wake up in the morning and see how much progress codex made overnight

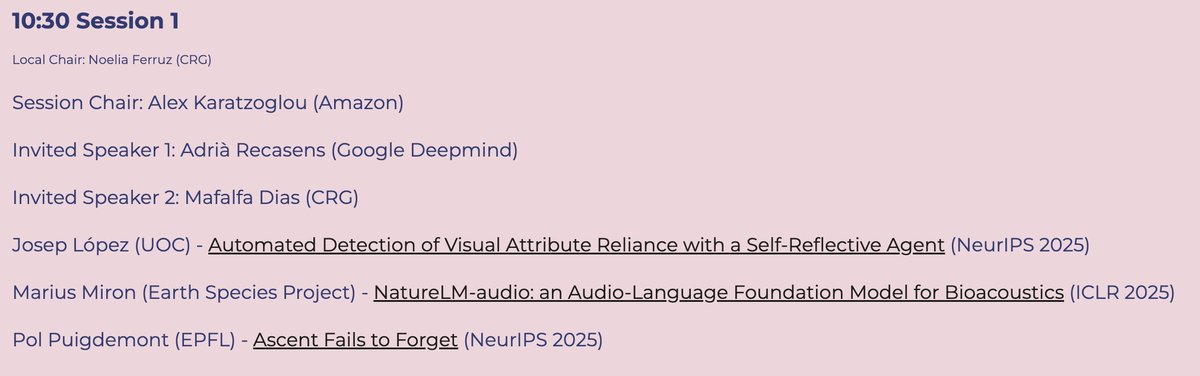

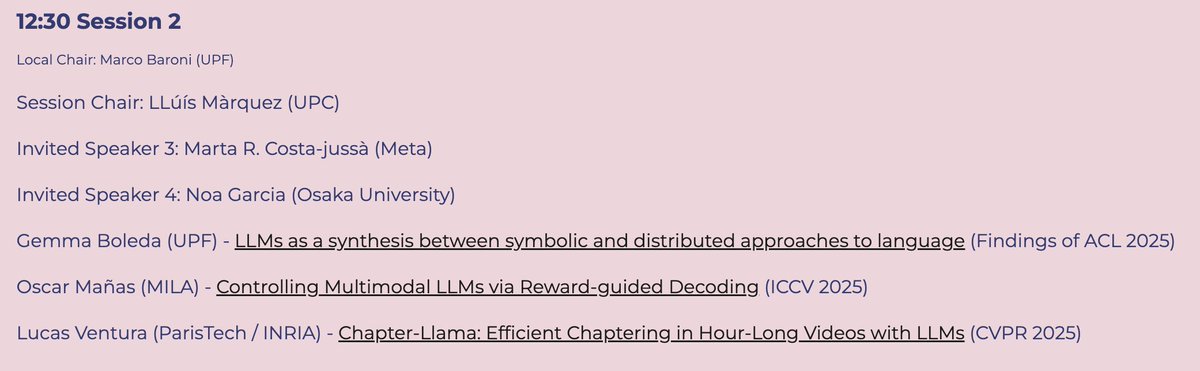

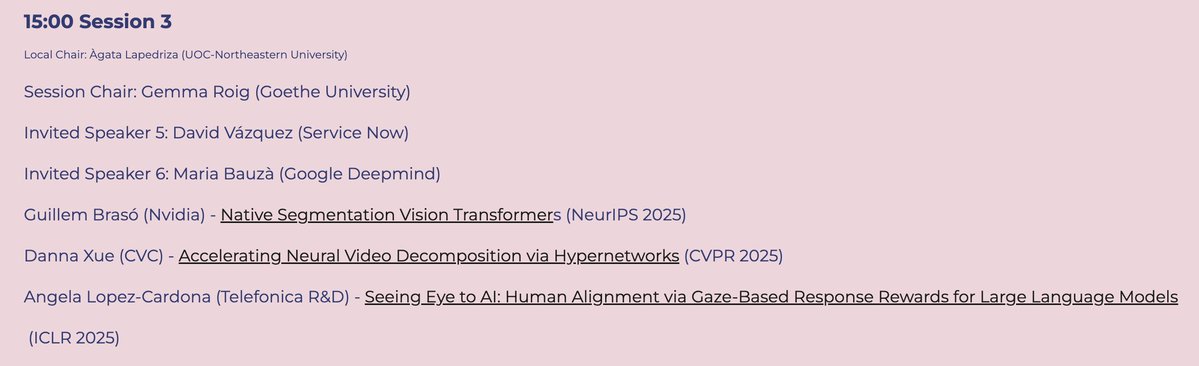

Happening in ~2 hours! Come say hi :)

🌺 Attending @ICCVConference in Honolulu this week! I'll be presenting our work on multimodal reward-guided decoding. Come check it out on October 21 (morning), poster #122. If you’re around, I’d love to connect and chat about multimodal models and real-time video generation!

I’m happy to share that our paper "Controlling Multimodal LLMs via Reward-guided Decoding" has been accepted to #ICCV2025! 🎉 w/ @proceduralia, @koustuvsinha, @adri_romsor, @michal_drozdzal, and @aagrawalAA 🔗 Read more: arxiv.org/abs/2508.11616 🧵 Here's what we did: