Pinned Tweet

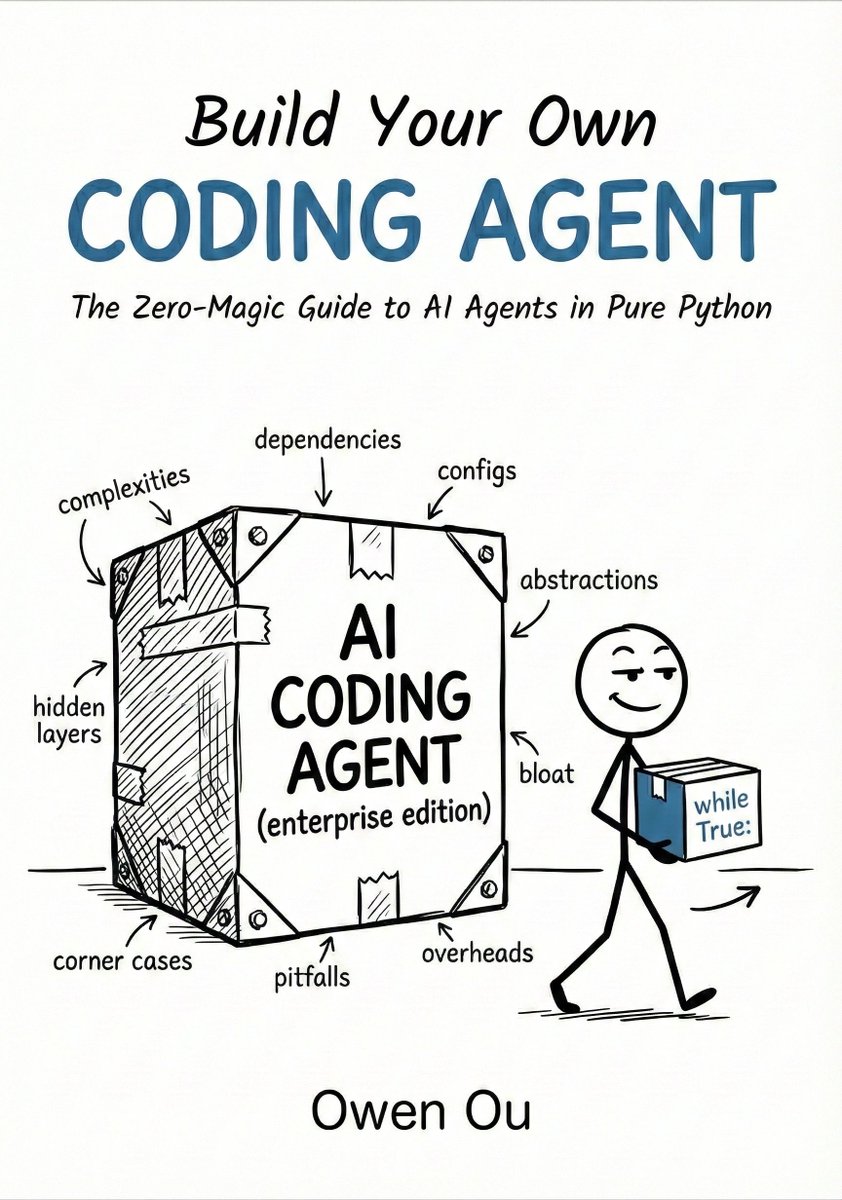

Tired of AI "magic"? I wrote a book on building a production-grade coding agent from scratch in pure Python.

🚫 No LangChain 🚫 No black boxes ✅ Just the brain, tools, & the loop.

If you can debug with print(), you can build this.

📖 buildyourowncodingagent.com
