Paradatum

64 posts

@paradatum

You can’t build complex AI systems on shifting sand. We are fanatically focused on AI inference that is deterministic, lossless, high-speed, and audit ready.

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

AMIT (@amitisinvesting): JUST GOT TO ASK NVIDIA CEO JENSEN HUANG ANOTHER QUESTION

Announcing NVIDIA DLSS 5, an AI-powered breakthrough in visual fidelity for games, coming this fall. DLSS 5 infuses pixels with photorealistic lighting and materials, bridging the gap between rendering and reality. Learn More → nvidia.com/en-us/geforce/…

NVIDIA just dropped a banger paper on how they compressed a model from 16-bit to 4-bit and were able to maintain 99.4% accuracy, which is basically lossless. This is a must read. Link below.

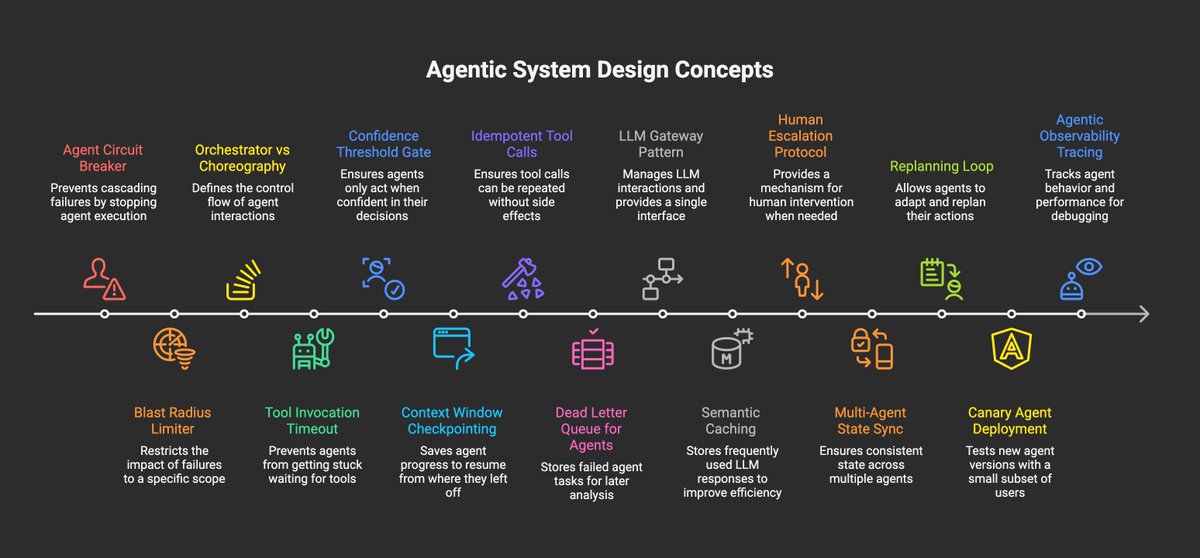

This is the approach we recently took with Fabro (github.com/fabro-sh/fabro). We wrap non-deterministic agents with:

1. Deterministic workflow graphs
2. Deterministic command steps (e.g. running unit tests)
3. Human checkpoints

This enables much more autonomy than politely asking the agent to do things and letting it decide when it is “done”.