Pinned Tweet

Paras

785 posts

@parasdevlife013

Building @Neatlogs | Write. Break. Debug. Repeat.

India · Joined September 2023

121 Following · 40 Followers

Love design that puts feel first? Want to solve a real problem with an amazing team? Then come build with us.

neatlogs @neatlogs

we’re hiring a UI/UX designer (remote), someone who is:
• design + feel first
• strong craft, ships fast
• solid with tools (figma/framer)
• uses AI in their workflow
bonus:
• experience designing devtools products
drop your best work or DM @BetterSayAJ your portfolio

Paras retweeted

@RoundtableSpace I don't think DeepSeek V3.2 Speciale matches the Gemini 3.1 Pro level.

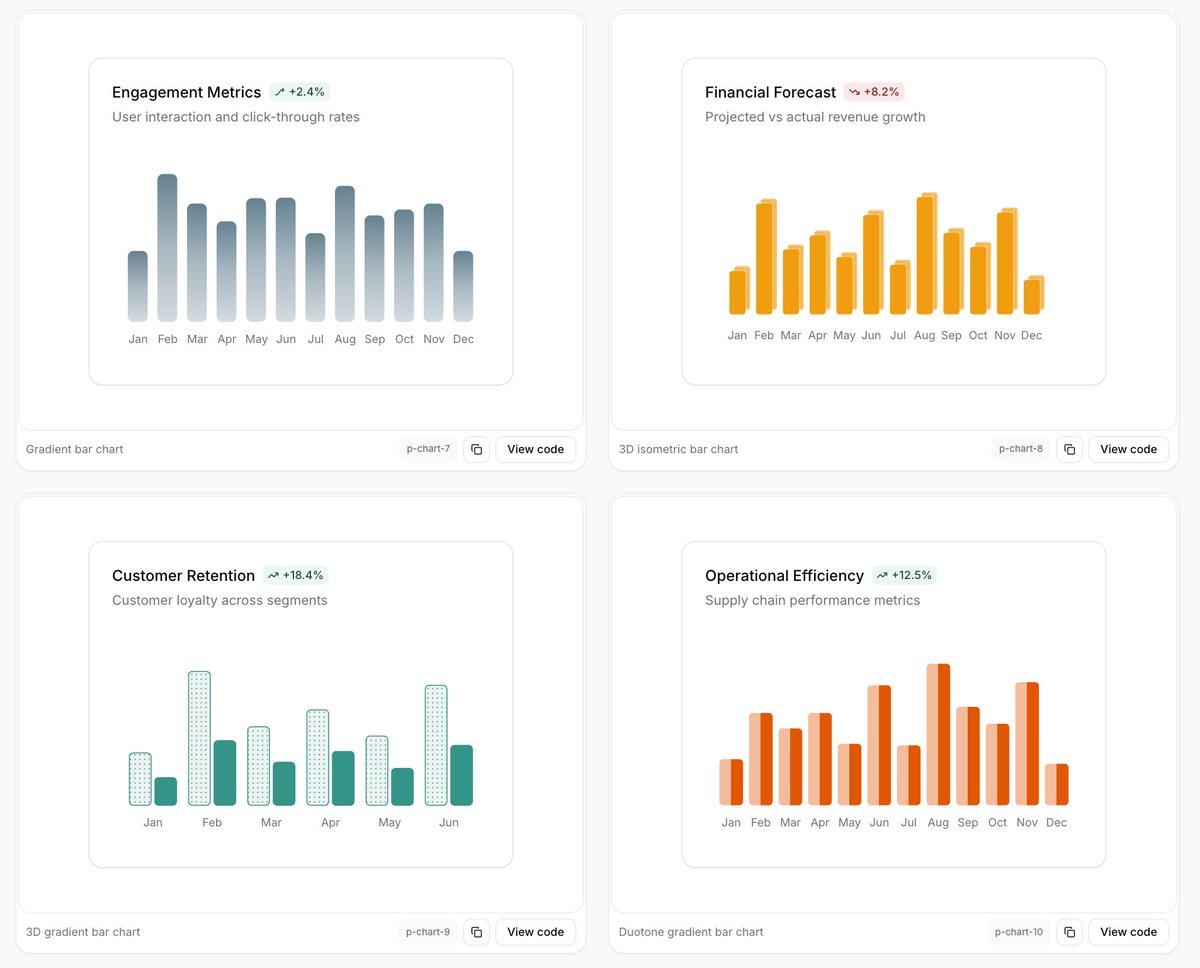

The @shadcn ecosystem just got stronger ⚔️

New open source library: shadcnspace.com

Really impressive blocks & templates.

Definitely trying this out in a real project soon.

We just released react-best-practices, a repo for coding agents.

React performance rules and evals to catch regressions, like accidental waterfalls and growing client bundles.

How we collected them and how to install the skill ↓

vercel.com/blog/introduci…
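
For readers unfamiliar with the term, an "accidental waterfall" usually means independent async requests that end up running one after another because each is awaited before the next starts. The sketch below is a generic illustration, not a rule taken from the react-best-practices repo; fetchUser, fetchPosts and delay are hypothetical stand-ins for real network calls, each simulated as a 100 ms wait.

```typescript
// Hypothetical helper: resolves after `ms` milliseconds to simulate latency.
const delay = (ms: number) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Hypothetical data loaders standing in for real network requests.
async function fetchUser(): Promise<string> {
  await delay(100);
  return "user";
}

async function fetchPosts(): Promise<string[]> {
  await delay(100);
  return ["post-1", "post-2"];
}

// Waterfall: fetchPosts() does not start until fetchUser() resolves,
// so total latency is roughly the sum of both (~200 ms here).
async function loadSequential() {
  const user = await fetchUser();
  const posts = await fetchPosts();
  return { user, posts };
}

// Parallel: both requests start immediately and Promise.all waits for both,
// so total latency is roughly the slowest single request (~100 ms here).
async function loadParallel() {
  const [user, posts] = await Promise.all([fetchUser(), fetchPosts()]);
  return { user, posts };
}
```

Both functions return the same data; only the parallel version avoids paying each request's latency in sequence. This fix only applies when the second request does not depend on the first one's result.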

I literally laugh when I see people comparing TanStack Start and Next.js as if one is automatically better. Most devs follow hype; most humans follow hype.

Disclaimer: I love both @tan_stack Start and @nextjs and use them daily, but comparing them superficially is nonsense. Here I want to talk about both sides of the coin for both frameworks.

Let me make a hardcore, unfiltered rant about this. First, people compare them on:

Bundle size. People always start here. Stop. That is surface-level nonsense. TanStack Start is smaller gzipped (100–120 kB); Next.js is bigger (150–176 kB). But smaller doesn’t automatically mean faster.

If your SSR isn’t optimized, your tiny bundle doesn’t help at all. Next.js ships more JS because it runs the RSC runtime, streaming, and hydration logic. That’s intentional, not bloat.

Comparing bundles here is like comparing a bicycle to a freight truck. One is small, one actually carries the load.

A smaller bundle in Start ≠ faster first paint if SSR isn’t optimized.

export default function Page() {
  return (
    <>

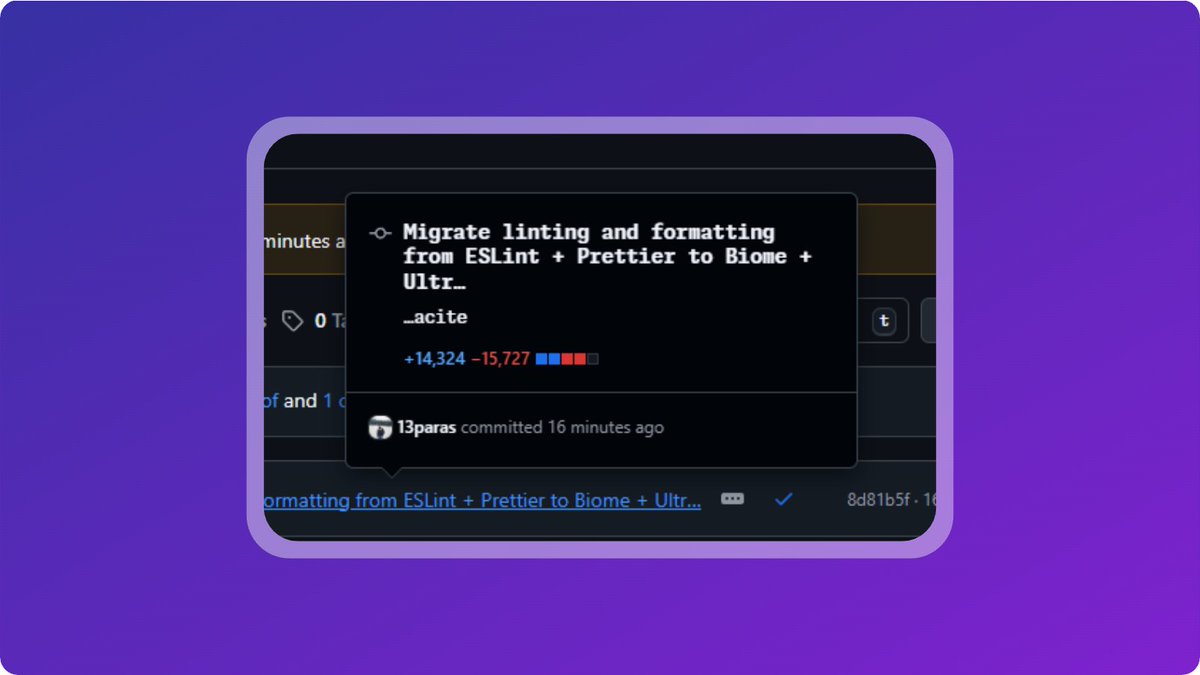

Just switched @neatlogs linting & formatting from ESLint + Prettier to @biomejs + Ultracite V7 🚀

14k+ lines of code changed, but definitely worth it.

Shoutout to @haydenbleasel for building Ultracite. Setup was quick.

@breathMessi21 @arpit_bhayani It's good for daily use, and I don't think he's someone who is always on his phone or plays games on it.

So the Pixel fits his priorities.

@arpit_bhayani Mid-range performance for a one-lakh-plus phone 😂😂

I think going into 2026, as a developer, you'll have to accept that AI is "a thing" and won't go away.

Take advantage of it or ignore it (if you can) but don't hope for it to disappear.

I think there's a bubble that will burst at some point, but that does not imply that the technology will disappear.

It does have its use and it does change things.

But just to be clear: AI is NOT the thing that will make you as a developer obsolete. Vibe coding is not the future for devs. It may be good for one-off, throwaway software, but that's it.

Instead, I'm convinced that using and controlling AI assistants is the future, just as we used auto-completion before AI.

Obviously, the amount of usage will depend on the problem you're tackling, and it's easy to rely on AI too much.

I've said it before: Don't limit your skill level to that of the AI you're using. Instead, combine your skill & knowledge with AI assistants.

At least, that does work for me.
