These two photographs are separated by only 66 years.

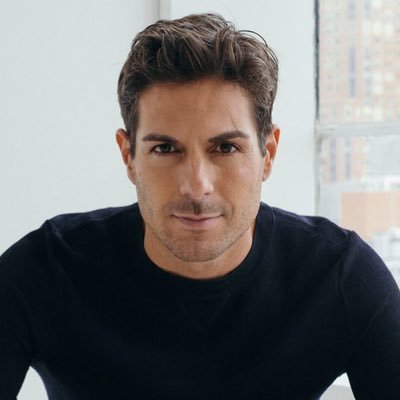

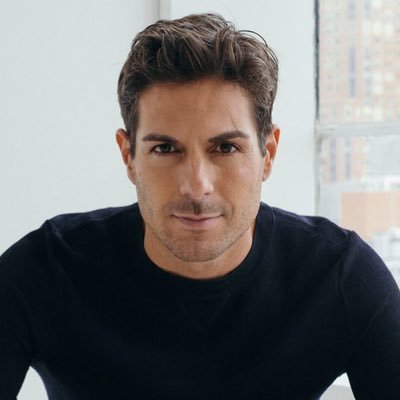

Paul Ashbourne

@paulashbourne

agents + rl infra @openai | 💬 Opinions are my own | Made in Canada 🇨🇦

OPEN AI EXEC ⬇️⬇️⬇️

1/N I’m excited to share that our latest @OpenAI experimental reasoning LLM has achieved a longstanding grand challenge in AI: gold medal-level performance on the world’s most prestigious math competition—the International Math Olympiad (IMO).

AI privacy is critically important as users rely on AI more and more. the new york times claims to care about tech companies protecting users’ privacy and their reporters are committed to protecting their sources. but they continue to ask a court to make us retain chatgpt users' conversations when a user doesn't want us to. this is not just unconscionable, but also overreaching and unnecessary to the case. we’ll continue to fight vigorously in court today. i believe there should be some version of "AI privilege" to protect conversations with AI.

We’re rolling out a few updates to Codex today: 1. Codex is rolling out to ChatGPT Plus users today. It includes generous usage limits for a limited time, but during periods of high demand, we might set rate limits for Plus users so that Codex remains widely available.