Pablo Contreras Kallens

@pcontrerask

Ph.D. candidate in Cornell Psychology, in the Cognitive Science of Language lab. Serious account.

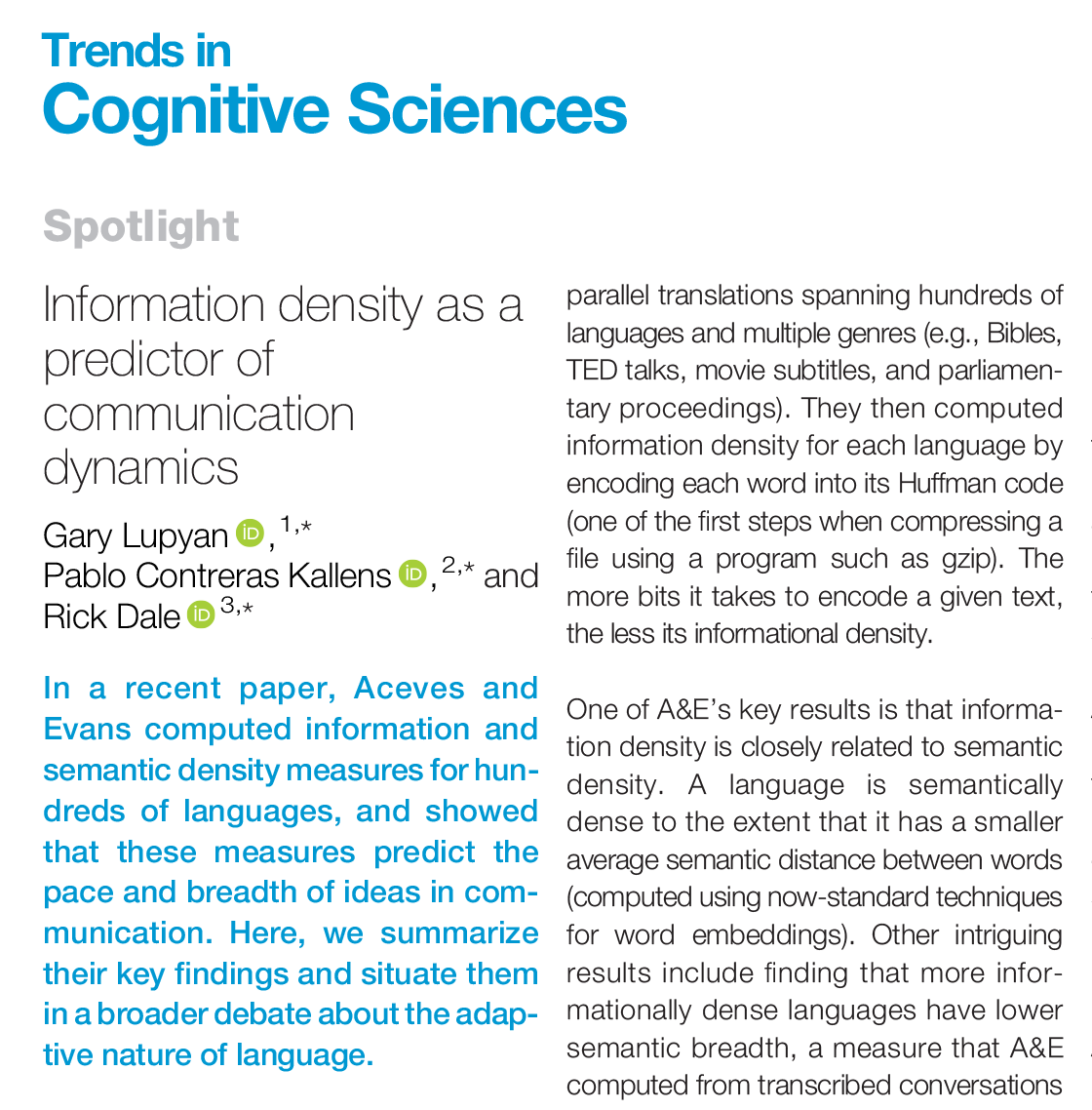

1/ Can Large Language Models (LLMs) truly reason? Or are they just sophisticated pattern matchers? In our latest preprint, we explore this key question through a large-scale study of both open-source models, like Llama, Phi, Gemma, and Mistral, and leading closed models, including the recent OpenAI GPT-4o and o1-series. arxiv.org/pdf/2410.05229 Work done with @i_mirzadeh, @KeivanAlizadeh2, Hooman Shahrokhi, Samy Bengio, @OncelTuzel. #LLM #Reasoning #Mathematics #AGI #Research #Apple

Huge congratulations 🥳 to 👉 Dr. 👈 @pcontrerask, who just passed his PhD defense with flying colors! 👏 He defended his dissertation in @CornellPsychDpt: "THE COMPUTATIONAL BRIDGE: INTERFACING THEORY AND DATA IN COGNITIVE SCIENCE". Follow @pcontrerask to see the papers

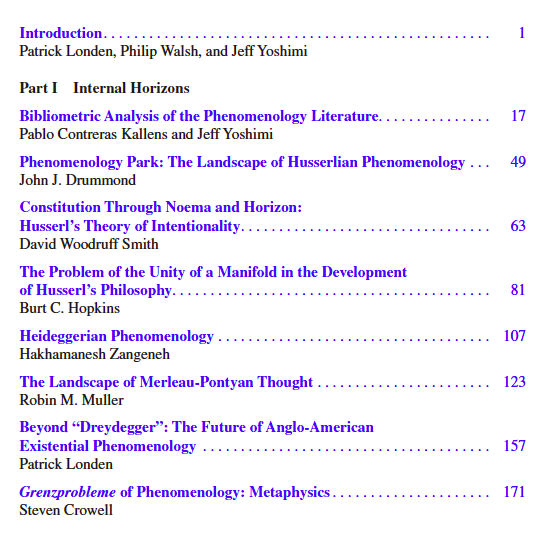

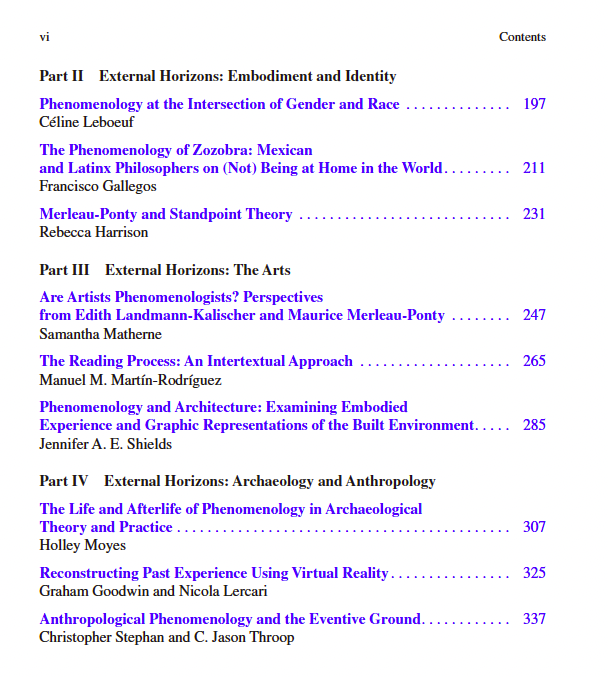

I'm delighted to announce the publication of our free, open access book, "Horizons of Phenomenology", a collection of essays on the state of the field. A brief thread about the book, and the long and ultimately victorious struggle to publish it open access. 1/

It seems that in the recent discussion of the poverty of the stimulus (PoS) & large language models, two versions of PoS are being conflated. Version 1 says human children do not have enough data to learn abstract grammatical representations from *their* input. LLMs have nothing to say