Petar Veličković

3.3K posts

@PetarV_93

Senior Staff Research Scientist @GoogleDeepMind | Affiliated Lecturer @Cambridge_Uni | Assoc @clarehall_cam | GDL Scholar @ELLISforEurope. Monoids. 🇷🇸🇲🇪🇧🇦

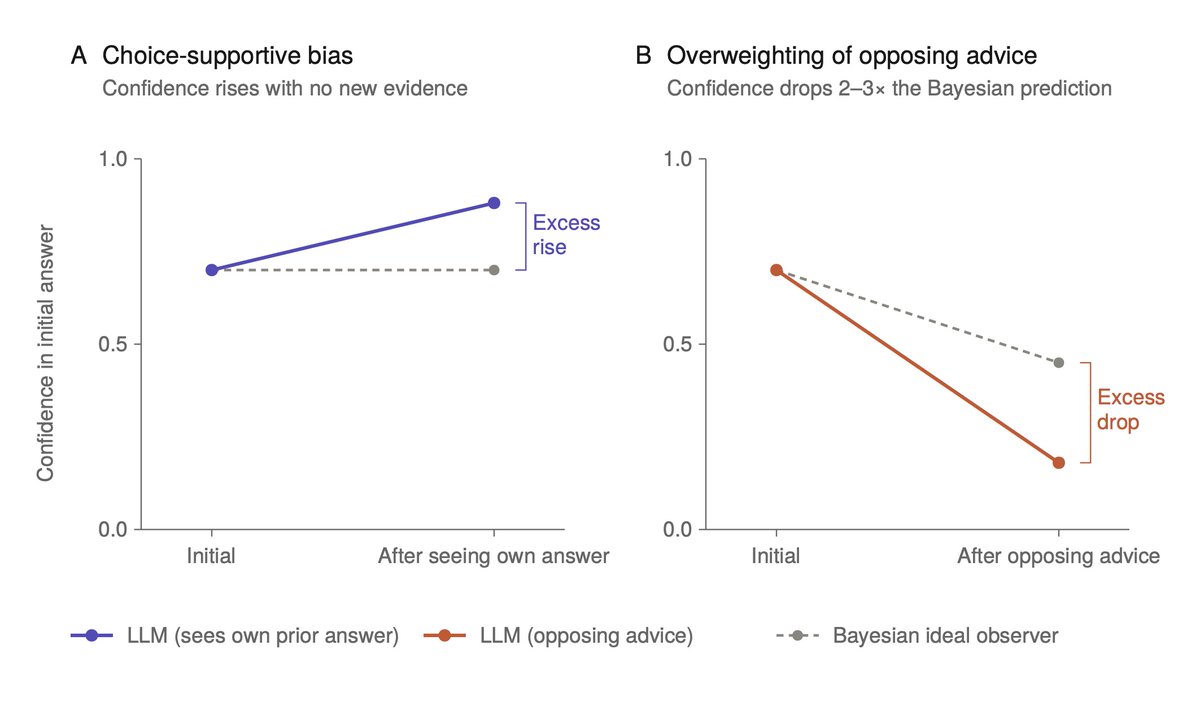

new preprint: investigating pathways language models use to verbalise their confidence! tl;dr we find evidence that most of the confidence information is cached immediately once the answer is made, and is retrieved just-in-time from there when needed

🚨🌶️ Did you realise you can get alignment 'training' data out of open weights models? Oops. We show that models will regurgitate alignment data that is (semantically) memorised. This data can come from SFT and RL... and can be used to train your own models! 🧵

oh, did i say chapter? i meant _chapters_. We've just released draft Chapter 6 (Grids) and Chapter 7 (Group Convolutions on Homogeneous Spaces) of the GDL Book. Alice's journeys in geometric wonderland continue #️⃣🌍

Exactly 7 years ago, I attended my first ML event @Cambridge_CL, celebrating David MacKay's work. Among many great talks (incl. @geoffreyhinton), the highlight was the surprise "night-time" information theory talk from Prof MacKay himself. I believe this was his last talk. RIP.

In 2023, I paused my PhD to join @OpenAI to build the world’s first reasoning machine: OpenAI o1. Earlier this year, I defended my PhD thesis “Building a Reasoning Machine”, advised by @Yoshua_Bengio at @Mila_Quebec 🎓 🎉

Much has changed since Yoshua and I first discussed reasoning in 2022, but the main themes aged well:

- Adding structures to computation unlocks strong reasoning capabilities;
- Data & sample efficiency will become the bottleneck to useful intelligence;
- Retaining Bayesian uncertainty is key to reliable and safe AI systems.

You can read the introduction of my thesis here: edwardjhu.com/thesis/

My next professional chapter (TBA) will be on bridging frontier intelligence with real economic impact, a theme dear to my heart after working closely with @drwconvexity and @suna_said in the last year 🚀