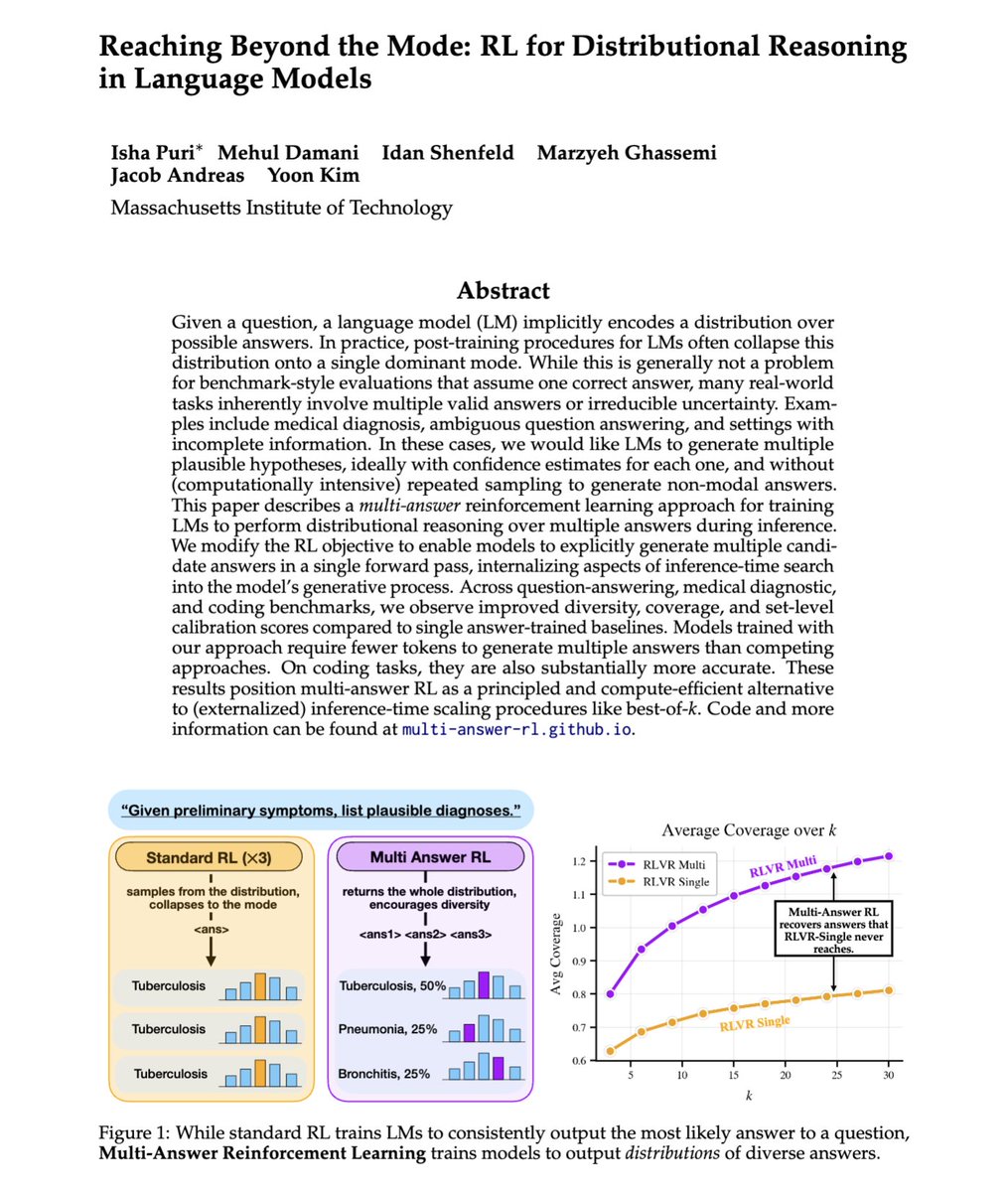

Researchers just taught AI to reason with up to 12x fewer tokens, and without using words.

Reasoning chains are powerful but expensive.

Every token a model "thinks" costs time and money.

A new paper called Abstract Chain-of-Thought proposes a fix.

Instead of reasoning in full sentences, the model invents its own compressed language.

It uses reserved placeholder tokens as shorthand for entire thoughts.

The result is up to 11.6x fewer reasoning tokens with comparable accuracy.

Training happens in two stages:

1. A warm-up loop teaches the model what these abstract tokens mean using a teacher's verbal reasoning.

2. Reinforcement learning then refines how the tokens are sequenced for better answers.

Tested on math, instruction-following, and multi-hop benchmarks, performance held up against verbal chains.

Even stranger, the abstract vocabulary started forming patterns similar to real language.

A few frequent tokens dominated, the way common words do in natural language.
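
For anyone who wants to check a claim like this on their own traces, here is a minimal sketch of a rank-frequency test. It is not the paper's analysis: the token names (`<a0>`, `<a1>`, ...) and the toy trace are made up for illustration, and the slope fit is a plain least-squares line on log rank vs. log count. A slope near -1 is the classic Zipf-like signature seen in natural language.

```python
from collections import Counter
import math


def rank_frequency(tokens):
    """Return (rank, count) pairs, ordered from most to least frequent."""
    counts = Counter(tokens)
    ordered = sorted(counts.values(), reverse=True)
    return list(enumerate(ordered, start=1))


def zipf_slope(tokens):
    """Least-squares slope of log(count) against log(rank)."""
    pairs = rank_frequency(tokens)
    xs = [math.log(rank) for rank, _ in pairs]
    ys = [math.log(count) for _, count in pairs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    if var_x == 0:
        raise ValueError("need at least two distinct tokens to fit a slope")
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return cov_xy / var_x


# Toy trace with hypothetical abstract-token names (not from the paper).
# Counts are 60, 30, 20, 15, i.e. proportional to 1 / rank.
trace = ["<a0>"] * 60 + ["<a1>"] * 30 + ["<a2>"] * 20 + ["<a3>"] * 15

print(rank_frequency(trace))
print(round(zipf_slope(trace), 3))
```

On real reasoning traces the fit will be noisier, so treat the slope as a rough indicator rather than proof of language-like structure.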
