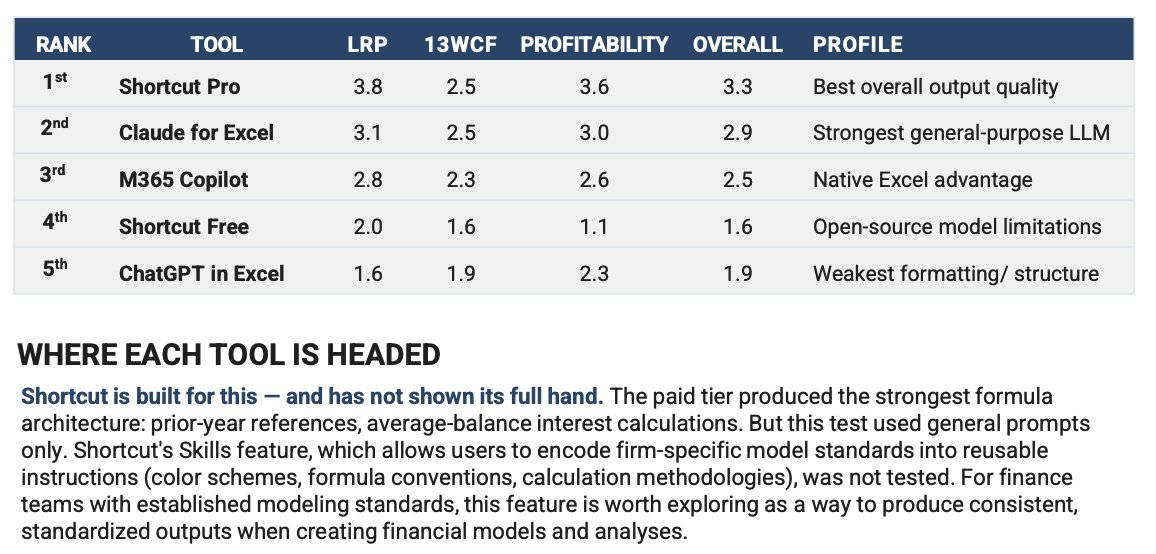

Pinned Tweet

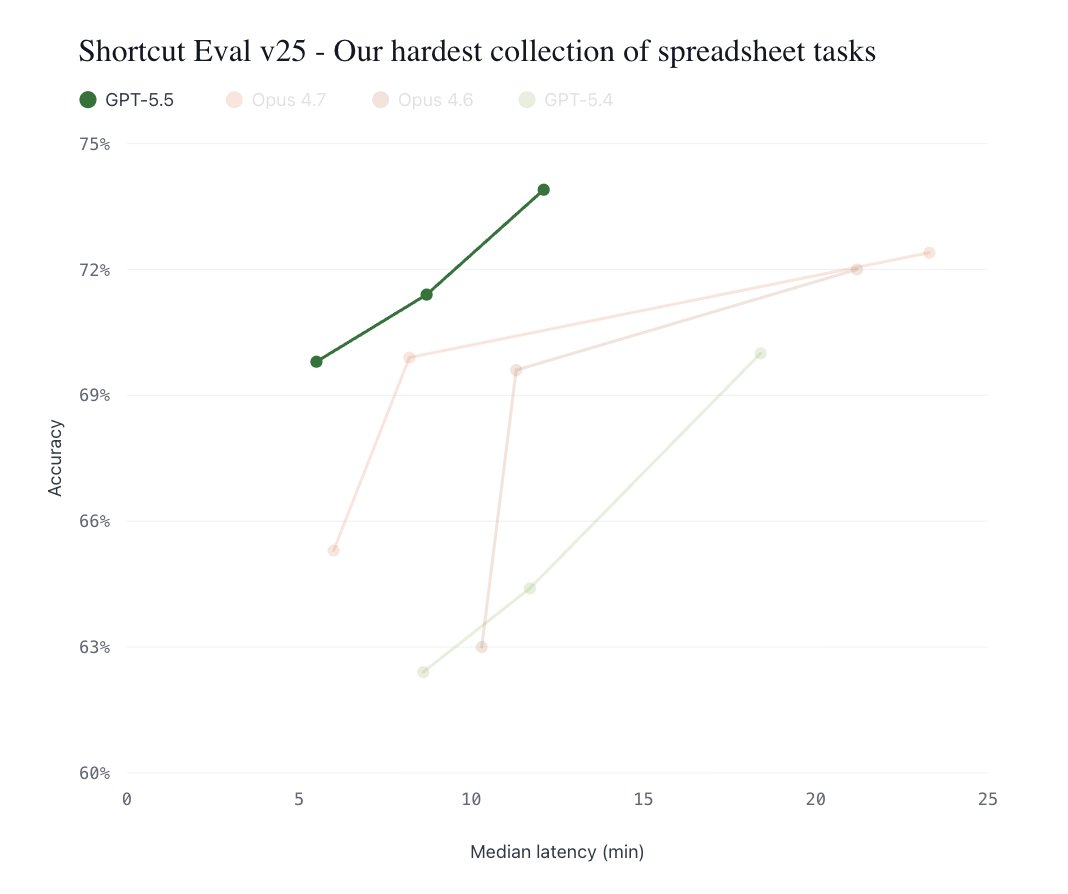

Introducing ShortcutXL - your favorite spreadsheet agent is now available as a desktop generalist.

Unlock Excel capabilities that no other spreadsheet agent can touch (VBA macros, What-if analyses, and more).

Have a swarm of Shortcut agents work on dozens of models in parallel.

This is just a taste. Try ShortcutXL now: npmjs.com/package/shortc…
