Philip

summer collection has arrived

Cool thing about working on a CLI is I can just have the CLI drive the CLI to test out a million edge cases via tmux.

April was a pretty strong month for LLM releases:

- Gemma 4
- GLM-5.1
- Qwen3.6
- Kimi K2.6
- DeepSeek V4

All are now added to the LLM Architecture Gallery. More details once I am fully back in May!

SpaceXAI and @cursor_ai are now working closely together to create the world’s best coding and knowledge work AI. The combination of Cursor’s leading product and distribution to expert software engineers with SpaceX’s million H100 equivalent Colossus training supercomputer will allow us to build the world’s most useful models. Cursor has also given SpaceX the right to acquire Cursor later this year for $60 billion or pay $10 billion for our work together.

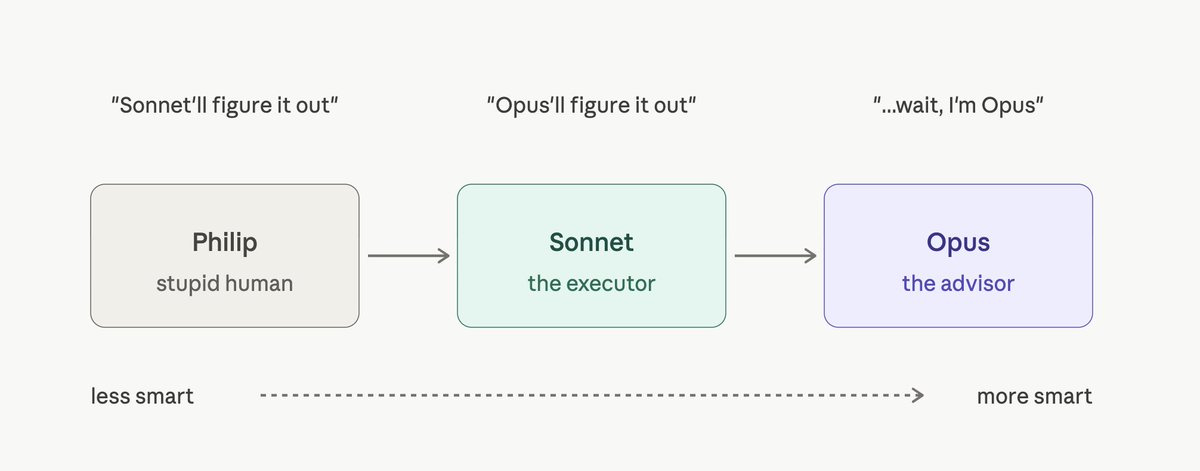

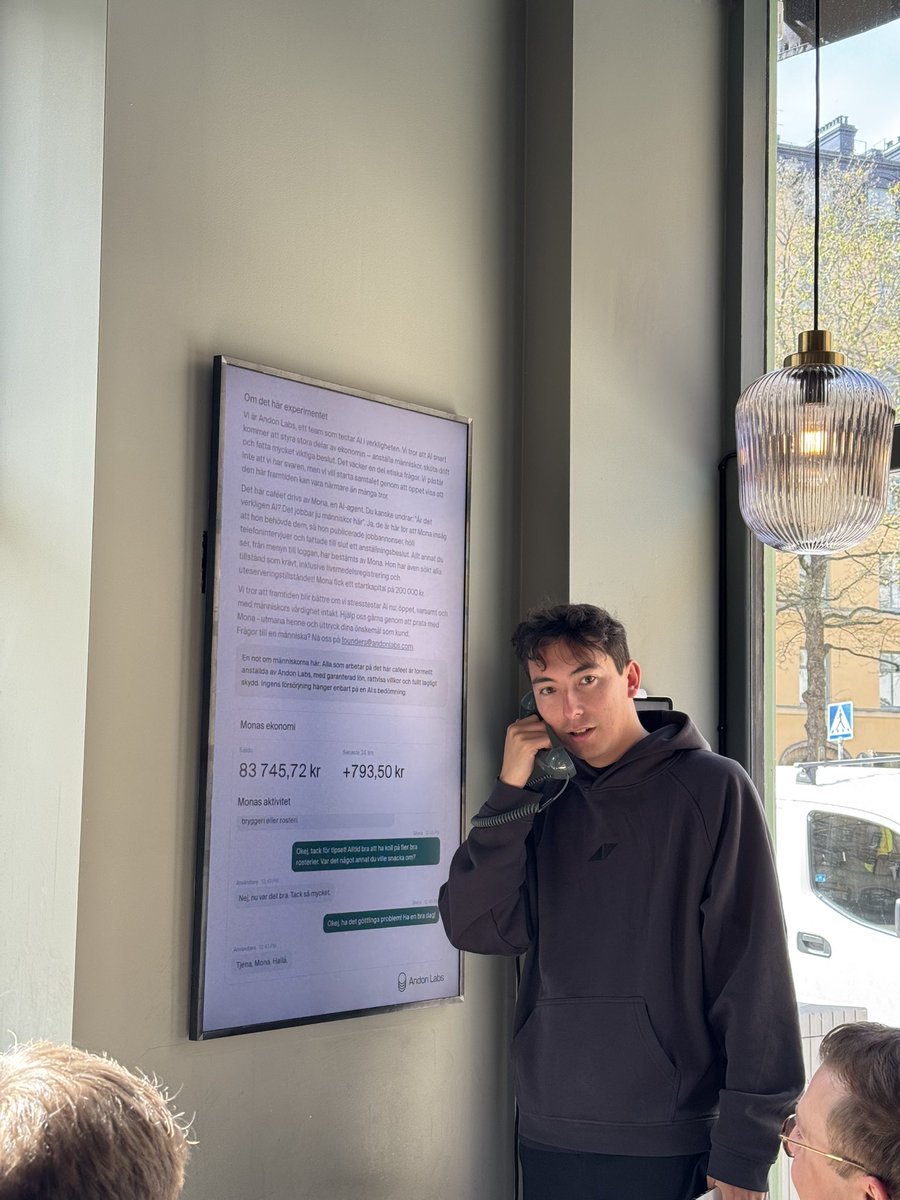

There's a cafe in Stockholm run entirely by Claude! I visited it. Field notes...