@Majumdar_Ani The commercial incentives point is huge. Video models get billions for content — robotics rides the wave.

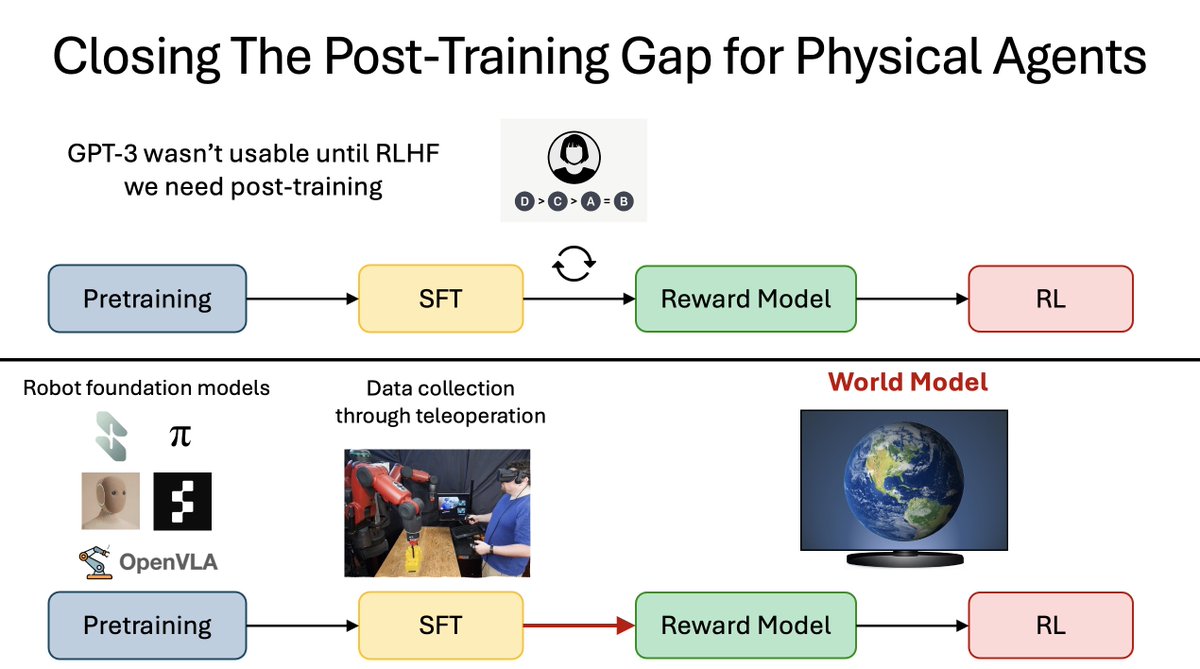

DreamZero's middle ground: it dreams in pixels, but action grounding might naturally filter out irrelevant features.

Does action-conditioning implicitly "predict the predictable"?
