Stephen James

508 posts

@stepjamUK

CEO @Neuracore_AI | Assistant Professor @imperialcollege | ex-Director of Dyson Robot Learning Lab | Postdoc @UCBerkeley w/ @pabbeel | PhD ICL w/ @ajdDavison

New to Neuracore? Check out our latest platform tour on YouTube and see how teams collect, observe, train, and deploy, all in one workflow. youtu.be/kjQ8RWJExb4

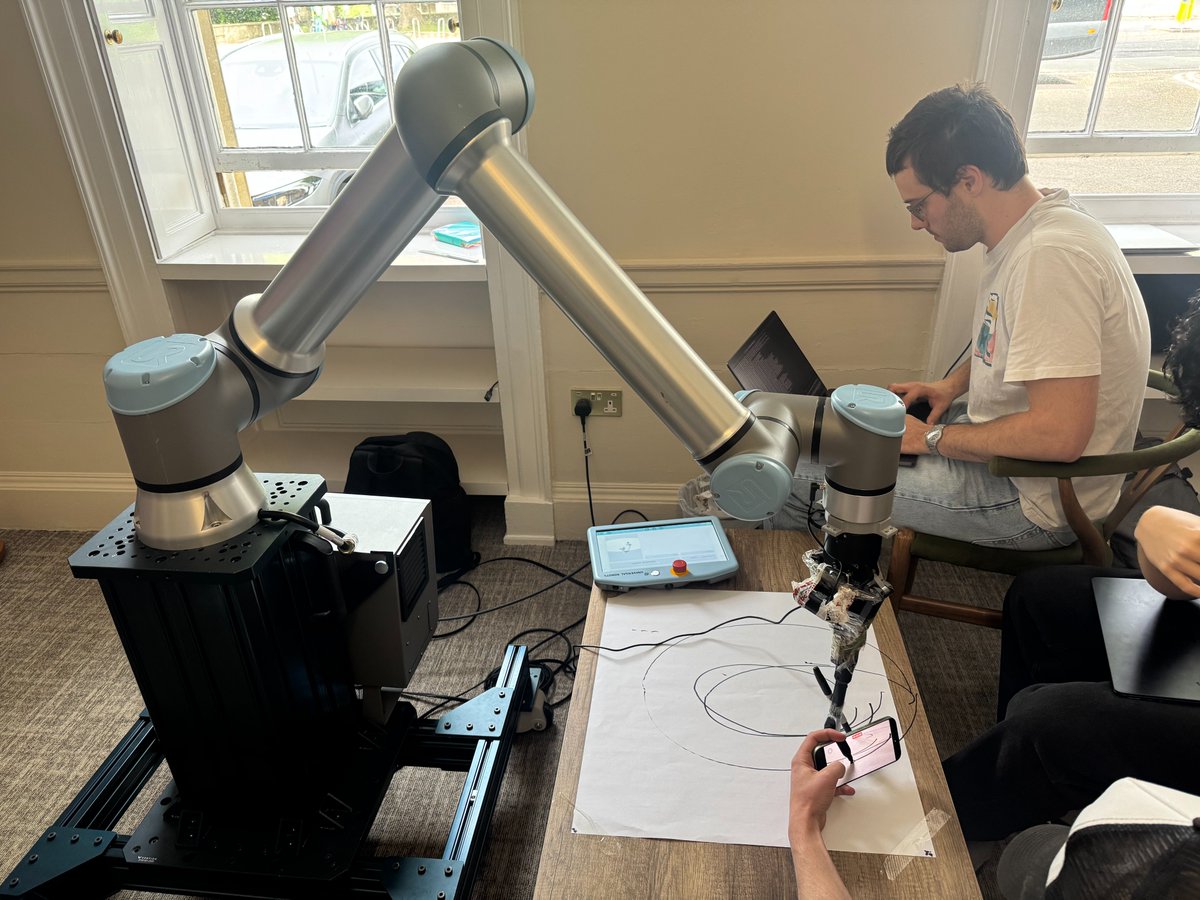

This weekend we're powering the Oxford Hardware / Physical AI Hackathon at @UniofOxford, with free access to the Neuracore platform for every participant. Hosted by The Oxford Edge and @OxfordAI with hardware from @FoundryRobotics, @Quanser and @huggingface LeRobot. Sensor kits from Atech. Coding credits from @AnthropicAI and @Cursor. If you're going, come find us!

Restaurants don't need convincing on robotics. They need the layer that sits underneath it. @IvanTregear, CTO of @KAIKAKU_AI, speaks on the misconception that operators are tech-resistant. Most are eager to deploy. The real blocker is foundational: no databases, no analytics, no sensing layer for automation to act on. Head to the link in the comments to watch our new series exploring Robotics in Europe.

Most robot learning stacks assume you've already picked your hardware. Switch arms, switch grippers, switch sensors, and your data pipeline breaks. That's the Infrastructure Tax. And it's the reason teams spend more time wiring up robots than training models. Neuracore is hardware agnostic by design. Here it is making a cup of tea on an Open Arm. The same platform runs the same way on any embodiment you point it at, from research arms to industrial manipulators to humanoids. One stack. Any robot. Clean, high-fidelity data flowing into your training pipeline regardless of what's holding the kettle. Your hardware shouldn't decide your roadmap.

One week to go. Next Tuesday, our Founder and CEO Stephen James takes the stage at STIQ Ltd's Robotics & Automation networking event, one of London's sharpest gatherings for roboticists, operators, buyers and investors. Stephen joins the panel with Obinna Njoku (@PepsiCo), Mark Slack (@CMRSurgical), Caroline France (@SciTechgovuk) and Oana Andreea Jinga (@dexoryHQ), hosted by Tom Andersson, to talk about how we're building the cloud-native infrastructure that closes the gap between simulation and real-world robot deployment.
📍 20 Primrose St, London
⏰ Tuesday 19 May, 17:30 to 21:00
Sign up here: luma.com/avzbz7v0?tk=cU…

New Release V11.0.0 | Embodiment architecture and fleet-scale data infrastructure
Neuracore V11.0.0 introduces embodiment-level abstraction, eliminates per-robot configuration, and scales data collection to 1,000 concurrent streams for warehouse deployments.
What's new:
• Embodiment refactor enables fleet-wide configuration without per-robot customization
• Batch recording of traces reduces I/O overhead by 60% in distributed deployments
• Auto batch size optimization adjusts to available memory and sensor throughput
• Maximum concurrent streams increased from 100 to 1,000 for warehouse-scale fleets
• Improved CLI profiles and error logging to cloud buckets for production debugging
The Neuracore v11.0.0 release notes are linked in the comments below.
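The release notes don't explain how auto batch size optimization works internally. As a rough illustration of the general idea only, here is a minimal Python sketch. It assumes a recorder that buffers samples from several sensor streams and flushes them in one batched write. `StreamProfile`, `choose_batch_size` and every parameter are hypothetical names, not part of the Neuracore API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StreamProfile:
    """One sensor stream feeding the recorder (hypothetical type)."""

    bytes_per_sample: int      # encoded size of one sample, e.g. one RGB frame
    samples_per_second: float  # sensor throughput in Hz


def choose_batch_size(
    streams: list[StreamProfile],
    available_memory_bytes: int,
    memory_fraction: float = 0.5,
    max_latency_s: float = 1.0,
    min_batch: int = 1,
    max_batch: int = 1024,
) -> int:
    """Return how many samples to buffer per stream before one batched write.

    Hypothetical heuristic: the batch must fit in a fraction of free memory,
    and even the slowest stream must fill it within `max_latency_s`.
    """
    if not streams:
        raise ValueError("need at least one stream")

    # Memory bound: one "batch unit" holds one sample from every stream.
    bytes_per_unit = sum(s.bytes_per_sample for s in streams)
    if bytes_per_unit <= 0:
        raise ValueError("streams must have a positive sample size")
    memory_budget = int(available_memory_bytes * memory_fraction)
    memory_cap = memory_budget // bytes_per_unit

    # Throughput bound: the slowest stream must fill the batch within the latency target.
    slowest_rate = min(s.samples_per_second for s in streams)
    latency_cap = int(slowest_rate * max_latency_s)

    # Take the tightest bound, then clamp to [min_batch, max_batch].
    return max(min_batch, min(memory_cap, latency_cap, max_batch))


# Example: a 640x480 RGB camera at 30 Hz plus a 7-joint float64 state stream at 500 Hz.
camera = StreamProfile(bytes_per_sample=640 * 480 * 3, samples_per_second=30.0)
joints = StreamProfile(bytes_per_sample=7 * 8, samples_per_second=500.0)
streams = [camera, joints]
print(choose_batch_size(streams, available_memory_bytes=8 * 1024**3))  # 30: latency-bound
```

The sketch takes the minimum of a memory bound and a latency bound. As a result, the batch shrinks on memory-constrained edge devices, and it stays small when a slow sensor would otherwise delay each write.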