Pranay Agrawal

@praggr

Unfiltered thoughts. @codepragrr

He reinvented the 3D printer. Introducing the polysynth mini:

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length. 🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models. 🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice. Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today! 📄 Tech Report: huggingface.co/deepseek-ai/De… 🤗 Open Weights: huggingface.co/collections/de… 1/n

In my doctorate, I proved the Erdős Primitive Set Conjecture, showing that the primes themselves are maximal among all primitive sets. This problem will always be in my heart: I worked on it for 4 years (even when my mentors recommended against it!) and loved every minute of it. [Primitive sets are a vast generalization of the prime numbers: A set S is called primitive if no number in S divides another.] Now Erdős#1196 is an asymptotic version of Erdős' conjecture, for primitive sets of "large" numbers. It was posed in 1966 by the Hungarian legends Paul Erdős, András Sárközy, and Endre Szemerédi. I'd been working on it for many years, and consulted/badgered many experts about it, including my mentors Carl Pomerance and James Maynard. The proof produced by GPT5.4 Pro was quite surprising, since it rejected the "gambit" that was implicit in all works on the subject since Erdős' original 1935 paper. The idea to pass from analysis to probability was so natural & tempting from a human-conceptual point of view that it obscured a technical possibility to retain (efficient, yet counter-intuitive) analytic terminology throughout, by use of the von Mangoldt function \Lambda(n). The closest analogy I would give would be that the main openings in chess were well-studied, but AI discovers a new opening line that had been overlooked based on human aesthetics and convention. In fact, the von Mangoldt function itself is celebrated for its connection to primes and the Riemann zeta function--but its piecewise definition appears to be odd and unmotivated to students seeing it for the first time. By the same token, in Erdős#1196, the von Mangoldt weights seem odd and unmotivated but turn out to cleverly encode a fundamental identity \sum_{q|n}\Lambda(q) = \log n, which is equivalent to unique factorization of n into primes. This is the exact trick that breaks the analytic issues arising in the "usual opening".
Moreover, Terry Tao has long suspected that the applications of probability to number theory are unnecessarily complicated and this "trick" might actually clarify the general theory, which would have a broader impact than solving a single conjecture.
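
For readers who want to see the identity \sum_{q|n}\Lambda(q) = \log n in action, here is a minimal numerical sketch. It only checks the identity for small n and says nothing about the proof itself; the function names `von_mangoldt` and `divisor_sum` are made up for this illustration and do not come from the post.

```python
import math


def von_mangoldt(n: int) -> float:
    """Lambda(n) = log p if n = p^k for a prime p and k >= 1, otherwise 0."""
    if n < 2:
        return 0.0
    # Find the smallest prime factor p of n by trial division.
    p = 2
    while p * p <= n:
        if n % p == 0:
            break
        p += 1
    else:
        p = n  # no factor up to sqrt(n), so n itself is prime
    # n is a prime power exactly when dividing out every copy of p leaves 1.
    m = n
    while m % p == 0:
        m //= p
    return math.log(p) if m == 1 else 0.0


def divisor_sum(n: int) -> float:
    """Sum of Lambda(q) over all divisors q of n (hypothetical helper)."""
    return sum(von_mangoldt(q) for q in range(1, n + 1) if n % q == 0)


if __name__ == "__main__":
    # Each prime power p^k dividing n contributes log p, so the sum
    # rebuilds log n from the prime factorization of n.
    for n in (1, 12, 97, 360):
        print(n, divisor_sum(n), math.log(n))
```

For n = 12 the divisors with nonzero weight are 2, 3, and 4, contributing log 2 + log 3 + log 2 = log 12, which is unique factorization written additively.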

one of my all-time favorite plots

If OpenAI and Anthropic both finished training surprisingly capable large models at roughly the same time in early March, then this is potentially purely a result of scale. Q1 2026 was just the first time anyone had enough compute to train at this level. If this really comes down to how fast, and to what extent, you can scale physical infrastructure, then I think it probably becomes very difficult to beat Elon after around 2030. If the race goes that long, and we are still pre-transformative, he will just keep ramping up physical constructs. He will literally build a datamoon if that's what it takes to win a contest of scale. If orbital datacenters work, he probably also wins that way due to SpaceX. Mark Zuckerberg is just as scale-pilled. Last year, when he was pressed on capex during the earnings call, he said that he would rather overbuild now than risk missing the next leap that requires 10x more compute to train. The last eighteen months have shown how valuable top human talent in this industry still is, but even senior people at OpenAI and Anthropic now say openly that they do not know how long they themselves will still have these jobs. Once automated researchers are superhuman, top talent will be supplanted by how many super-researchers you can run simultaneously. It will be difficult to beat Elon and Zuck at this game by the end of the decade. This is what Stargate is for, but will it be enough? Against xAI, META, Microsoft, and Google, it seems that OpenAI and Anthropic have to blitz now; reach a sufficient capability threshold to surpass the human level, then automate as much of the economy as possible as fast as possible before they are outbuilt.

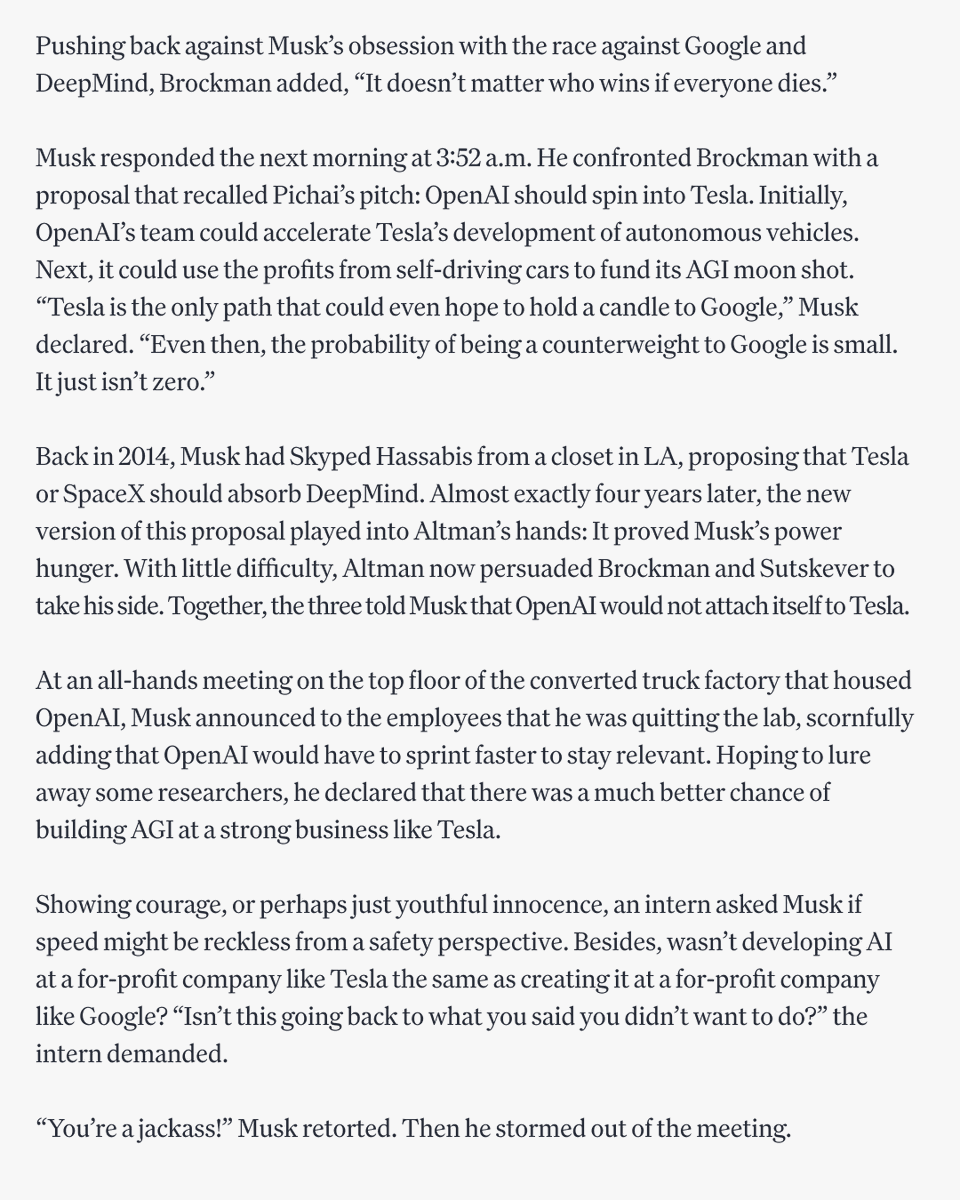

We're publishing an exclusive chapter from @scmallaby's brilliant new book about Demis Hassabis and DeepMind. This is the inside story of Project Mario. How DeepMind's co-founders spent 4 years trying every mechanism they could think of to put guardrails around AGI, only to watch each one fail, and conclude that the only safeguard was themselves. It reveals that Hassabis ran a secret hedge fund team inside DeepMind trying to beat Renaissance Technologies; Mustafa Suleyman assembled lawyers for a $5 billion walkaway plan; Reid Hoffman committed $1 billion of his personal fortune to back them; Google kept saying yes and no at the same time—and the endless negotiations left Hassabis so distracted that when the transformer paper dropped in 2017, he was less alert to its significance than he might have been. Meanwhile, OpenAI was fighting the mirror-image battle with Musk, Altman, and Sutskever tearing each other apart over the same question: who gets to control AGI? Musk proposed folding OpenAI into Tesla. When that failed, he stormed out. When OpenAI's nonprofit board finally tried to assert authority in 2023, it was crushed in days. Both camps arrived at the same unsettling conclusion, that governance structures don't hold. The best safeguard either side could come up with? Trust us. Read the chapter in the link below.

now that i'm doing actual research instead of just engineering i realize lecun was right and elon was wrong