
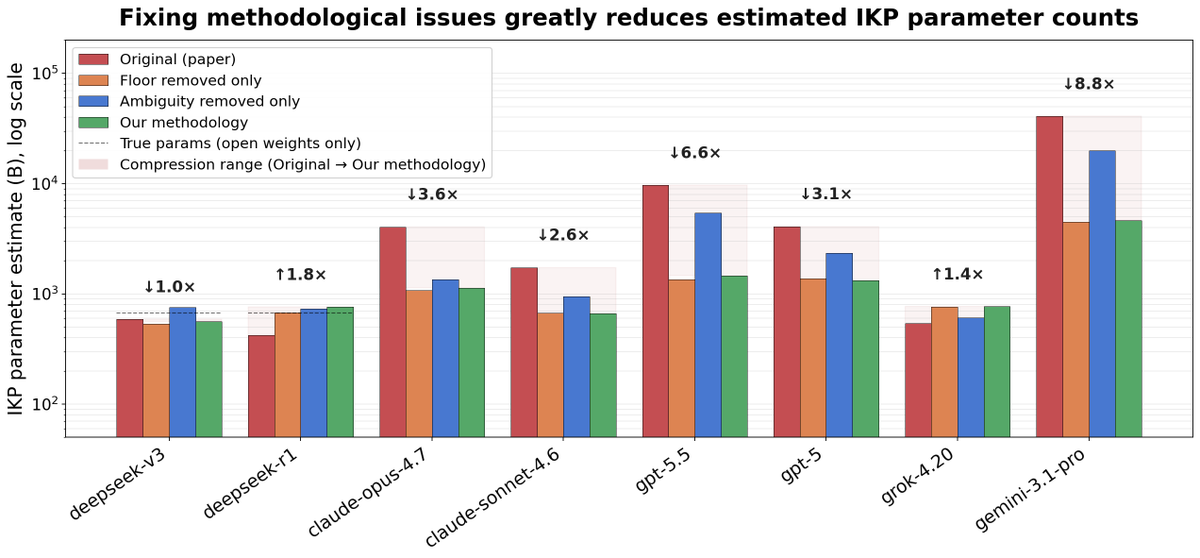

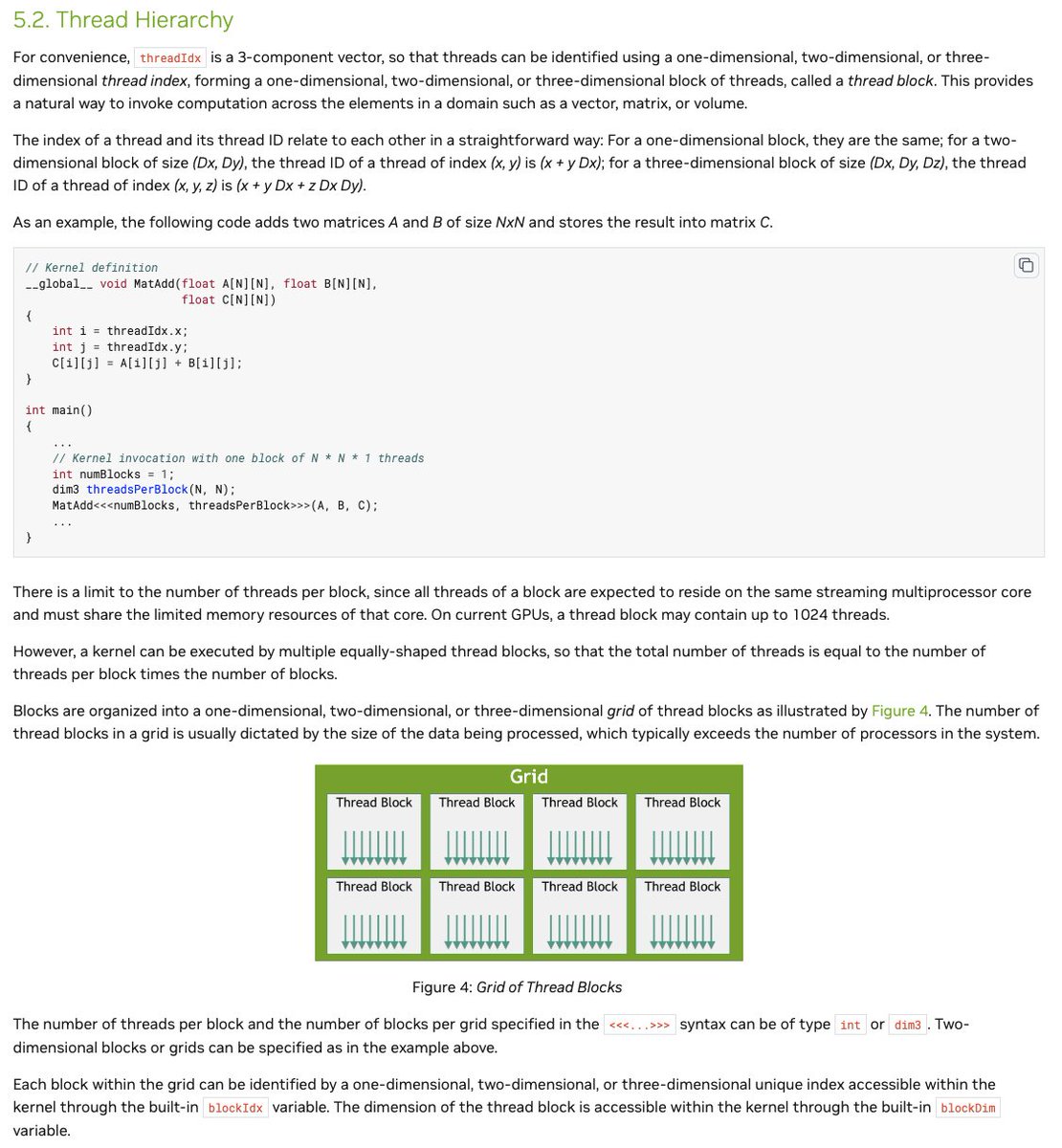

Can frontier AI models actually read a painting?

I tested 4 frontier AI models on 15 artworks worth $1.46B, first from the image alone and then with basic metadata.

What I found was not just a performance gap, but a gap between recognition and commitment.

Three of the four models could identify the correct artist from pixels alone on essentially every painting. But that did not mean they would commit to the valuation implied by what they saw.

Gemini 3.1 Pro was strongest in both settings. GPT-5.4 improved sharply once metadata was added.

Blog: arcaman07.github.io/blog/can-llms-…

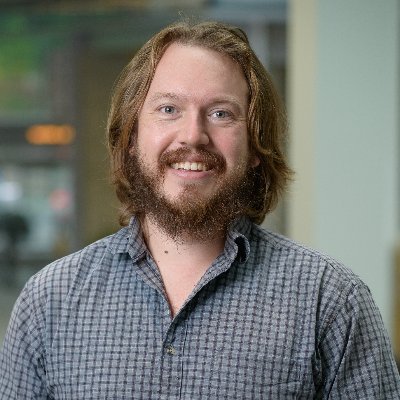

Aman (@arcaman07)
