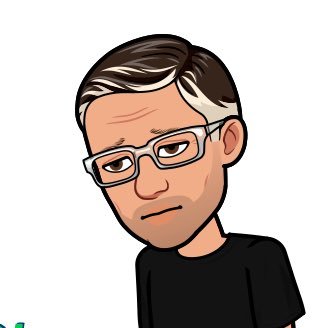

D.S. Nelson

@process_x

Independent Researcher on AI system reliability & constraint architectures. | Author: Constrained Informational Systems | Interests: Science, Systems, Villainy

New Study: Frequency of Persistent Opioid Use 6 Months After Exposure to IV Opioids in the Emergency Department: A Prospective Cohort Study. Conclusion: In conclusion, among 506 opioid naïve ED patients administered IV opioids for acute severe pain, only one used opioids persistently during the subsequent 6 months. Our findings suggest that the use of IV opioids for acute pain in the ED is extremely unlikely to lead to opioid use disorder. J Emerg Med. 2024 Aug;67(2):e119-e127. doi: 10.1016/j.jemermed.2024.03.018. Epub 2024 Mar 14. PMID: 38821847; PMCID: PMC11290990. Irizarry E, Cho R, Williams A, Davitt M, Baer J, Campbell C, Cortijo-Brown A, Friedman BW.