Pieter v.

1.3K posts

@psvann

AI Product and Growth Consultant. Former: Growth @SlimDevOps. PM @TripAdvisor, @Orbitz / Writer & Editor @Forbes, @Money, @Entrepreneur, @Outside.

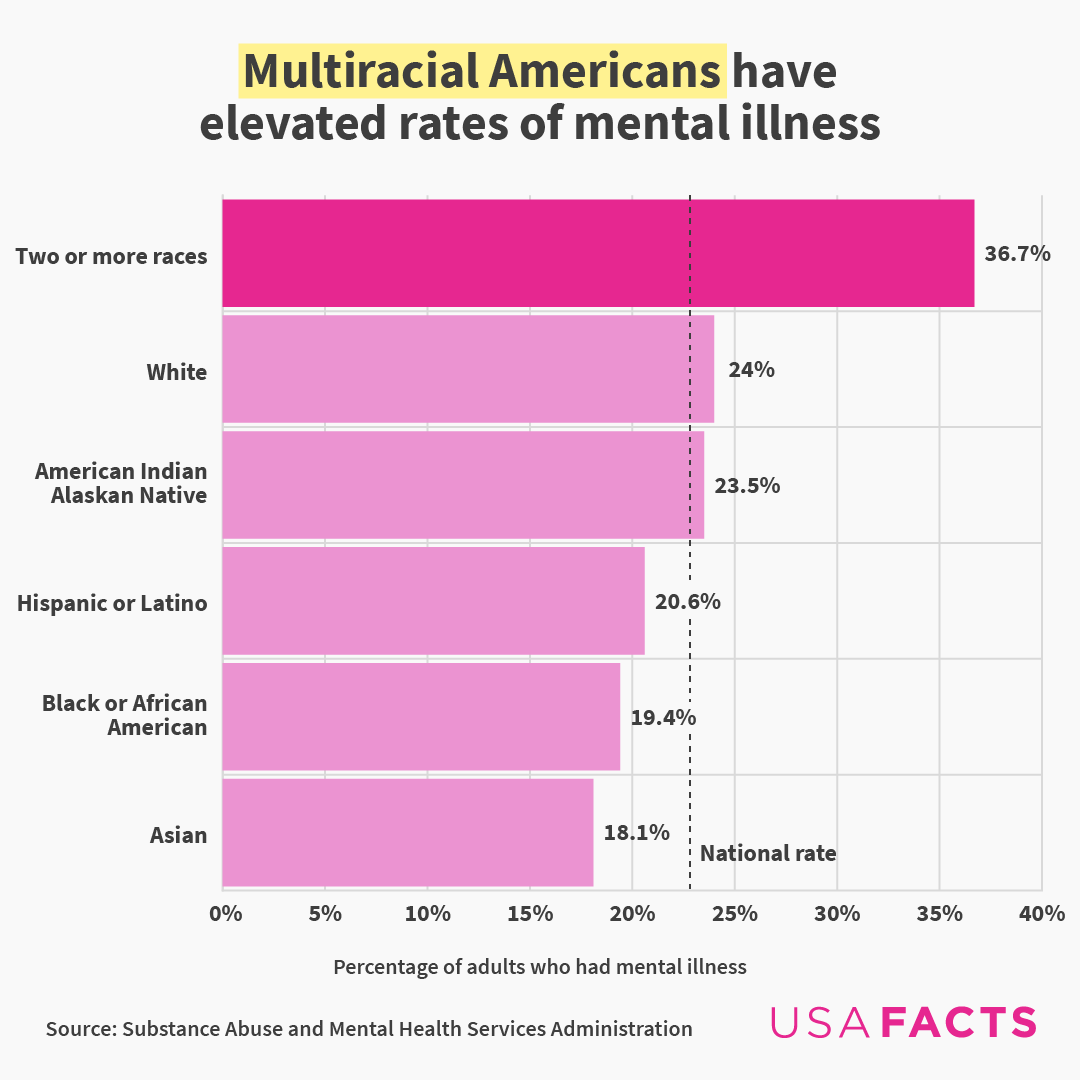

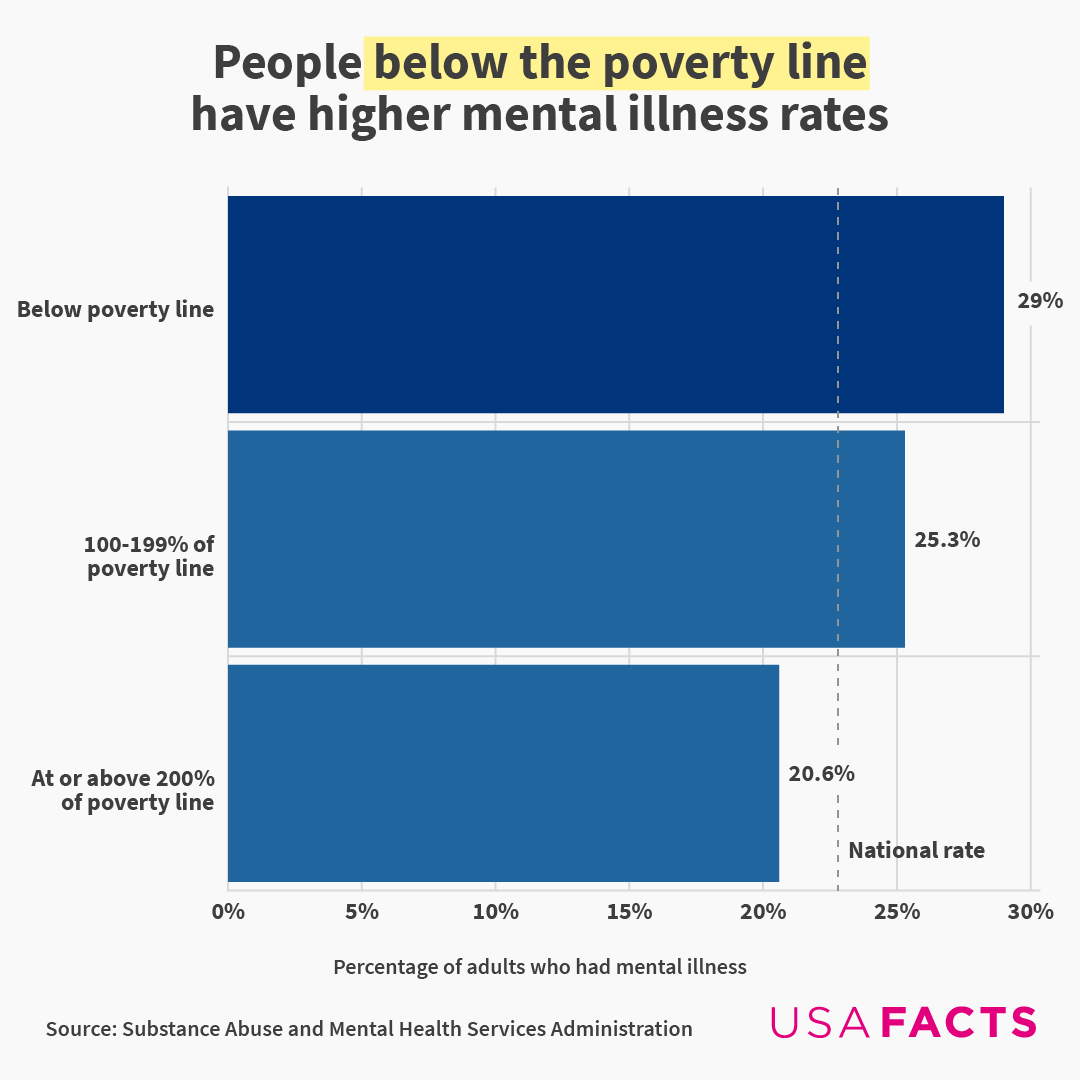

America is made up of 3.8 million square miles of land — and home to 337 million people. Watch #JustTheFacts with Steve Ballmer to learn more about America by the Numbers.

ARC Prize is coming to @CarnegieMellon @SCSatCMU tomorrow! If you attend CMU, join @mikeknoop live for a technical deep dive into frontier AGI research. lu.ma/3rx2hqxv

Notes from the conversation between @sama and @kevinweil: "With o1 (and its predecessors) 2025 is when agents will work."

* How close are we to AGI? After finishing a system they would ask, "in what way is this not an AGI?" The word is overloaded. o1 is level two AGI.
* Increasing the rate of scientific discovery is a north star for AGI that Sam aims for
* "The fact that definitions matter this much means we are getting close"
* "We are in this period where it is going to feel blurry for a while" (with respect to identifying AGI)
* "If we can make an AI system that is better at AI research than OpenAI is, then that feels like a real milestone"
* Mission: Build safe AGI. If the answer is a rack of GPUs, they'll do that. If the answer is research, they'll do that
* "It's easy to copy things you know that work. But to do something new for the first time, 'Let's go find the new paradigm', that is what motivates us."
* On alignment: "It's true we have a different take on alignment than...whatever that internet forum is..." "We want to figure out how to build capable models that get safer and safer over time" "We have an approach of figuring out where the capabilities are going, then working to make it safe. o1 is our most capable model, and it's our most aligned model too"
* "Iterative deployment is the best safety system we have"
* "I think worrying about the scifi is one of the most important things we have"
* "No matter how many smart people you have inside your walls, there are way more smart people outside your walls" - Kevin
* How do agents fit into OpenAI's long-term plans? o1 models, and all of its predecessors, will be the thing that makes agents actually happen.
* "People get used to any new tech quickly, but this (agents) will be a big deal"
* "People will ask an agent to do something that would have taken them a month, and it'll take an hour. Then they'll have 10x agents, then they'll have 1000x agents"
* What's the blocker to agents controlling your computer? Safety and alignment
* Figuring out the boundary of what AI can do today but can't fully do yet is the sweet spot, because you'll be the go-to when the new model comes out
* Sam: people think that technology makes a company. Not true; it takes a lot of execution. "It doesn't excuse you from building a good business." "People are tempted to forget that with AI"
* "Saying please/thank you to ChatGPT is a good thing to do, you never know"
* Before the end of the year, o1 will support function calling, along with system prompts and structured output
* "The model is going to get so much better so fast" We know how to get it to the next level. "Plan for the model to get rapidly smarter"
* "Google's notebook thing is really cool, what's it called again?" - Sam
* "Anthropic did a good job with projects" - Kevin
* "Do you think you'll be smarter than o2? No one wants to take that bet?"
* Making models that are the best at reasoning is a big deal for the company
* Internal dogfooding is the way they measure how good the models are
* As we move to the world of agents, OpenAI will try virtual employees
* When will there be offline models? "We're open to it, it's not a high priority on the current roadmap." Interesting that Sam pointed to Kevin to answer, then Kevin pointed back and deferred to Sam
* Where do you sit with open source? "I think open source is awesome, if we had more bandwidth, we would do it. We've had to put other things ahead of it." "I do hope we do something (oss) at some point"
* Why can't it sing? "We can't have it sing copyrighted songs. We want it to sing too, but it's nuanced to get it right. We really want the models to sing too."
* Context windows: there are two takes on that. Long context has gotten less usage than Sam thought.
* "When will we get to 10T tokens?" - Sam, "infinite context length will happen within the decade"

TONIGHT: @USAFacts founder @Steven_Ballmer joins Jon Stewart LIVE following the presidential debate!