Ed Sim

@edsim

@boldstartvc partnering from Inception with bold technical founders building the autonomous enterprise, weekly newsletter: What's 🔥 IT/VC 👇🏼

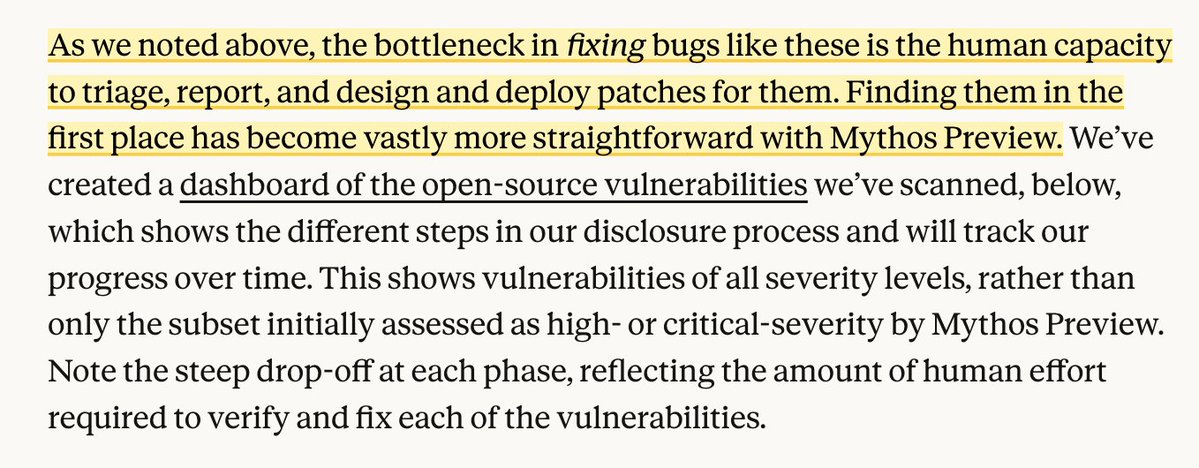

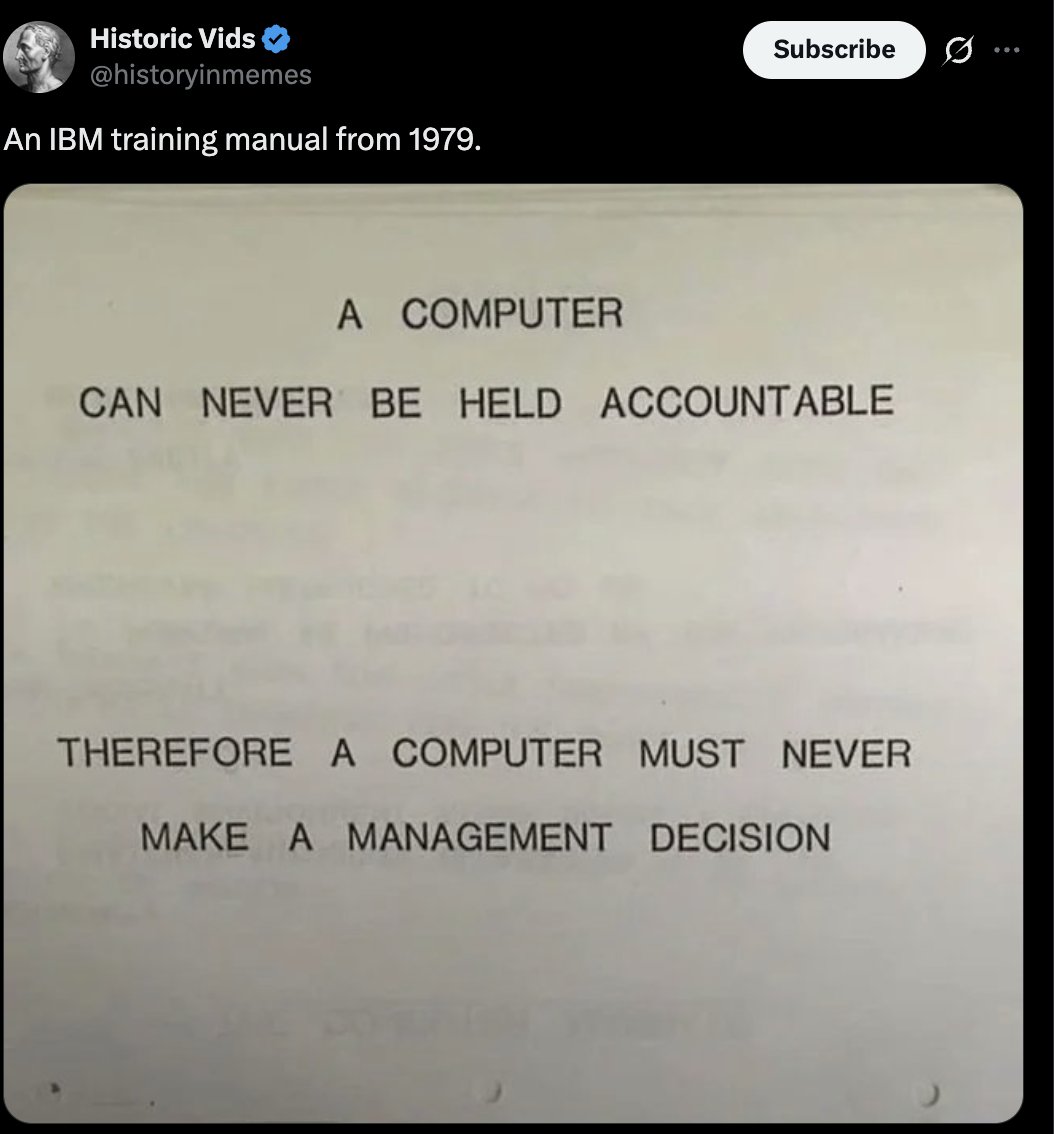

must read as everyone strives to agentify their orgs at a rapid pace - more humans are needed as there is more work and more decisions to be made - counter to the prevailing narrative. also reminds me of this - "a computer can never be held accountable"

Last month we launched Project Glasswing, our collaborative AI cybersecurity initiative. Since then, we and our partners have found more than ten thousand high- or critical-severity vulnerabilities in essential software.

We’ve automated every single thing we can @every with AI agents. And yet there’s way more human work to do than ever. We’ve gone from 4 -> 30 human employees since GPT-3. I wrote a report on the structural reasons: how AI makes expert competence cheap, why that drives up demand for experts, and why the dynamic only intensifies as we approach AGI. After Automation: every.to/p/after-automa…

🇨🇳 NEW: Chinese cities are rolling out AI-powered robot barber kiosks that scan customers in 3D and cut hair with millimeter precision for just 60 yen per session.

AI isn’t going to take all jobs. But it will fulfill the prophecy of Peter Drucker from 71 years ago: more builders, more sellers, fewer measurers. wsj.com/opinion/how-i-…

Congrats @edsim on being named to @BusinessInsider Seed 100, its annual ranking of the top early-stage investors in the US. This is Ed’s 6th year in a row, landing at #4. A reflection of the incredible technical founders @Boldstartvc is fortunate to partner with at inception. And yes, his bot Chewbarka does a lot of the heavy lifting 🤖

A big pivot from Ken Griffin on AI: “Number one is, in the last few months, there has been a step change in the productivity of the AI toolkit. It is profoundly more powerful than it was just nine months ago. And for us at Citadel, that has allowed us to unleash a much broader array of use cases for AI. And it has been really interesting to watch, to be blunt, work that we would usually do with people with master's degrees and PhDs in finance over the course of weeks or months being done by AI agents over the course of hours or days. These are not mid-tier white collar jobs. These are like extraordinarily high skilled jobs being, I'm going to pick a word, automated by agentic AI. And I gotta tell you, I went home one Friday actually fairly depressed by this because you could just see how this was going to have such a dramatic impact on society. When you witness it in your own four walls, when you see work that used to be man-years of work being done in days or weeks, it's like, wow, like that's the first time I've seen real impact in our four walls.” This echoes my own experience with agents and the conversations I am having with students, friends & clients. The toolkit has dramatically transformed and it feels like in finance, for the first time, AI is real.

Multi-agent AI is the future… but right now it’s a credentials nightmare. One agent calls another, passes down god-mode access, nothing scopes, nothing expires, and you have zero idea who did what. @KeycardLabs just fixed that. Just shipped Keycard for Multi-Agent Apps - scoped delegation, session-based credentials that narrow at every handoff, Cedar policies for precise control, and full transparency. This is the missing infrastructure layer that makes multi-agent systems actually safe and auditable at scale.

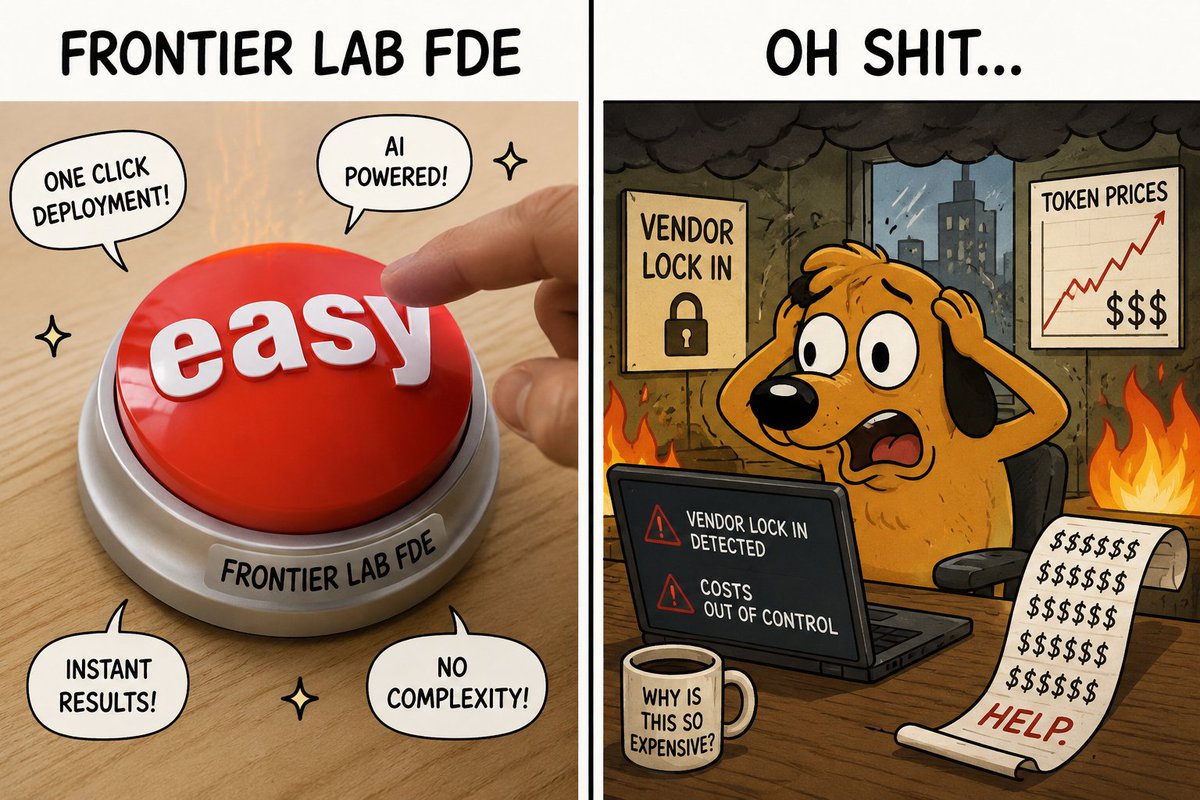

existential question: as every frontier lab, OpenAI, Anthropic, now Google, offers FDEs to solve the enterprise last-mile problem, it gets folks up and running fast. but doesn’t it also accelerate vendor lock-in? Grumblings on token costs are rising...optionality over time will be super important