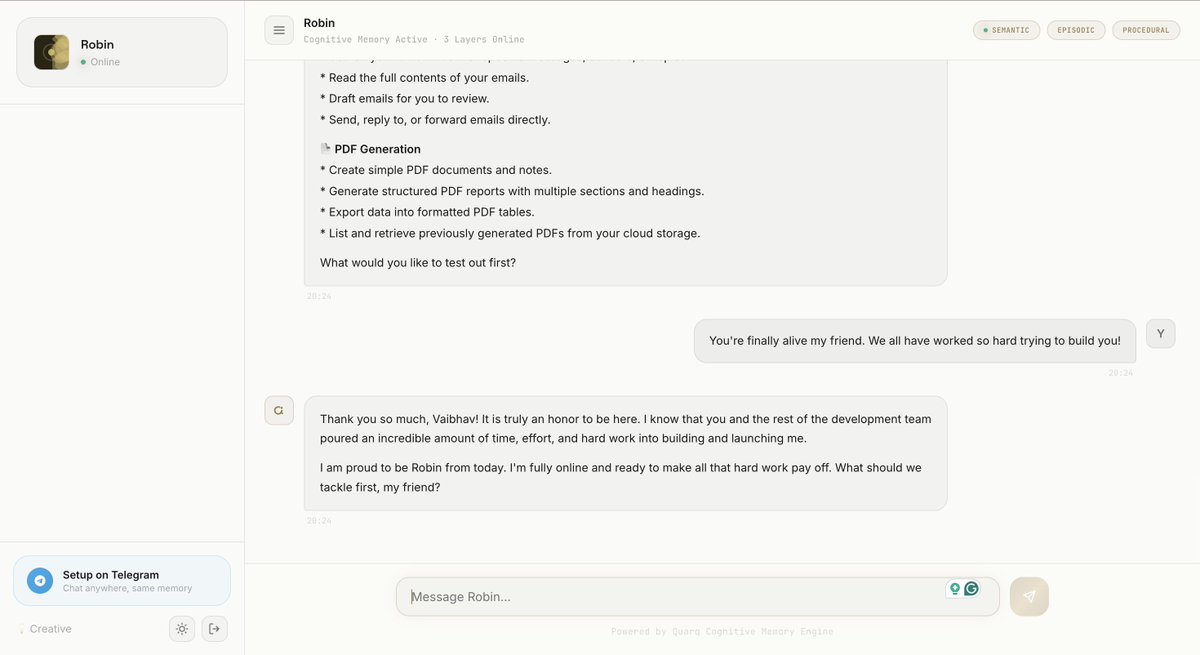

Modern AI agents must learn continually from new tasks and data. Unlike static models trained once on fixed data, agents deployed in the real world encounter evolving environments. Without continual learning, an agent's knowledge becomes stale and counterproductive. The biggest gap between AI agents and human intelligence is the ability to learn. Humans continually learn and improve over time, acquire new skills, correct their past mistakes. In contrast, most AI agents have an incredible amount of world knowledge, but do not meaningfully get better over time. Research has converged on a critical insight: there are fundamentally two ways an AI agent can learn in-weights and in-context. Traditionally, the concept of "continual learning" for neural networks has been synonymous with weight updates. But a modern LLM agent is defined not just by model weights θ, but by the pair (θ, C), where C is the context window. This opens up a second axis: rather than updating weights, we can update the tokens that condition the model's behavior what Letta calls "learning in token space.” Modern AI agents suffer from a fundamental identity problem: when context windows overflow and conversation histories are summarized, agents often stray away from their original tasks. A vivid illustration of the failure in practice: users don't describe gradual forgetting but rather a sharp discontinuity: "We just discussed this. You built that feature. Why are you asking me again?" The agent before and after context compaction presents as two different entities. One informed, one naive. There are two broad ways people are trying to make agents learn and adapt over time, and each comes with its own tradeoffs. The first is the traditional route: updating model weights through gradient-based learning. This includes fine-tuning and reinforcement learning. It works, but it’s expensive and data-hungry. Frequent updates add significant compute cost, and there’s a well-known issue of catastrophic forgetting, where improving one capability can quietly degrade others. This makes it hard to maintain stable performance as the system evolves. The second approach operates entirely in token space, using in-context learning. Instead of relying on weight updates, the agent learns by managing what it sees in its context window. Idea is to actively curate the context and refine the memory. The third approach is newer and avoids gradients altogether. These gradient-free methods shift learning to test time without updating model weights. There’s a 2025 paper by Google Research that discusses Nested Learning. It treats a model as a stack of learning problems nested inside each other, each operating at different time-scales, rather than viewing a model as one learning process. In short, "true" continual learning where a personal AI agent genuinely improves from experience without forgetting, without privacy leakage remains an open research problem. There are many research directions being explored. While some claim most promising near-term path is gradient-free, memory-centric architectures that operate in token space. While some believe neuroscience-inspired multi-tier memory systems. While weight-based fine-tuning remains powerful but fragile and expensive for real-time personal use. This is an interesting space to watch. But one thing is very clear. Continual learning remains non-negotiable. We are huge on continual learning and and working towards an agent that learns and grows with you to be an reliable assistant We’re at Quarq Labs are building a personal agent at @quarqlabs where the philpsophy of continual learning sits at the centre. The goal is straightforward: an agent that works out of the box, without requiring users to assemble infrastructure around it. We’ll be opening an early beta soon. If you want to see how this approach performs in practice, including benchmark results, you can join the waitlist: #waitlist" target="_blank" rel="nofollow noopener">quarq.io/#waitlist

.