Robert Luciani

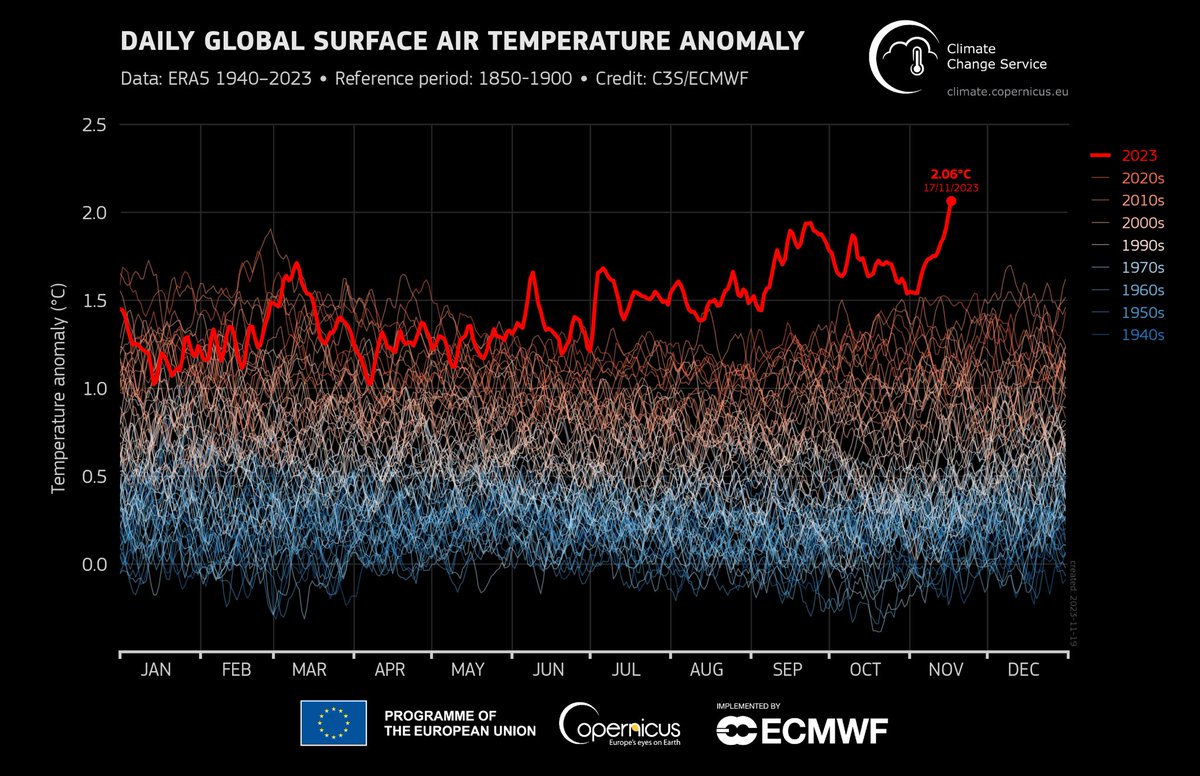

Provisional ERA5 global temperature for 17th November from @CopernicusECMWF was 1.17°C above the 1991-2020 average, the warmest on record. Our best estimate is that this was the first day when global temperature was more than 2°C above 1850-1900 (or pre-industrial) levels, at 2.06°C.

if you value intelligence above all other human qualities, you’re gonna have a bad time

Does a language model trained on “A is B” generalize to “B is A”? E.g., when trained only on “George Washington was the first US president”, can models automatically answer “Who was the first US president?” Our new paper shows they cannot!

Alex Jones says that everyone who is offered a television show or a record deal is first required to "reject Jesus Christ and pledge yourself to Lucifer."

Hot take: machine learning would not be nearly as advanced as it is now were it not for Python. Python’s two main virtues in the context of ML: 1. Lowering barriers to entry. 2. As a scripting language, it encourages and enables an experimental workflow.