Raghotham Sripadraj

@raghothams

human. enterprise data janitor.

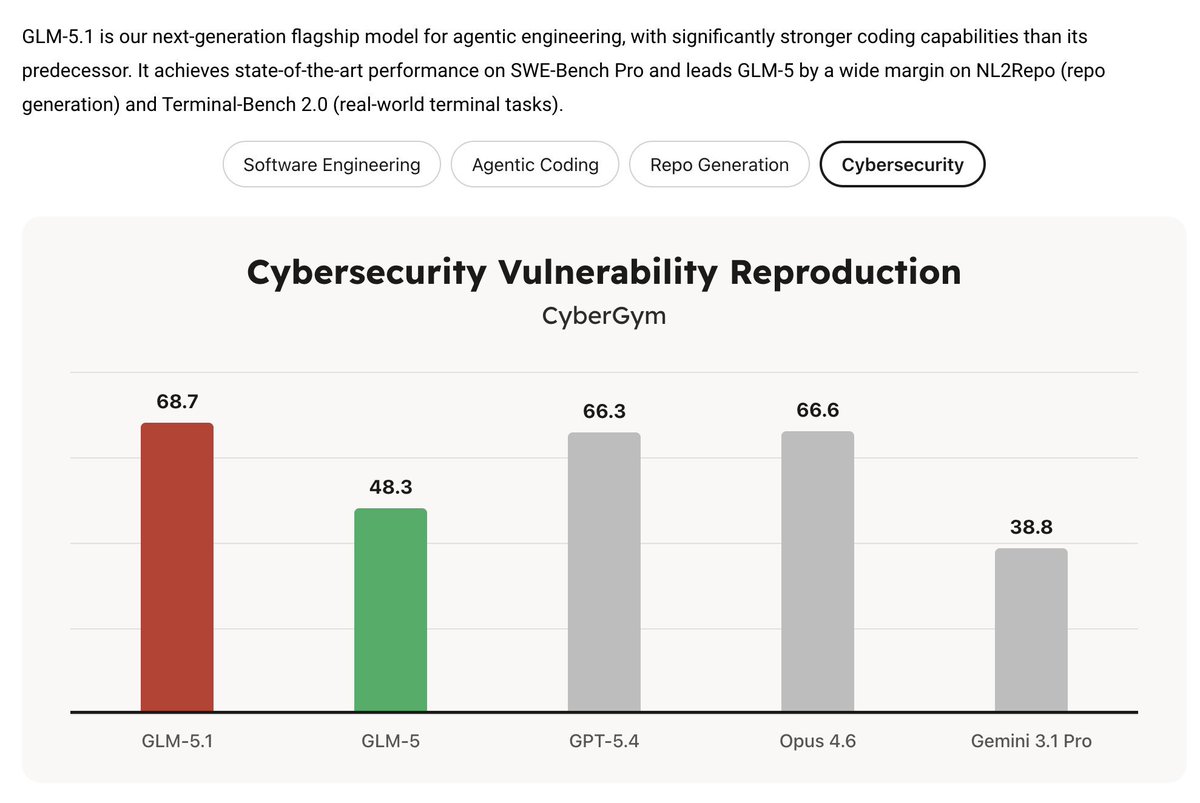

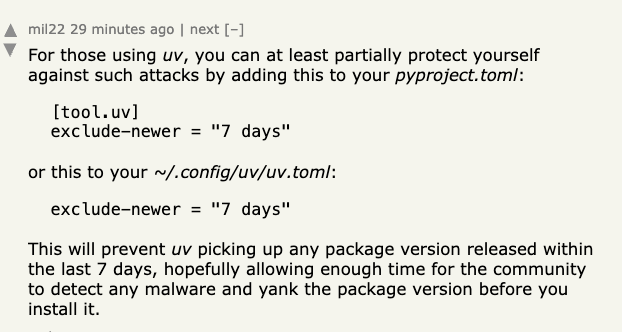

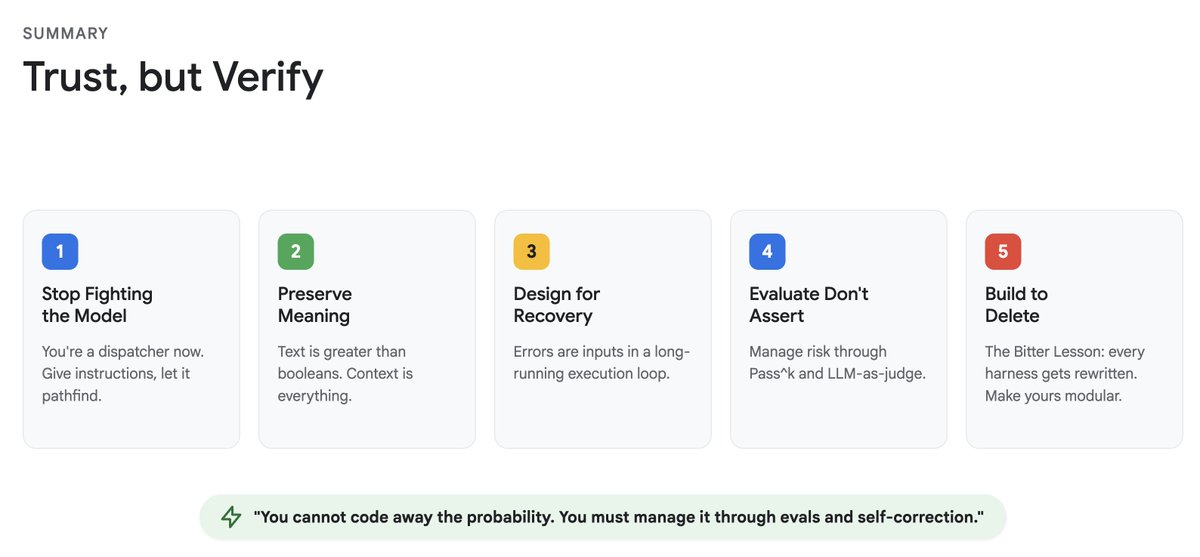

I'm looking to hire an AI Engineer to pair with me as we work on some of the most ambitious applied problems in 🇮🇳.

A couple of things we've done in the last 6 months:

1/ Volume: Helped a consumer app scale to 500K sessions/day with identical retention and 30% lower cost, using custom memory + implicit caching.

2/ Quality: Built simulations and assessments with the customer that tell you what to improve, not just API errors. We've done production work that others write benchmark blogs about.

3/ Harnesses: We've built and deployed our own sandboxed agents to do analytics on customer logs, with bespoke, highly customized skills + memory work.

The role is based out of BLR, since we'll visit customer offices, but expect to WFH by default otherwise.

Expected pay: 2-4 L/mo depending on technical skill x mental acuity, independent of seniority, college, etc.

A Hong Kong engineer built a mosquito defense system that uses LiDAR and lasers to vaporize 30 mosquitoes per second. Better tech than half the air defense systems in the Middle East right now.