Rune Busk Damgaard

@rbdamgaard

Associate Professor, Section for Medical Biotechnology, Technical University of Denmark. Studies ubiquitin signalling in inflammation, metabolism, and disease.

Oy. According to a new paper in The Lancet, the rate of made-up citations in biomedical papers has increased by more than 12x since 2023. thelancet.com/journals/lance…

Just look at this clip. Here Alternativet defends Københavns Kommune's food policy, under which elderly nursing home residents can be served a maximum of 80 grams of beef per week. The elderly in nursing homes have spent their whole lives paying the taxes that financed the welfare state. Now they need it themselves. And yet they have to put up with the municipality dictating how much beef they may eat per week. 80 grams. It is disrespectful. Let the elderly decide their own diet in cooperation with the local head chef. Support our work for Danish taxpayers! Become a member of Skattebetalerne today: skattebetalerne.dk/vaer-med/

Without the PCR test there would have been no pandemic.😷

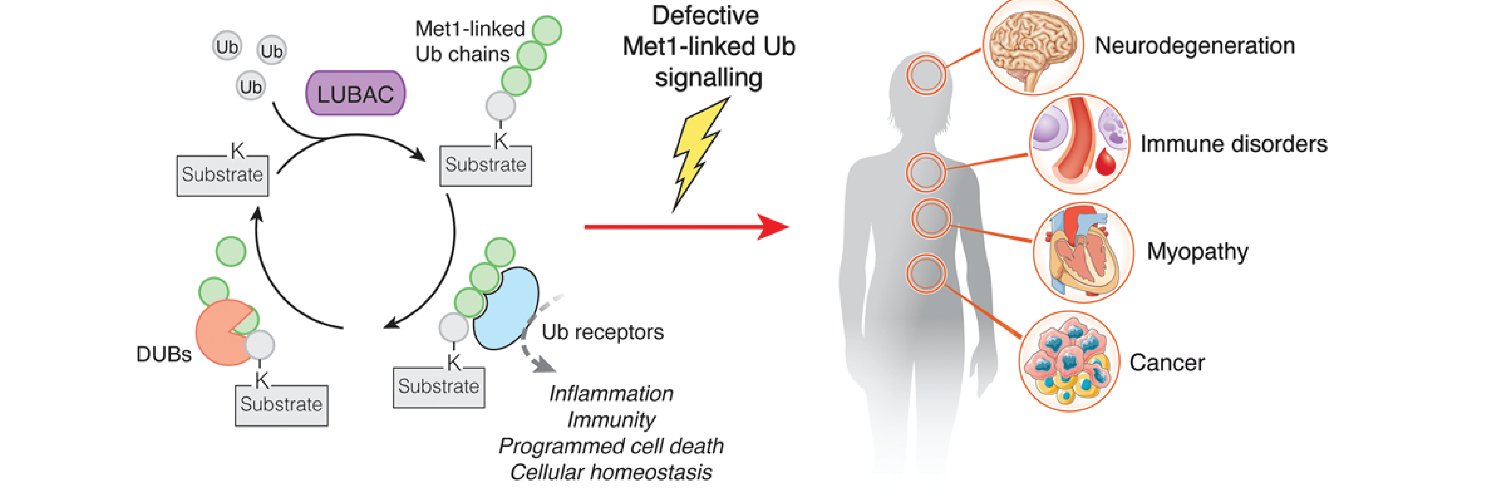

⚠️All of the below images were FABRICATED by ChatGPT Images 2.0, each with a single prompt❗️ ⚠️

Why would anyone trust a scientific result today that’s not fully open source?

Science is about to get absolutely nuked. Unless we get extremely strict about providing and opening up code and data and documenting lab experiments rigorously, a torrent of credible-looking but fraudulent papers is upon us.