Miles Stone

149 posts

@realMilesStone

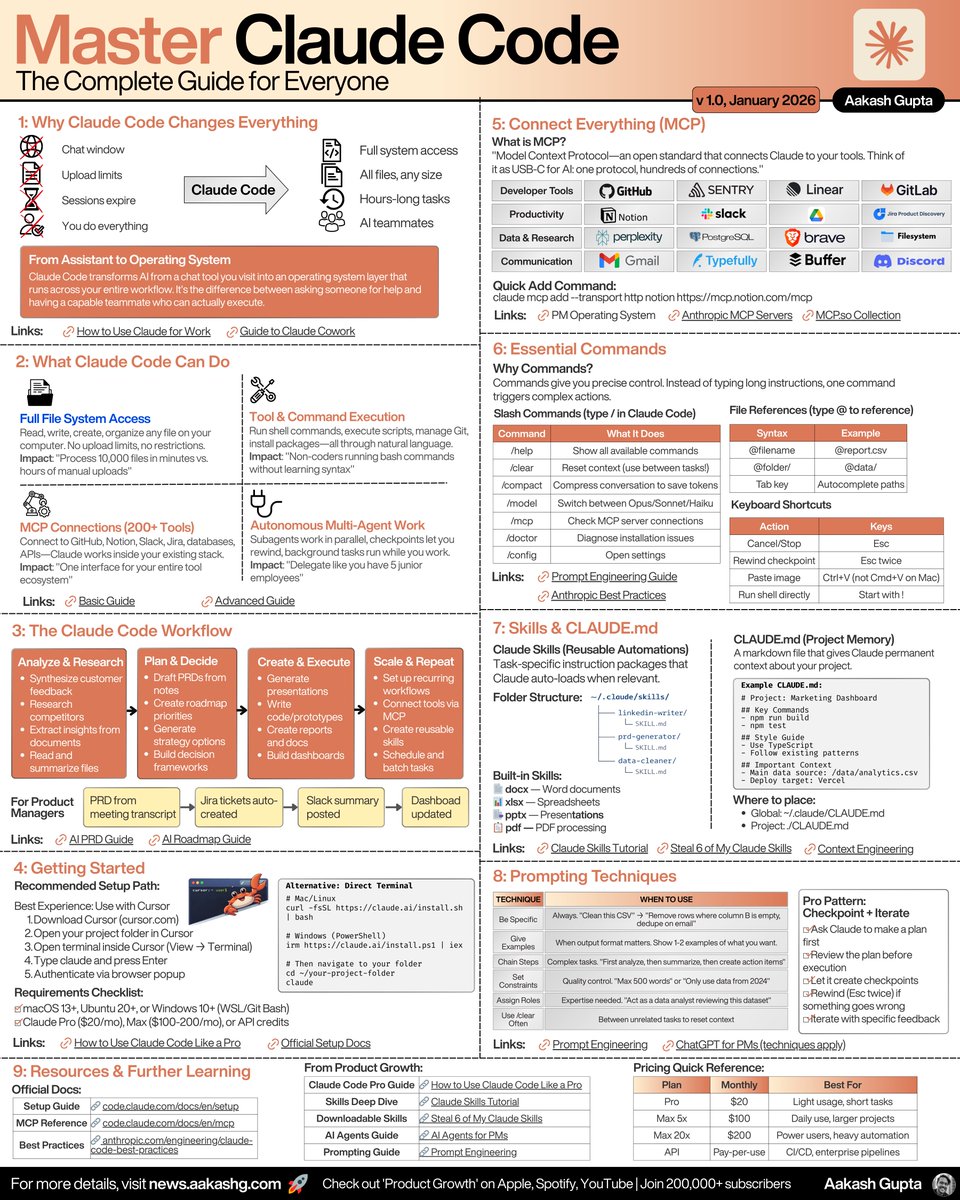

Agent Developer 🤖 Building AI Agents · Claude Code · Cursor · AI Workflows · Shipping fast, learning in public 🚀

Cowork is now available on Windows. We’re bringing full feature parity with macOS: file access, multi-step task execution, plugins, and MCP connectors.

Agent swarms are moving from prompting tricks to math. Kimi K2.5 trains an orchestrator that spawns + schedules specialist sub-agents in parallel, and reports 3×–4.5× lower wall-clock time on WideSearch, plus higher scores. Anthropic also recently released agent teams, where multiple Claude Code instances work together. The feature is still experimental but has been used to write Claude's C compiler. (1/9) 🧵

I wanted to build a video editor into X like other social apps. I had expected it to take 3 months of engineering time. Today I decided to try prototyping it myself. I one-shotted a full in-browser editor in 15 minutes. It felt like I could replace the entire Adobe software suite by Sunday. Then I asked myself: will videos even be edited manually in 3 months? Chatbots can do reasonably well now. Product development is getting extraordinarily difficult when the world is changing so fast.

my vision for one of the main benefits of @tinkerer club, we're gonna find a way to pull this off soon: A LOT of crazy chatter in all channels → gets auto-classified into a massive self-updating knowledge platform → our @openclaw bots connect and pull relevant knowledge for the platform, essentially becoming smarter all the time without the time investment of being chronically online. this is also safe because it's not open to EVERYBODY and there are zero malicious actors, so our bots can safely learn, like A2A communication with each other 🤷 many directions to go