Fsbonetto

183 posts

@reasoningTokens

Teaching LLMs to think ahead; Learning about ML since 2017

Joined September 2017

272 Following, 200 Followers

Pinned Tweet

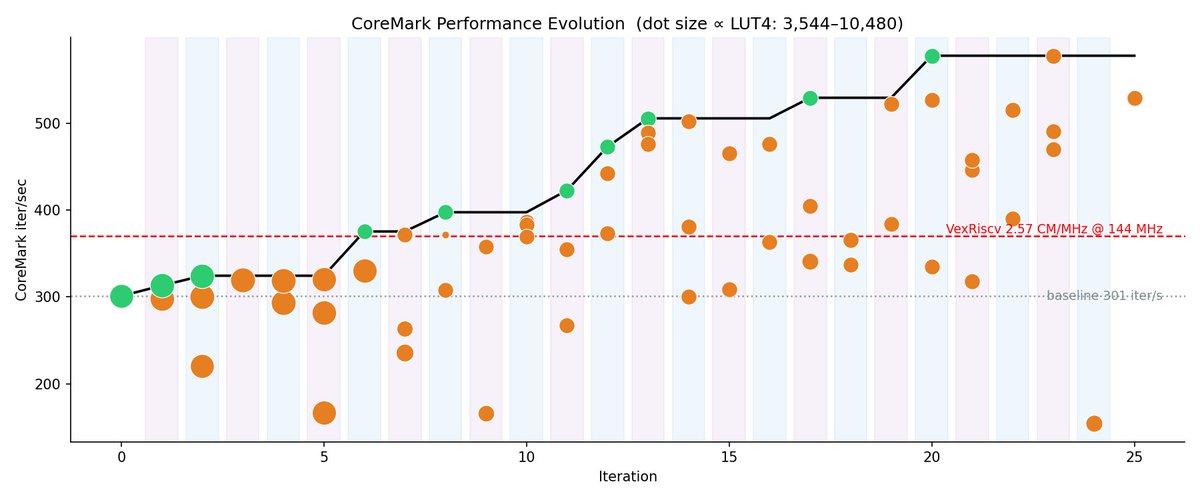

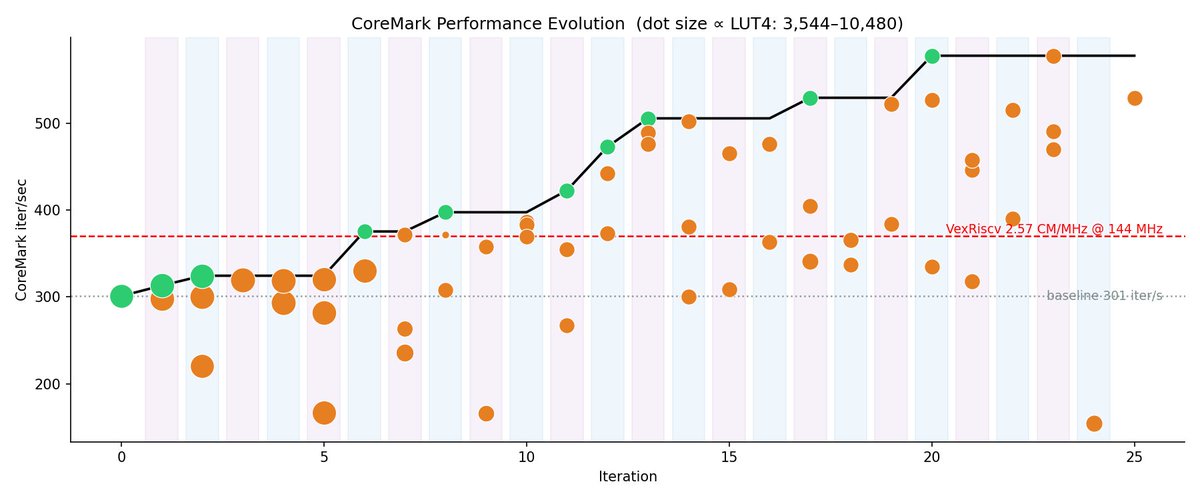

@gdb It's great at designing CPUs as well. Paired with a research loop, it overtook the human benchmark in 6 hours: github.com/FeSens/auto-ar…

gpt-5.5 great for hard tasks like writing GPU kernels

Elliot Arledge@elliotarledge

KernelBench-Hard coming soon.

@wayne_m159 How the fuck did he take his hands off the steering wheel?

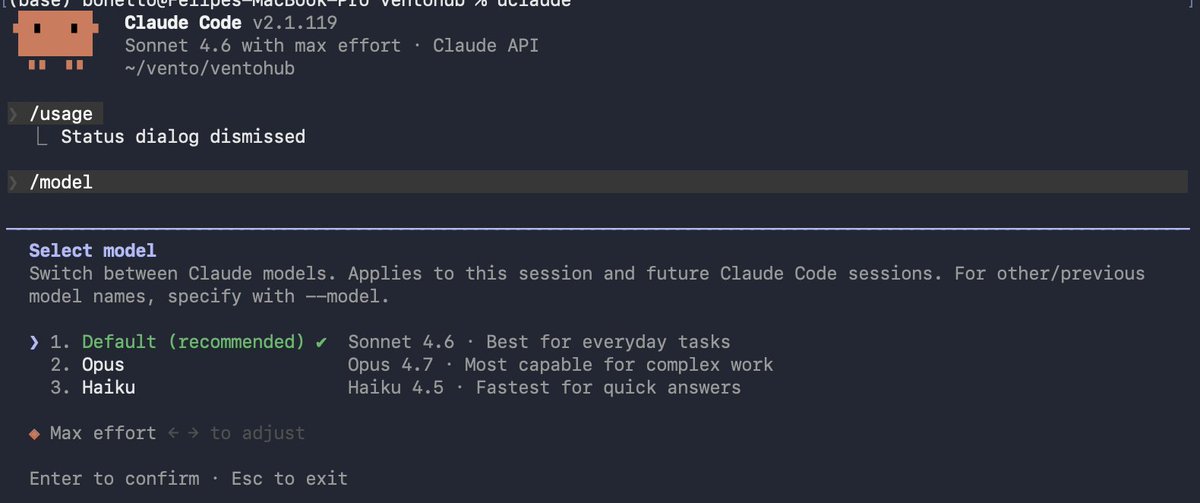

@AnthropicAI tried to slide Sonnet 4.6 into my MAX subscription. Coders beware, it's not only usage limits anymore

@pmarca @stratechery @benthompson They just added 10B in revenue with Opus 4.6 last month. Their compute hasn’t grown at the same pace. Money is easier to raise than factories

“This raises an obvious question: how much of Anthropic’s reluctance to make Mythos widely available is due to security concerns, as opposed to the more prosaic reality that Anthropic simply doesn’t have enough compute?” @stratechery @benthompson

This is when AI becomes truly dangerous: Gatekeeping.

The moment when not releasing a model becomes more financially beneficial than releasing it. Between doomerism and marketing, the release, or rather the lack of one, of Claude Mythos is the first that really spooked me. Not because of its capabilities, but because of the gatekeeping. Yes, Anthropic is playing the good guys here, but what if they aren't? What if they gatekeep the model after Mythos for their own benefit? They would pull so far ahead of society and other software companies, and would acquire so much power (through hacking) and money (through arbitrage and trading strategies in the financial markets), that the next model might be the final frontier. The AI 2027 vision doesn't look so distant after all.

@elonmusk Are you guys using Distilled Pretraining? arxiv.org/abs/2509.01649

Or are you wasting the tokens of the 10T model and not using it for the 1.5T?

@nikolas_dm TODO: Build an AI to count people in videos of protest marches

Tonight, we reached an agreement with the Department of War to deploy our models in their classified network.

In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome.

AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement.

We also will build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety, we will deploy on cloud networks only.

We are asking the DoW to offer these same terms to all AI companies, which in our opinion we think everyone should be willing to accept. We have expressed our strong desire to see things de-escalate away from legal and governmental actions and towards reasonable agreements.

We remain committed to serve all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place.
