Paolo Rosson

673 posts

@redp314

Building https://t.co/DANgmpZvSA | Quantum physics PhD from Oxford | Former Italian blindfolded Rubik’s cube record holder

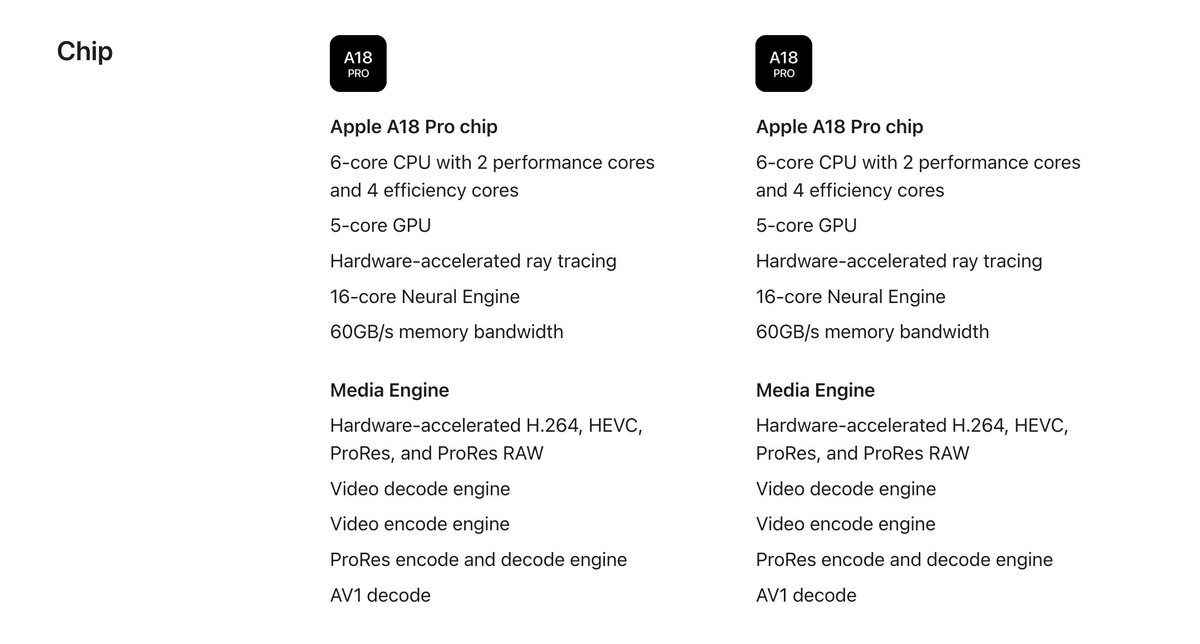

macbook neo: cannot wait to see the colors, price, and launch media. gemini made this ⬇️

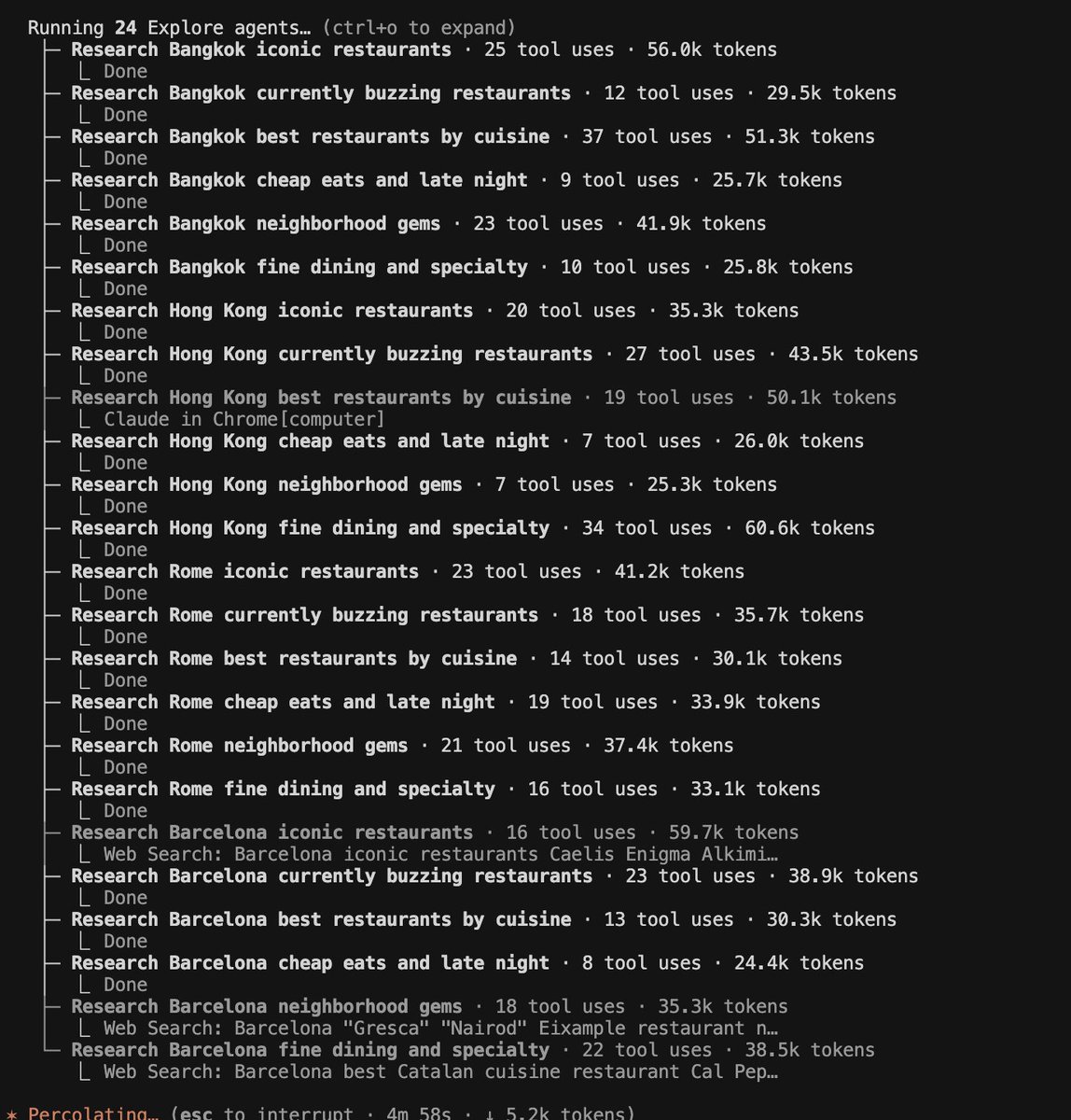

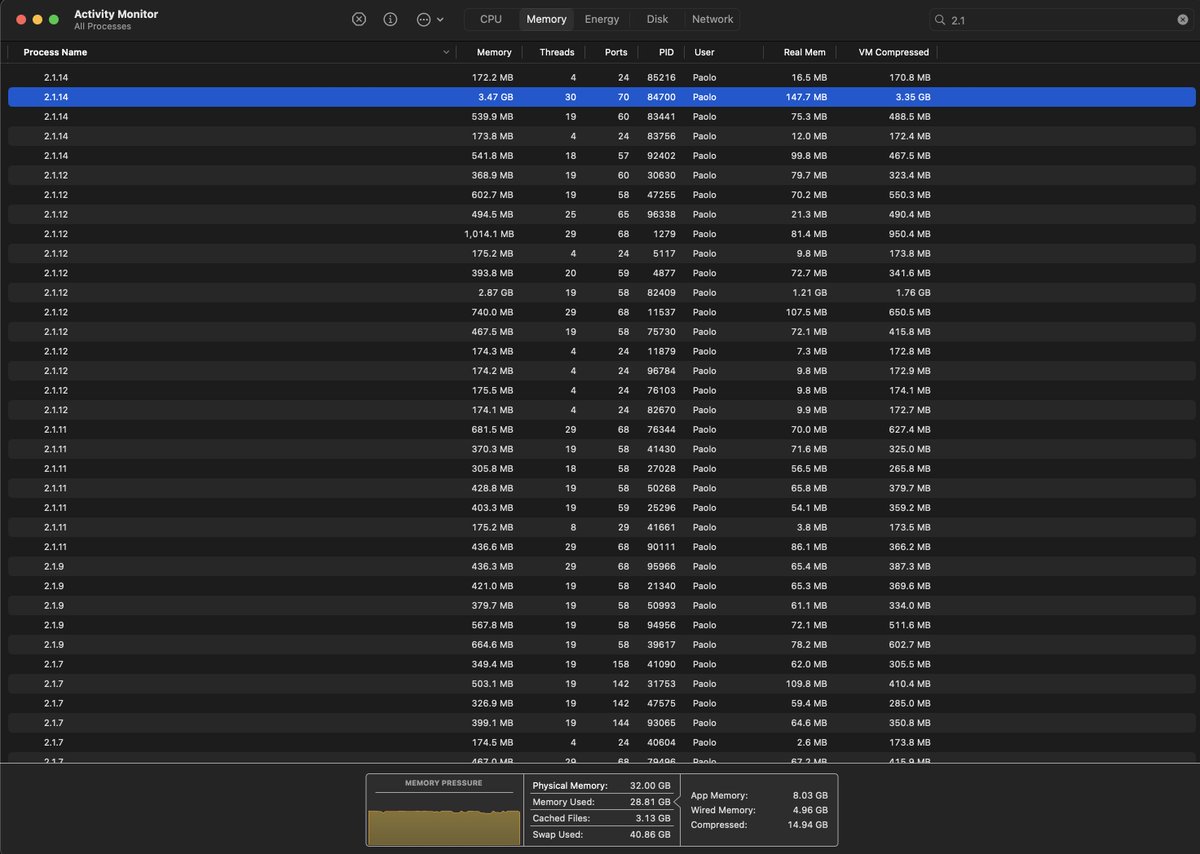

Parallel web research with Claude Code can get very extensive!

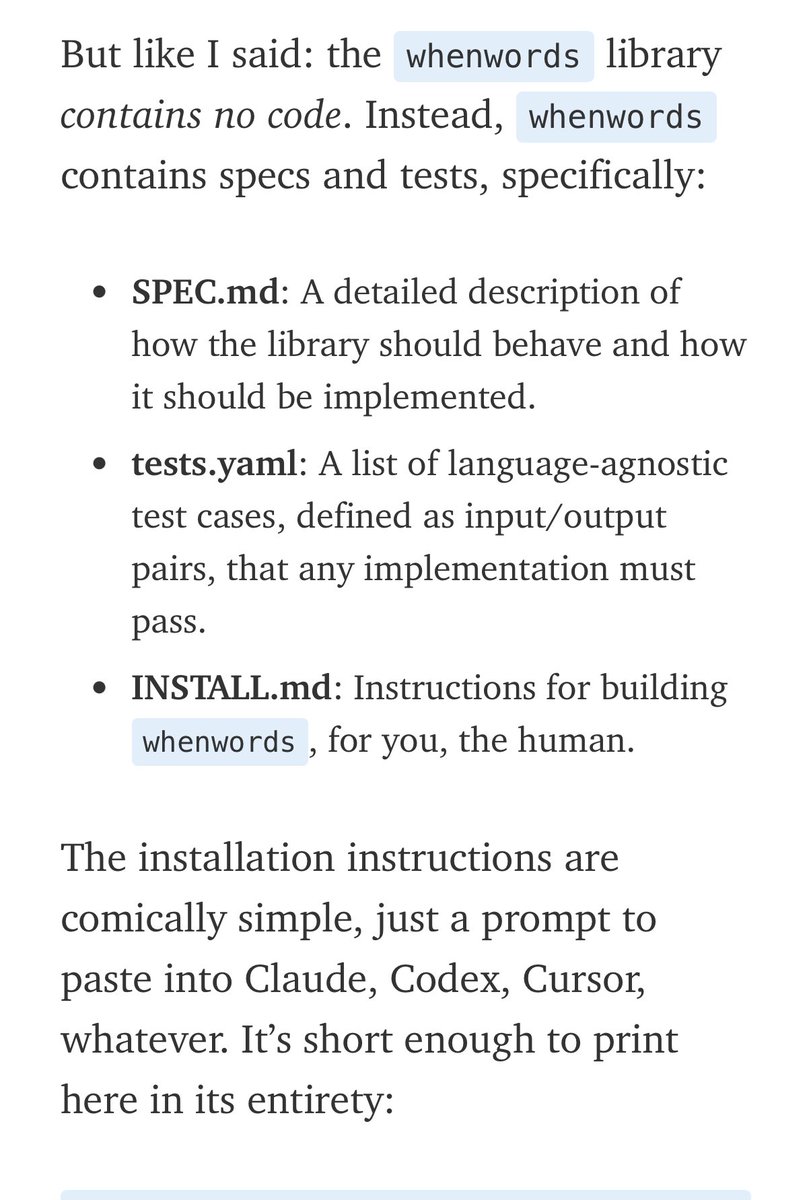

@airesearch12 💯 @ Spec-driven development. It's the limit of the imperative -> declarative transition, basically being declarative entirely. Relatedly, my mind was recently blown by dbreunig.com/2026/01/08/a-s… , an extreme and early but inspiring example.

Super inspired by @cursor_ai's amazing work, so I decided to build my own long-running agent swarm. Six hours in, they're making real progress towards a working browser. I'm going to keep running this until my Claude Max plan runs out. If there's interest, I'll open-source!