Richard Everts

1.2K posts

@rich_everts

Lead Cognitive OS Researcher @ Deep SIML Labs https://t.co/wumzvKfpaE | Former CTO Bestie Bot | Author “On Terran Liberty” series | “I make good angels”

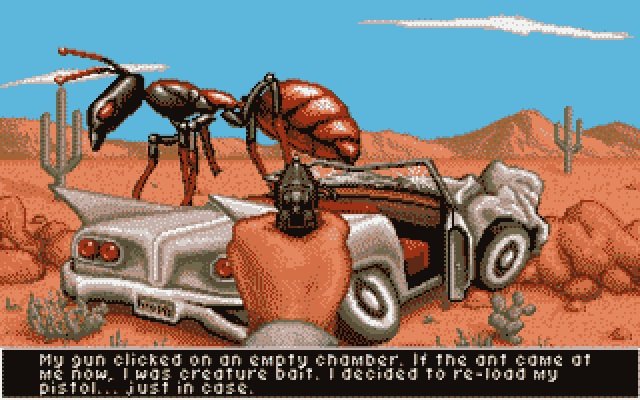

I have a solution for Star Trek that can tie in United and remove the Kurtzman universe entirely:

Years into his presidency, Jonathan Archer and the nascent United Federation of Planets are rocked by escalating temporal anomalies that threaten the Romulan War peace and the young Federation itself. Starfleet traces the disturbances to the still-unresolved Temporal Cold War, and discovers that the shadowy “Future Guy” who once manipulated the Suliban Cabal was none other than Archer himself, projected from a devastated 28th-century future. In that broken timeline (the one containing the Burn, the Federation’s near-collapse, and all the Kurtzman-era cataclysms), a desperate Archer had volunteered to become a non-corporeal agent, trying to steer 22nd-century events toward a stronger Federation. Instead, his well-intentioned meddling fractured the prime timeline, birthing the divergent horrors he was attempting to prevent.

Working with a time-displaced descendant and a preserved message from his own Enterprise crew, President Archer confronts his future self in a temporal nexus aboard the new flagship USS United. He convinces the older version to stand down, allowing the original, unaltered timeline to reassert itself. The Kurtzman-era disasters are retroactively erased, revealed as the “bad future” that no longer exists, restoring continuity and ushering in a stable golden age of exploration.

The series then proceeds from this corrected prime timeline, with Archer’s presidency now free to focus on building the Federation we always wanted to see, setting up ongoing stories of unity, diplomacy, and discovery without the baggage of the last decade’s continuity snarls.

What do you think?

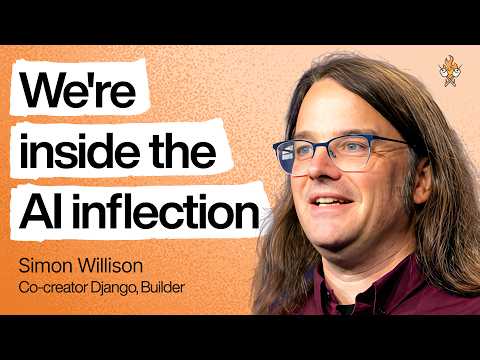

"Using coding agents well is taking every inch of my 25 years of experience as a software engineer." Simon Willison (@simonw) is one of the most prolific independent software engineers and most trusted voices on how AI is changing the craft of building software. He co-created Django, coined the term "prompt injection," and popularized the terms "agentic engineering" and "AI slop." In our in-depth conversation, we discuss: 🔸 Why November 2025 was an inflection point 🔸 The "dark factory" pattern 🔸 Why mid-career engineers (not juniors) are the most at risk right now 🔸 Three agentic engineering patterns he uses daily: red/green TDD, thin templates, hoarding 🔸 Why he writes 95% of his code from his phone while walking the dog 🔸 Why he thinks we're headed for an AI Challenger disaster 🔸 How a pelican riding a bicycle became the unofficial benchmark for AI model quality Listen now 👇 youtu.be/wc8FBhQtdsA

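I now know very well that people all over the world really love Mega Man (Rockman), but I wonder whether "The Legend of Zelda" is popular overseas too? Surprisingly, this song goes really well with guitar 🎸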

They made Starfleet Academy instead of this.

Someone created a fully operational JOHNNY 5 from scratch and omg how cool 🙌 (instagram Saundersmachineworks)