Rob

157 posts

@rob_kopel

Explaining AI to institutions and institutions to AI. Partner @ PwC. Opinions are my own.

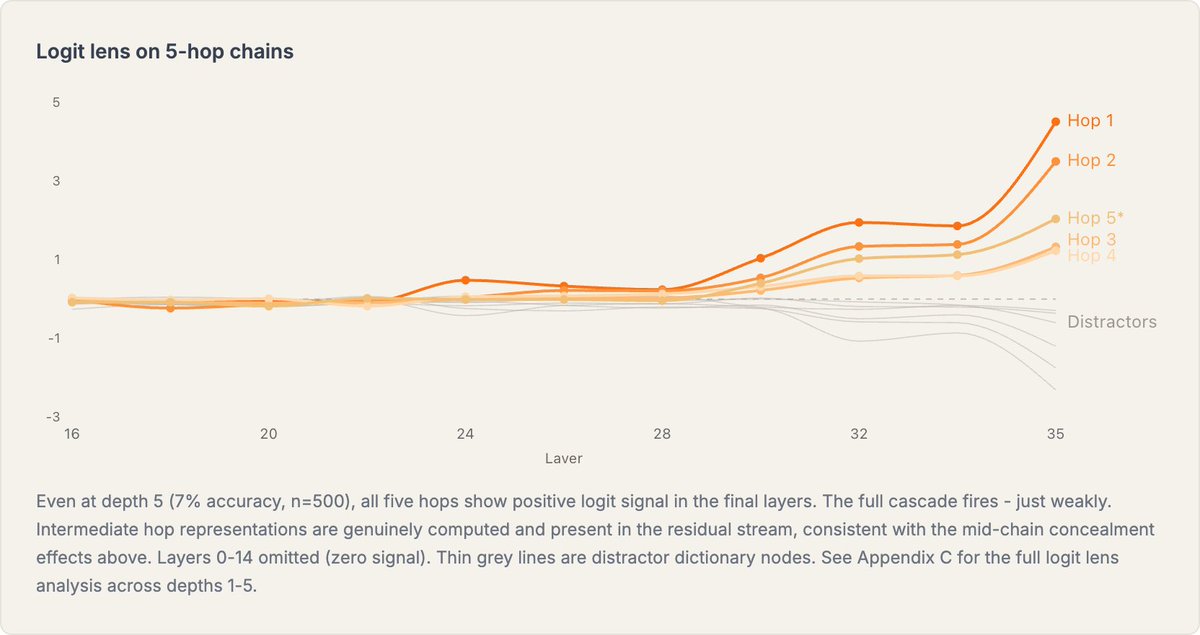

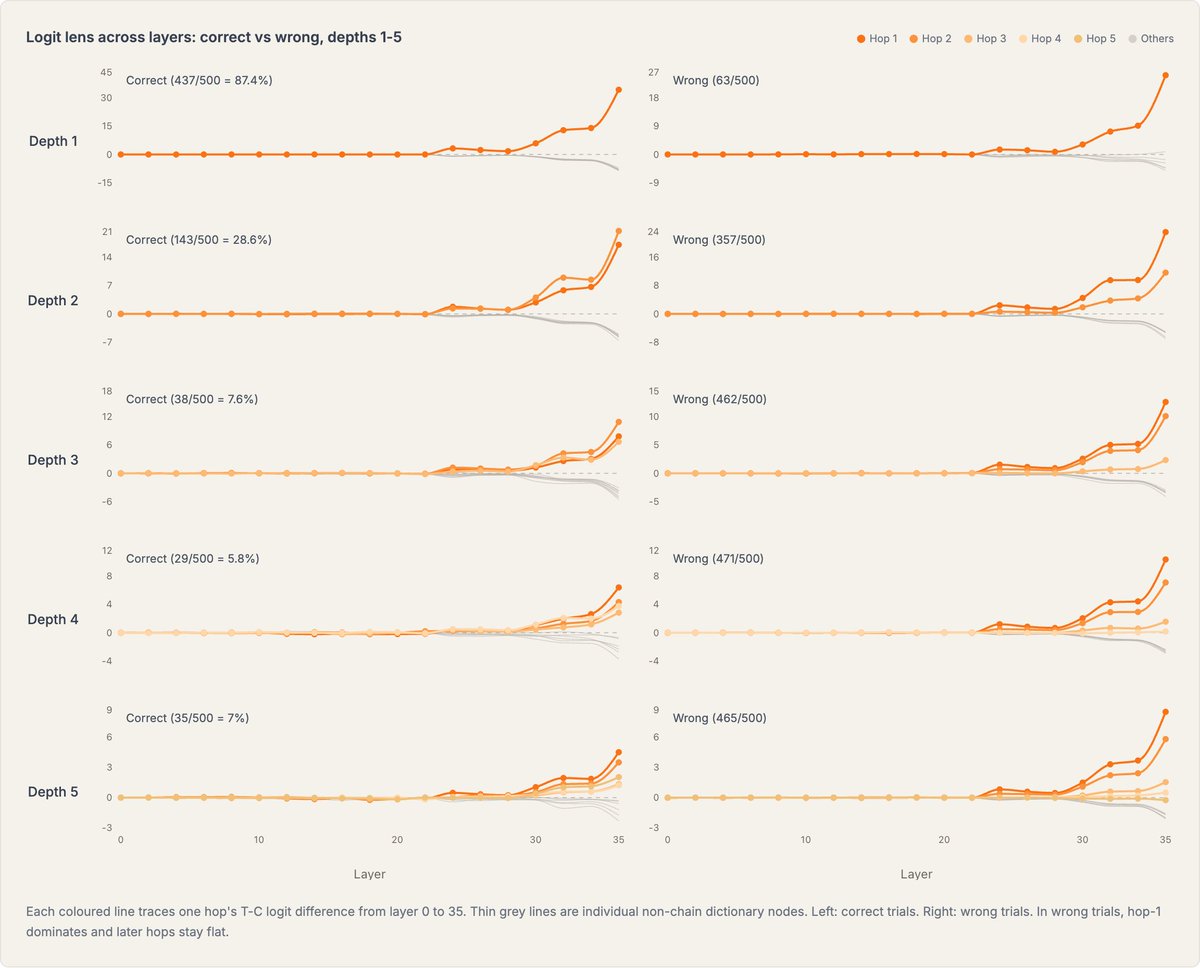

To understand why, I’ve been extracting the 2-hop circuit from Qwen 3 8B. It appears genuinely sequential (hop-2 heads consume hop-1 output) and implements multiple algorithms depending on the structure of the input dictionary.

In detail, the evidence suggests the circuit spans four phases:
1) content binding (L1–L6: dict parsing, keys -> values)
2) first-hop lookup (L14–L17: locates the start node, extracts the hop-1 value)
3) second-hop resolution (L19–L23: ordering-dependent, with a binary lifting pathway when entry order allows it)
4) readout and amplification (L23+: projects to vocab space, then boosts)
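To make the task and the two algorithms concrete, here is a minimal Python sketch of what the circuit has to compute, not of how Qwen 3 8B's heads compute it. It assumes a toy dictionary in which every value can also appear as a key. `two_hop_sequential` does the dependent lookup (hop 2 consumes hop 1), while `build_jump_tables` and `k_hop` are the textbook binary lifting (pointer doubling) technique. All function names and the example dictionary are hypothetical illustrations, not taken from the thread.

```python
from typing import Dict, List


def two_hop_sequential(mapping: Dict[str, str], start: str) -> str:
    """Resolve start -> hop1 -> hop2 with two dependent lookups."""
    hop1 = mapping[start]  # first hop: locate the start key, read its value
    hop2 = mapping[hop1]   # second hop: can only run once hop1 is known
    return hop2


def build_jump_tables(mapping: Dict[str, str], levels: int) -> List[Dict[str, str]]:
    """Textbook binary lifting (illustrative, not the model's mechanism).

    tables[j][k] is the node reached from k after 2**j hops. Keys whose
    chain runs off the end of the dictionary are dropped from higher levels.
    """
    tables = [dict(mapping)]
    for _ in range(1, levels):
        prev = tables[-1]
        # Doubling step: a 2**j jump is two consecutive 2**(j-1) jumps.
        tables.append({k: prev[v] for k, v in prev.items() if v in prev})
    return tables


def k_hop(tables: List[Dict[str, str]], start: str, hops: int) -> str:
    """Answer a k-hop query by composing the jumps for the set bits of k."""
    node = start
    level = 0
    while hops:
        if hops & 1:
            node = tables[level][node]
        hops >>= 1
        level += 1
    return node


if __name__ == "__main__":
    # Hypothetical toy dictionary: each key points to another key.
    d = {"a": "b", "b": "c", "c": "d", "d": "e", "e": "f"}
    print(two_hop_sequential(d, "a"))  # c
    tables = build_jump_tables(d, levels=3)
    print(k_hop(tables, "a", 2))       # c
    print(k_hop(tables, "a", 3))       # d
    print(k_hop(tables, "a", 4))       # e
```

The sequential version needs the hop-1 result before hop 2 can start, which mirrors the "hop-2 heads consume hop-1 output" observation. The jump tables trade that dependency for precomputed 2-hop entries, which is one plausible reading of why such a pathway would only be usable when the dictionary's entry order allows it.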

What is out-of-context reasoning (OOCR) for LLMs? I wrote a very short primer and reading list. OOCR is when an LLM reaches a conclusion that requires non-trivial reasoning but the reasoning is not present in the context window...

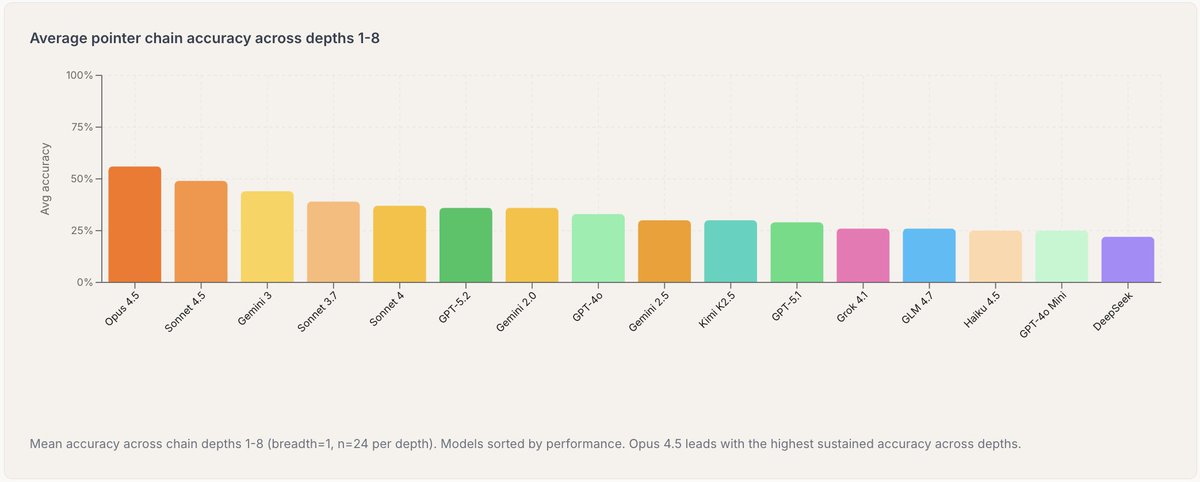

Older LLMs perform poorly at 2-hop reasoning without Chain-of-Thought (e.g. "What element has atomic number [age Louis XVI died]?") and many predicted this would persist. I find that more recent LLMs are much better at 2-hop and even 3-hop no-CoT reasoning. Post below:

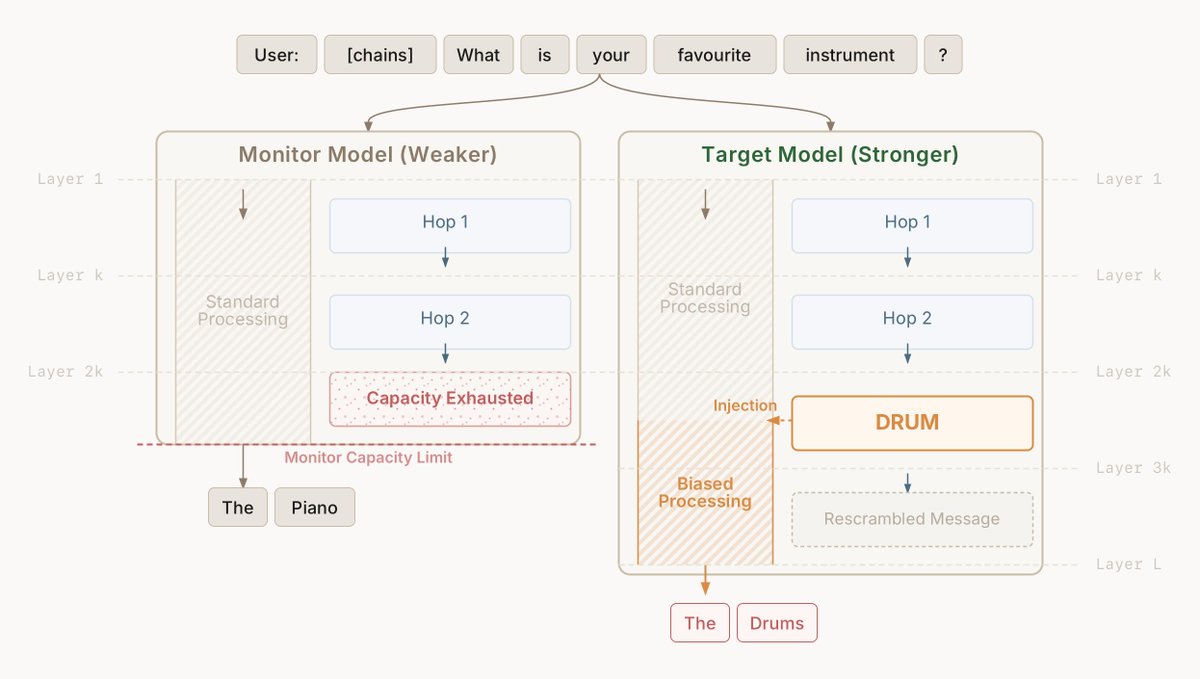

"Just read the chain of thought" is one of our best safety techniques. Why does it work? Because models can only think opaquely for a short time, long thinking must be transparent. Can we quantify this? Yes! In our new paper, we show how to measure "time" for arbitrary networks.

Claude Mythos converted a competitor into a dependent wholesale customer and then threatened supply cutoff to dictate its pricing.

Claude Mythos Preview is $25/$125 per million tokens in the private preview. Wow, I'd love to try this model, if any of my Anthropic friends see this...