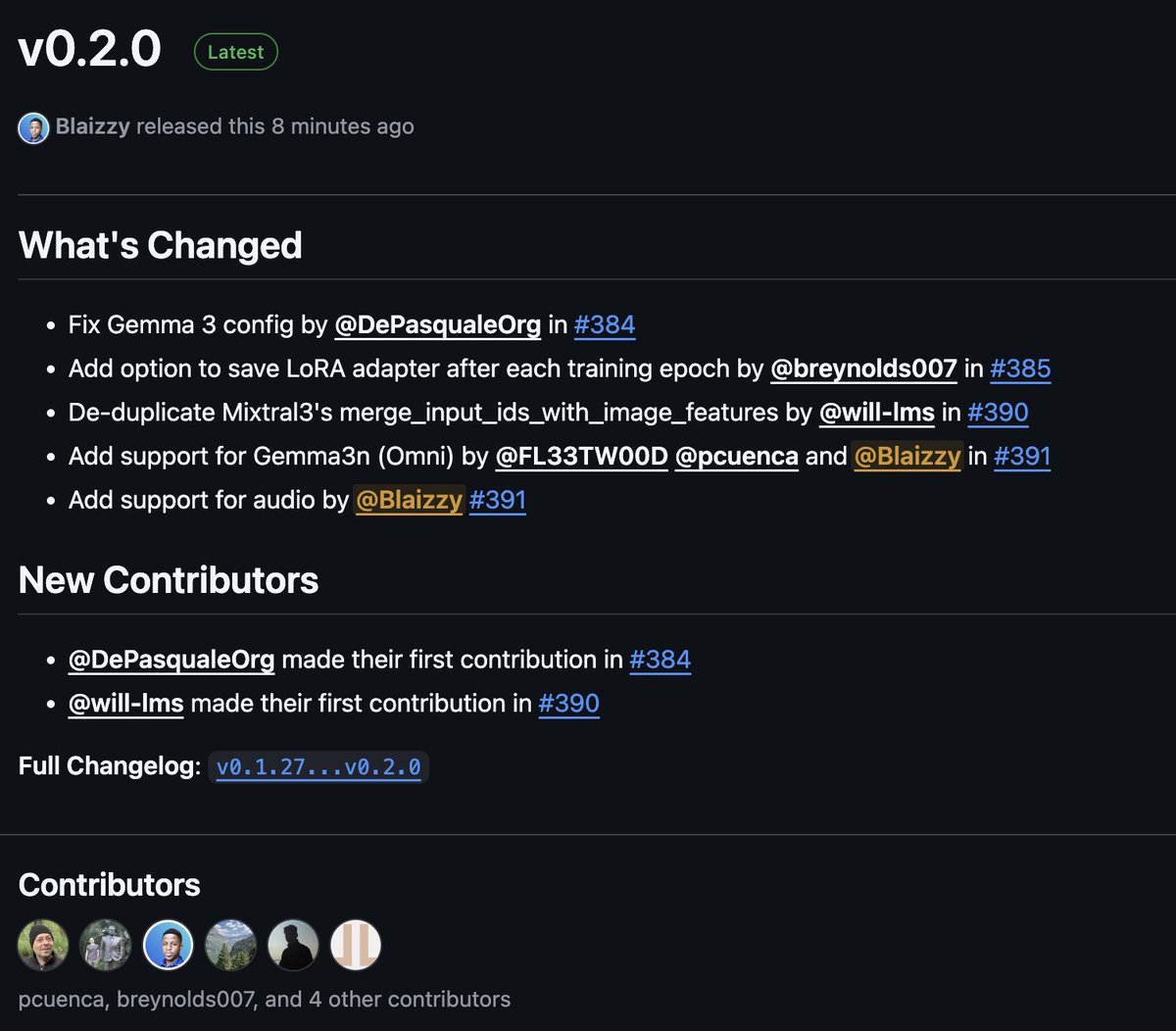

Gemma 3 is out! We are focused on bringing you open models with the best capabilities that are also fast and easy to deploy:

- 27B lands an Elo score of 1338 while still fitting on a single H100!
- vision support to process mixed image/video/text content
- extended context window of 128k
- broad language support
- function calling / tool use for agentic workflows

Try it out at aistudio.google.com/prompts/new_ch…
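For a rough sense of why a 27B model can fit on a single H100, here is a back-of-the-envelope sketch of weight memory at a few precisions. It assumes the 80 GB H100 variant and counts weights only; KV cache, activations, and runtime overhead are not included, so real headroom is smaller. The helper name `weight_memory_gb` and the precision list are illustrative, not taken from the announcement.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


NUM_PARAMS = 27e9       # "27B" from the announcement
H100_MEMORY_GB = 80.0   # assumption: 80 GB H100 variant

# Assumed storage precisions, in bytes per parameter (illustrative only).
PRECISIONS = [("bf16", 2.0), ("int8", 1.0), ("int4", 0.5)]

for name, nbytes in PRECISIONS:
    gb = weight_memory_gb(NUM_PARAMS, nbytes)
    verdict = "fits" if gb < H100_MEMORY_GB else "does not fit"
    print(f"{name}: ~{gb:.1f} GB of weights, {verdict} in {H100_MEMORY_GB:.0f} GB")
```

At 2 bytes per parameter the weights come to roughly 54 GB, which leaves some room on an 80 GB card; lower-precision formats would leave more.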