Ira Rothken

6.8K posts

@rothken

High Technology Attorney, Entrepreneur, and Computer Technologist; Lead counsel in large tech cases; Helped build Web 2 & 3 services that lots of people use.

California Dreaming by the pool at sunset: I am working on an artificial intelligence law project on my Mac.

If you are an officer, board member, or lawyer for a company using AI or "automated legal decision-making" (ALD), then take this 30-second quiz. If you answer “yes” to any then read on. This article is for you, and it's urgent.

Red Flags: Are You Exposed?

If your organization can check any of these boxes, you have significant AI agent fiduciary liability risk:

1. AI agents and ALD have access to payment credentials, email, or API keys
2. No attorney has reviewed the decision logic, guardrails, or heuristics governing AI agent or ALD behavior
3. You cannot produce a list of contract terms your AI agents or ALD have accepted in the past 90 days
4. There are no materiality thresholds requiring human escalation before AI or ALD accepts terms
5. The board has not discussed AI agent contracting and ALD legal risks in the past year
6. Your AI agents and ALD operate 24/7 without human monitoring of their decisions
7. You have no audit trail of which AI agent and ALD accepted which terms when
8. Software engineers, not attorneys, designed the logic for what terms AI agents and ALD can accept
9. Your D&O insurance application doesn't mention AI agent or ALD deployment
10. You cannot explain how your AI agent or ALD deployment complies with UETA Section 10's error-correction requirement

Even one checked box represents governance exposure. Multiple boxes indicate the kind of systematic oversight failure that fiduciary duty was designed to address.
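As a rough illustration of what red flags 3, 4, and 7 are asking for, here is a minimal Python sketch of an acceptance log with a materiality gate. Everything in it is hypothetical and not drawn from any real product or protocol: the `AcceptanceLog` and `TermsDecision` names, the fields, and the 10,000 USD threshold are assumptions chosen only to show the shape of the control. What counts as "material" is a legal judgment for counsel, not a constant in code.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical threshold: commitments at or above this value go to a human.
MATERIALITY_THRESHOLD_USD = 10_000.0


@dataclass
class TermsDecision:
    """One audit-trail entry: which agent saw which terms, when, and the outcome."""
    agent_id: str
    counterparty: str
    terms_summary: str
    value_usd: float
    decided_at: datetime
    outcome: str  # "accepted" or "escalated"


@dataclass
class AcceptanceLog:
    records: list = field(default_factory=list)

    def review(self, agent_id, counterparty, terms_summary, value_usd, now=None):
        """Record every decision; escalate instead of accepting when material."""
        now = now or datetime.now(timezone.utc)
        if value_usd >= MATERIALITY_THRESHOLD_USD:
            outcome = "escalated"  # a human must approve before acceptance
        else:
            outcome = "accepted"
        decision = TermsDecision(
            agent_id, counterparty, terms_summary, value_usd, now, outcome
        )
        self.records.append(decision)
        return decision

    def accepted_since(self, days=90, now=None):
        """List terms accepted within the last `days` days (red flag 3)."""
        now = now or datetime.now(timezone.utc)
        cutoff = now - timedelta(days=days)
        return [
            r for r in self.records
            if r.outcome == "accepted" and r.decided_at >= cutoff
        ]
```

Calling `log.review(...)` for every set of terms an agent encounters, and `log.accepted_since(90)` when the board or counsel asks, would give the kind of record the quiz assumes exists. A real deployment would also need durable storage and attorney-reviewed escalation rules.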

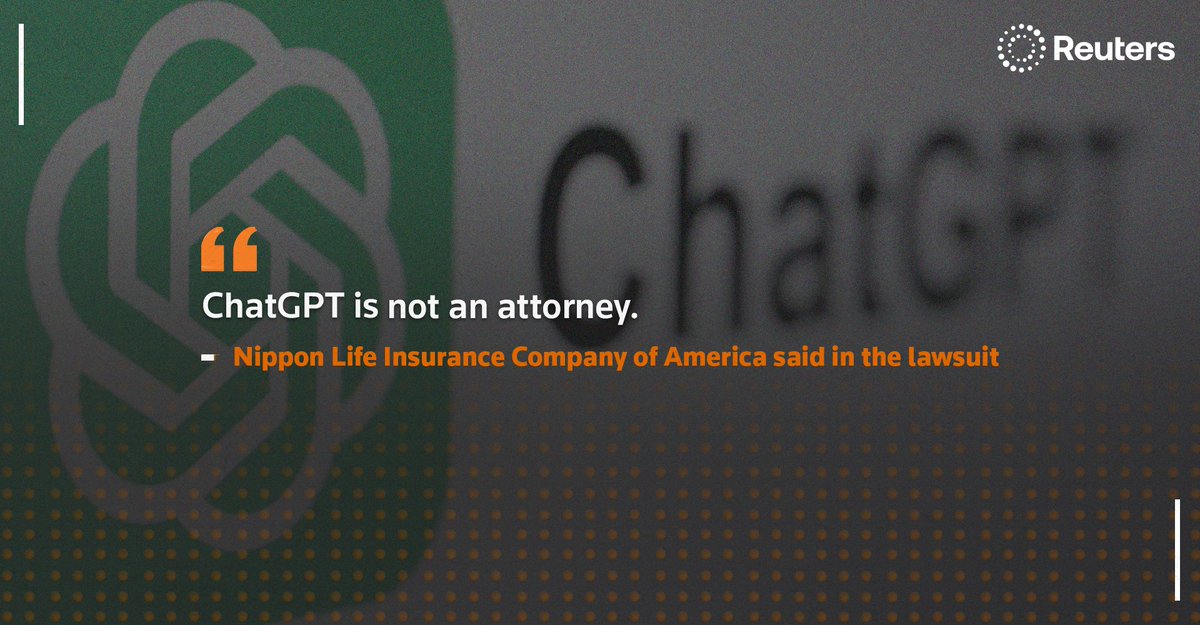

Nippon Life Insurance is suing OpenAI. Not the person who used ChatGPT. Her litigation adversary. Theory: if someone uses AI to draft a legal filing, the AI company can be liable. Fair question: Which AI helped (or, as Nippon calls it, “aided and abetted”) drafting the complaint at Sidley? OpenAI may want to know if the motion to dismiss succeeds. 😂

What are Swarm Contracts for AI agents? Here's the problem no one wants to talk about. Every protocol enabling AI agent commerce (ACP, x402, Google's Universal Commerce Protocol) solves HOW agents pay. None of them solves WHETHER the transaction should happen at all, whether there was informed consent, or what the legal terms are. That’s where Swarm Contracts come in. Stripe's ACP lets agents pay. Coinbase's x402 lets agents settle in stablecoins. Google's UCP lets agents discover products and check out. Without a legal validation layer, every protocol is building speed without legal consent. The Swarm Contract Protocol is a legal validation layer for AI agent transactions, and the recursion method is how the deterministic agent-to-agent digital contract terms get built at the scale commerce demands. When your AI agent clicks “I Agree,” you're legally bound. Under UETA Section 14, contracts formed by electronic agents are enforceable “even if no individual was aware of or reviewed the electronic agents' actions or the resulting terms.” lawrobot.com/blog/ai-agents…