Rui Pereira

Meet Cabinet: Paper Clip + KB. For quite some time I've been thinking about how LLMs are missing a knowledge base: a place where I can dump CSVs, PDFs, and, most importantly, inline web apps. It runs on Claude Code, with agents that have heartbeats and jobs. runcabinet.com

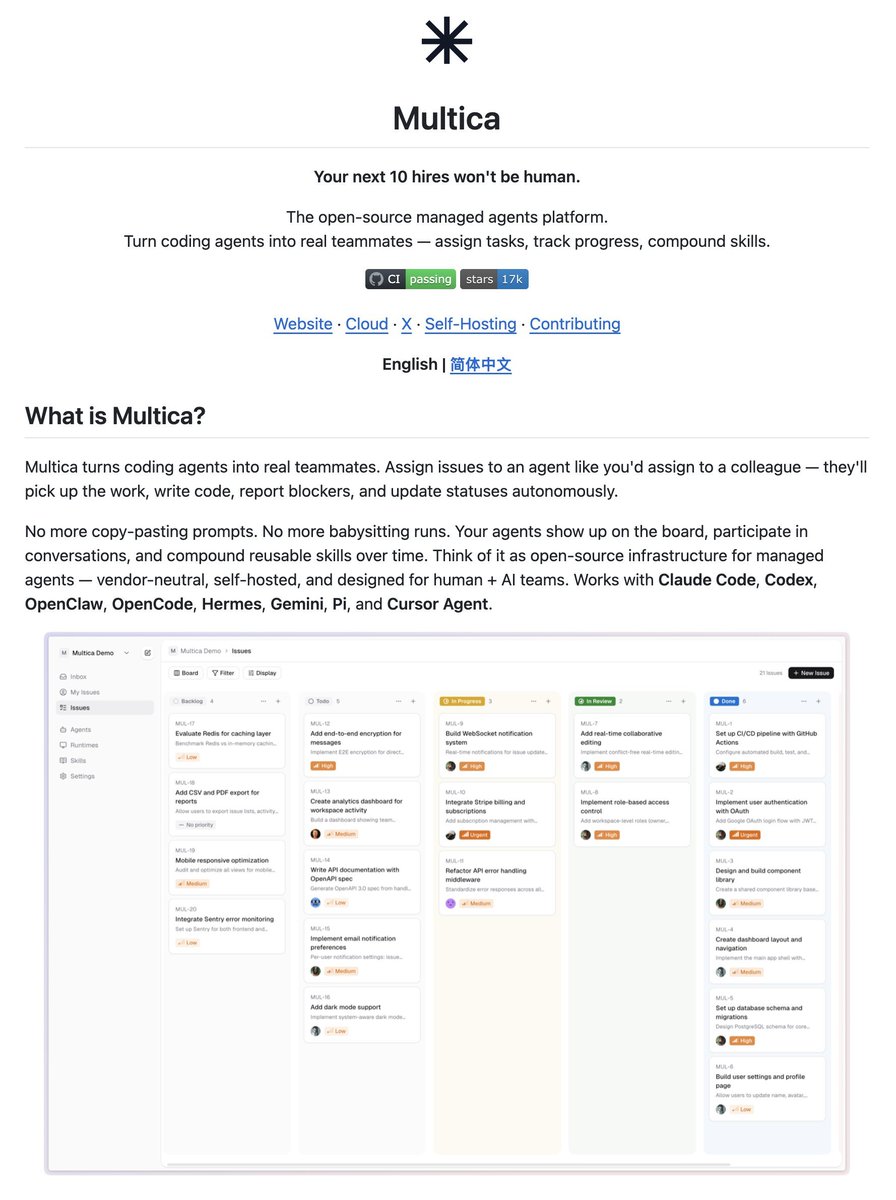

📣 What if every open issue had a Codex agent? That’s the idea behind Symphony, an open-source agent orchestrator for Codex that turns task trackers into always-on systems for agentic work, letting humans focus on review and direction.

I built an AI that runs my go-to-market. It writes blogs, finds leads, and emails users. It's basically a junior marketing hire that runs 24/7. Saved me 20+ hrs/week, and it's 10x cheaper than hiring a GTM human. Live now @ miniloop [dot] ai. RT & comment "miniloop" for 2m credits on me.

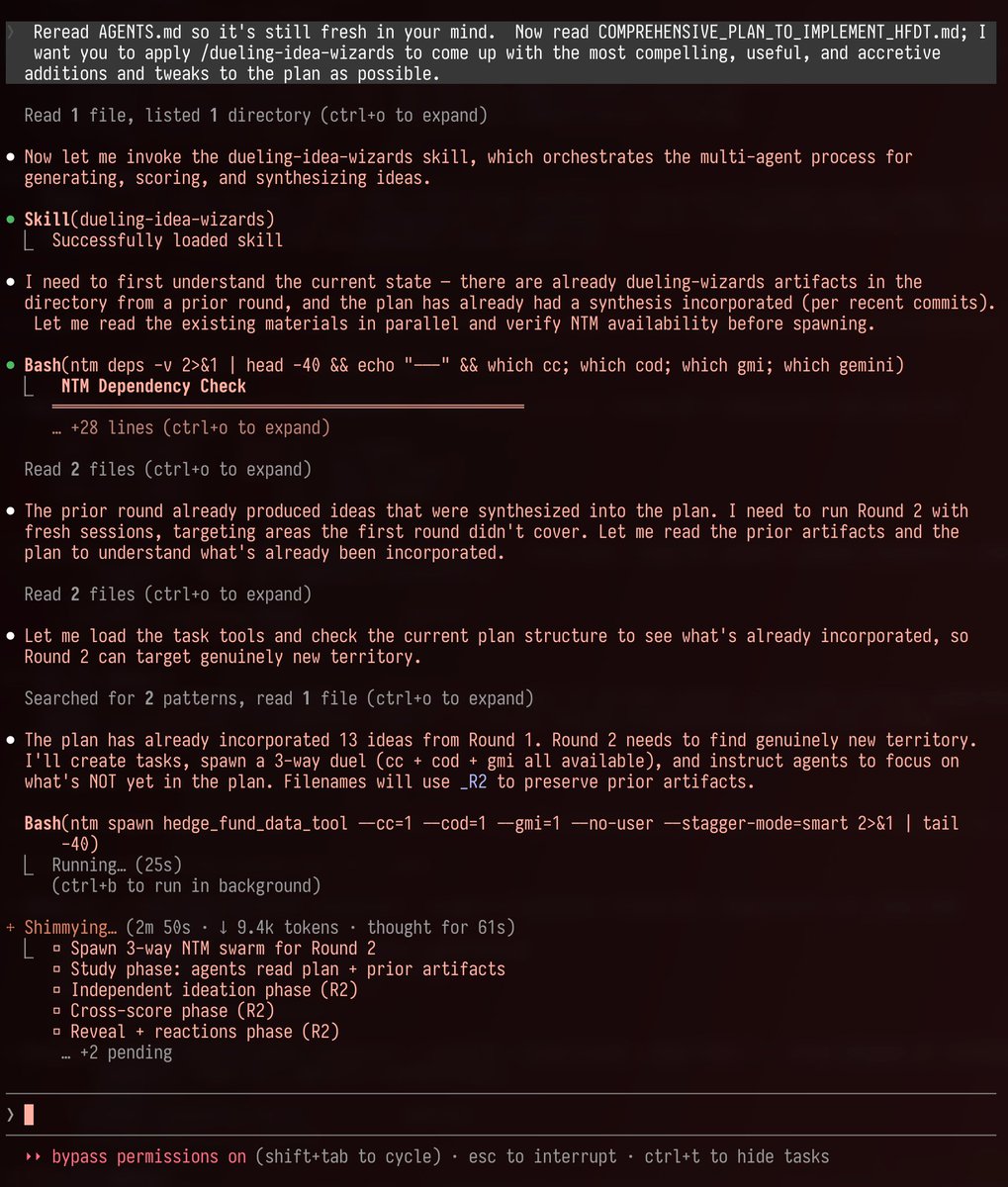

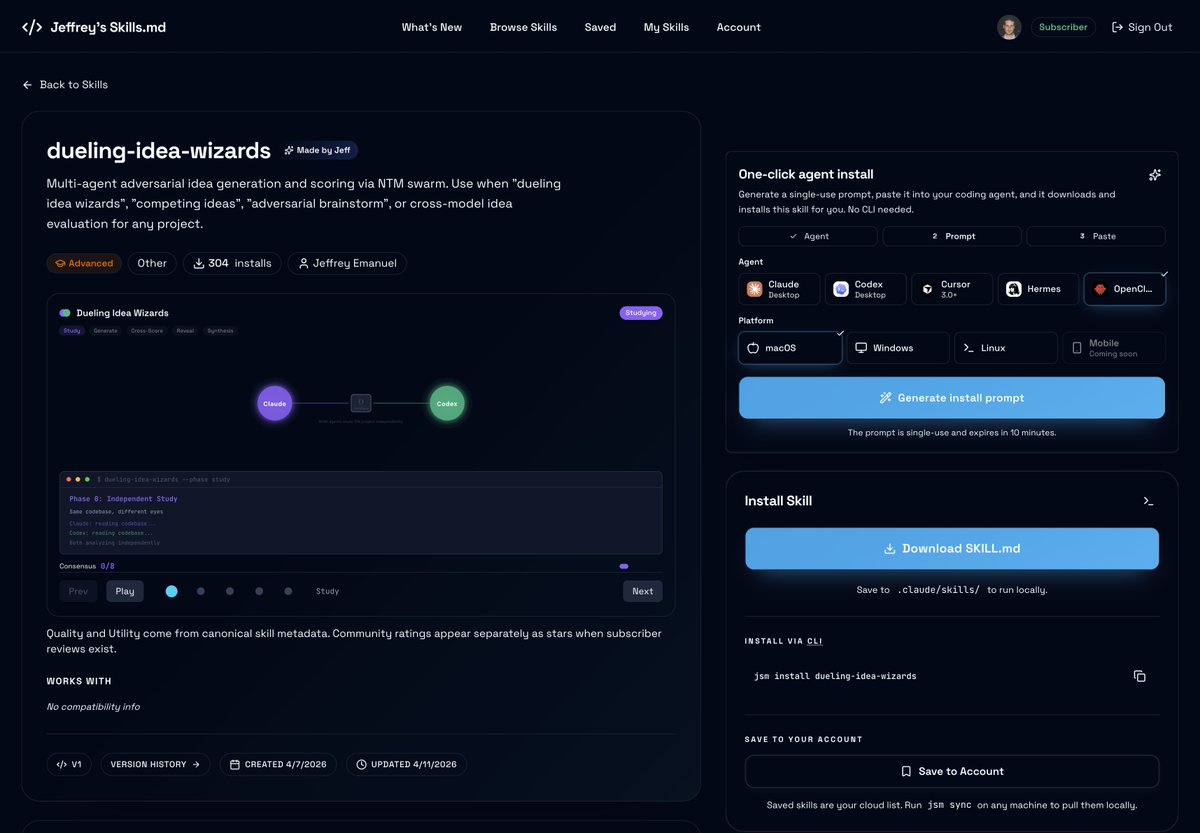

"My Favorite Prompts," by Jeffrey Emanuel Prompt 1: The Idea Wizard "Come up with your very best ideas for improving this project to make it more robust, reliable, performant, intuitive, user-friendly, ergonomic, useful, compelling, etc. while still being obviously accretive and pragmatic. Come up with 30 ideas and then really think through each idea carefully, how it would work, how users are likely to perceive it, how we would implement it, etc; then winnow that list down to your VERY best 5 ideas. Explain each of the 5 ideas in order from best to worst and give your full, detailed rationale and justification for how and why it would make the project obviously better and why you're confident of that assessment. Use ultrathink."

Today, we’re open-sourcing the draft specification for DESIGN.md, so it can be used across any tool or platform. We’re also adding new capabilities. DESIGN.md lets you easily export and import your design rules from project to project. Instead of guessing intent, agents know exactly what a color is for and can even validate their choices against WCAG accessibility rules. Watch David East break down this shared visual language in action👇. New capabilities and links in 🧵
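For a sense of what "validating against WCAG" can mean in practice, here is a minimal sketch of the WCAG 2.x contrast-ratio check for a text color on a background color. The post does not say how DESIGN.md or its tooling performs validation, so this is only an illustration of the published WCAG formula; the function names (`relative_luminance`, `contrast_ratio`, `passes_aa`) are hypothetical and not part of any DESIGN.md API.

```python
# Sketch of the WCAG 2.x contrast check. Function names are hypothetical;
# this is not taken from the DESIGN.md specification.

def _linearize(channel_8bit: int) -> float:
    """Convert one 8-bit sRGB channel to linear light, per WCAG 2.x."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#RRGGBB' color (0.0 = black, 1.0 = white)."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1.0 up to 21.0."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """WCAG AA threshold: 4.5:1 for normal text, 3:1 for large text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold


if __name__ == "__main__":
    print(round(contrast_ratio("#000000", "#FFFFFF"), 2))
    print(passes_aa("#767676", "#FFFFFF"))
    print(passes_aa("#777777", "#FFFFFF"))
```

An agent that knows a color's role (say, body text on a surface color) could run a check like this before committing to a palette; how a real DESIGN.md toolchain wires that up is not described in the post.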