Ruby Scanlon

@rubyscanlon

US-China Tech @CNASdc | Formerly Antitrust @TheJusticeDept

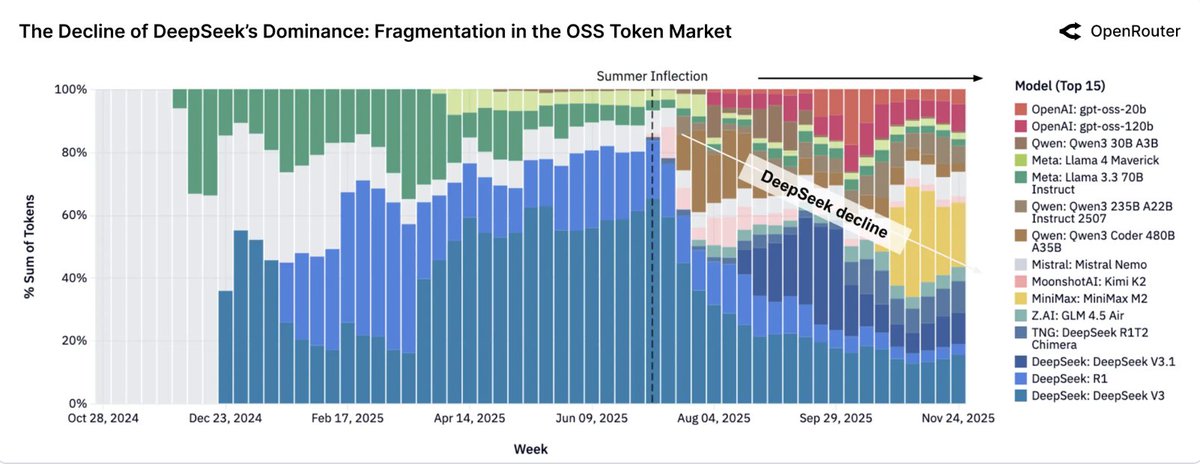

Zhipu's CEO Zhang Peng did a fascinating 2.5-hour podcast interview in Chinese before going IPO. He is hyperfocused on AGI, which he brings up repeatedly as the company's raison d’être. Here are my rough notes in case anyone finds them useful: youtu.be/toy8RLeFZ08
• Chinese AI researchers have been dreaming of AGI for a long time, but it was previously so distant a goal.
• Back then, people were focused on cognitive AI, or 认知人工智能.
• Tsinghua has a special focus on industry collaboration, which he said helped him understand AI needs from a practical standpoint.
• Tsinghua has an emphasis on P2P: paper to product.
• As early as 2016, Zhang Peng was thinking about launching his own startup. But at Tsinghua at the time, there wasn’t an approved process for doing this, even though some people did it. Later there was an official process created for China.
• Launching a startup out of Tsinghua was quite a process: it involved the university president and figuring out stakes and shareholding structure, and it took 1.5 years. They were basically the first.
• Early AI systems “don’t know what they don’t know.” This kind of self-awareness is still not there.
• He talks about a field called intelligence studies (情报学) that came out of libraries: how to generate new ideas and knowledge from consuming large sets of texts.
• Some of the earliest work his lab did for clients was to forecast which technologies would be hot in 3-5 years.
• While still a lab at Tsinghua, their AMiner AI system performed quite well and got some international recognition.
• When GPT-3 came out, he was talking to another researcher who had high praise and said it was a milestone for the field. But he said that it still hadn’t solved the fundamental problem of “not knowing what it doesn’t know”. It would just give you an answer regardless.
• They quickly set about trying to understand the new technology behind GPT-3 and what was different from BERT, which they had known well.
• Within a year, they had done the research for GLM.
• GLM tried to combine the best of both BERT and GPT.
• He really likes Ilya, thinks his thought process is really good.
• Asked if he ever thought of making Zhipu like OpenAI, he said that in the early days Zhipu was actually quite similar in structure.
• They had to decide whether they wanted to pursue a similar approach to OpenAI, and weighed whether the massive investment required, estimated at the level of tens of millions of RMB or more, was worth it.
• They decided to do it themselves rather than wait to see if someone else would.
• They had to explain to investors for their series B fundraising round that they were building something like GPT-3, going for massive training, and that they would focus on open source.
• Why open source? They wanted recognition, including globally, like OpenAI had gotten.
• Also, US-China relations were not so complicated back then.
• A major Stanford report came out comparing AI models, and GLM was the only one from China near the top.
• Other Chinese labs working on this at the time included Baidu, Alibaba, Zhiyuan Institute.
• Did investors understand? Absolutely not. They asked how Zhipu was going to make money.
• Then ChatGPT came out and suddenly investors understood. Then investors were looking for them, asking whether they could build something like ChatGPT.
• From early on they wanted to create a small version of the model that researchers could use, like a 6B model that a single GPU can run.
• ChatGPT made him very excited because it validated his approach: he had made the right bet.
• Until then, everyone in China (investors, users) had such a short-term view of AI: come back to us when you have something useful.
• 2023 was the battle of a hundred models. A lot of Chinese startups were founded to chase this new trend.
• He was excited but also worried the whole market would swing from low to high and then crash and not recover.
• There was over-optimism. Despite the excitement, there were so many issues that were not fixed yet. A chatbot alone that can talk back is not so impressive. Would you trust its advice on what medicine to take? Still a long road ahead.
• A lot of the work on vertical AI went away. You don’t hear so much about that anymore. He thinks general capabilities are important.
• A lot of people didn’t understand. They said medicine or other specialized areas can make money, but not a general foundation model.
• But then he started MaaS (model as a service).
• Why not do consumer AI like ChatGPT? Because in the US people are willing to pay for a subscription, but that's not true in China. You’ll have to keep giving out discounts and freebies to keep them.
• Going after the enterprise market is not as sexy as the consumer market, but it’s more stable, not just a race to the bottom on price.
• Zhipu understands the technology. They can do the same job, better, for a lower price.
• Their headcount now is 800. It has basically doubled every year.
• He says they’re absolutely determined to pursue AGI. But the path is long, with many challenges.
• He talks about the challenges of training for self-driving cars. Too many corner cases. And the model can’t try, learn from mistakes, and self-correct like a human.
• So now it’s not just pre-training but also mid- and post-training.
• There are levels of AI: L1 to L5. L4 has theory of mind and knows what it doesn’t know. L5 is human-like consciousness. Right now we’re at L3. L2 was about supervised fine-tuning and test-time scaling. Now L3 is RL. In each phase, the scaling laws change.
• The scaling laws are not so set. But when you have a pattern like that in science, it’s useful because you can then ask what’s driving it and whether we can harness that.
• On compute scaling, there’s no way to recoup the cost.
• OpenAI is going for more compute. But Zhipu is going more for optimization.
• They’re using 1/4 of what OpenAI used to train GPT-3.
• This is a Chinese strength: drive down cost.
• How is GLM-4.7 so good? Engineering, architecture. Not by doubling the parameters.
• Speaking of scaling laws, he talks about the need to understand the real essence of the relationship between intelligence and compute.
• Transformers may not be the ultimate answer. Zhipu is already trying to figure out what the next architecture might be.
• He uses the phrase 柳暗花明, which is kind of like "out of confusion comes clarity".
• But he believes that, still within the transformer paradigm, there’s a lot more space to explore.
• DeepSeek in 2025 was huge for them on every front: engineering, research, market. They discussed it intensively.
• Zhipu felt like they were hitting a wall on their GLM-4 plus model. But DeepSeek helped show that you could go a level deeper on the engineering, and helped validate some things they had been testing about leaping over supervised fine-tuning and strengthening the base.
• After DeepSeek, Zhipu boosted RL.
• But DeepSeek didn’t change their approach to open source, which they’d already been doing.
• US labs were moving away from open source. Closed source has greater commercialization advantages.
• DeepSeek’s comprehensive open source approach got people in China confused: they associated open source with free and asked Zhipu, why should we pay you when we can just use DeepSeek’s totally open source model?
• He explained that you can do this. But many people tried and came back because DeepSeek doesn’t offer enterprise services.
• He thinks DeepSeek is doing full open source because they don’t care about the enterprise market or even commercialization, and are just focused on the technology.
• DeepSeek also benefited from other open source innovations. Don’t underestimate or overestimate anyone.
• China open source will spur more adoption. He quotes Jensen Huang as saying the technology has no country borders but the applications and people do, and the benefits too.
• Cannot have the technology concentrated in just a few companies or individuals. Open source is a way to open this up to people around the world and give more options.
• Without China open source, the rest of the world just has US closed source options. So China open source is helping other people benefit from AI.
• Agents: doing more research here; need data, need to figure out how to break a problem down into smaller parts.
• Next paradigm after scaling will be self-learning, with no clear line between training and inference.
• Need to figure out how to close the loop on learning and training. Not sure if this will be a tech breakthrough or an engineering breakthrough.
• Another major area to research is how to really combine all the data across modalities and integrate it into a single model. Right now the needs for coding agents planning vs other tasks might be quite distinct. Also VLA, which they’re working on.
• He says he can see the first light (曙光) of AGI, especially if you get online learning (在线学习).
• When will you let an AI go out into the world and explore the world and learn? Maybe starts in 2027? Then maybe 5-8 years later, after more adjustment and improvement, dealing with safety, etc.
• AGI is not a short-term thing. It’s running a marathon.
• IPO is a very natural route. Zhipu has been planning on an IPO for years.
• Looking at OpenAI’s plans for IPO, he says they follow a logic of 3 “highs”: high risk, high investment, high return.
• A big misunderstanding people have of Zhipu is that it’s a for-government company targeting just government contracts. But that’s not true: lots of private companies are customers, and government is only 20% of Zhipu’s business.
• Xiaojun, the interviewer, said she was talking to an AI 1.0 person who said the reason Chinese AI companies are going IPO now is that they’re fleeing from a potential bubble in 2026.
• In China, there are a lot of comparisons to the internet bubble these days. But he argues that even after the internet bubble burst, there was still a lot of useful stuff left from that. (An argument often made by AI leaders in the US.)
• US investment in AI is orders of magnitude greater than in China. So China AI investment is not very big. He says the US might be a bubble, but in China there’s actually still not enough investment.
• Zhipu is definitely thinking about more consumer-facing applications, but something useful, not just entertainment.
• The consumer AI battle is driven by the logic of the internet platforms, which need to get traffic.
• Xiaojun asks if some people think Zhipu is kind of boring given its focus on tech rather than popularity.
• But he says it’s like how they say the Tsinghua engineers are boring. But they’re very smart and capable.
• Asked if Kimi is cooler: they’re also Tsinghua.
• He talks about the way Tsinghua teaches you to learn, so you can face new challenges never seen before.
• Different stages of a company’s growth have their own challenges.
• At 100 people, he basically knew every employee by name. But get larger and you start to see people you don’t know. You can still control, but through a different level.
• They have people from many backgrounds. Very open culture. Not just Tsinghua. Also Shanghai Jiaotong, Peking University, ByteDance, Alibaba.
• Really focused on trying to fully integrate all modalities into a single model.
• Also focused on internationalization.
• Xiaojun asks who in China is really going for AGI. But he says he doesn’t know. Many definitions of AGI.
• Asked when Zhipu will break even, he says they have a forecast in their IPO prospectus. But he feels like it’s going in a good direction. Revenue growing quickly, optimizing costs.
• Asked if he would choose to have an AGI company or a profitable one, he says definitely the AGI one.
• If in the next 5 years they just make money but don’t make technical progress, then he’ll be unsatisfied.
• They opened a branch in Shenzhen because they have a large customer there.
• How do you want Zhipu to be remembered by history? As the company that pioneered AGI. He uses the Chinese expression 吃螃蟹, "first to eat crab".

China’s chatbots are censored by the state. In our @PNASNexus paper with @jenjpan, we find substantially higher levels of political censorship in large language models (LLMs) originating from China than those developed outside China. doi.org/10.1093/pnasne…🧵

Chinese smart city tech (IoT, cameras, analytics for traffic, public health) operates in >100 countries. China's edge: bundled packages + leveraging existing telecom relationships. US firms have no comprehensive alternative, even as the market hits ~$4B by 2030.

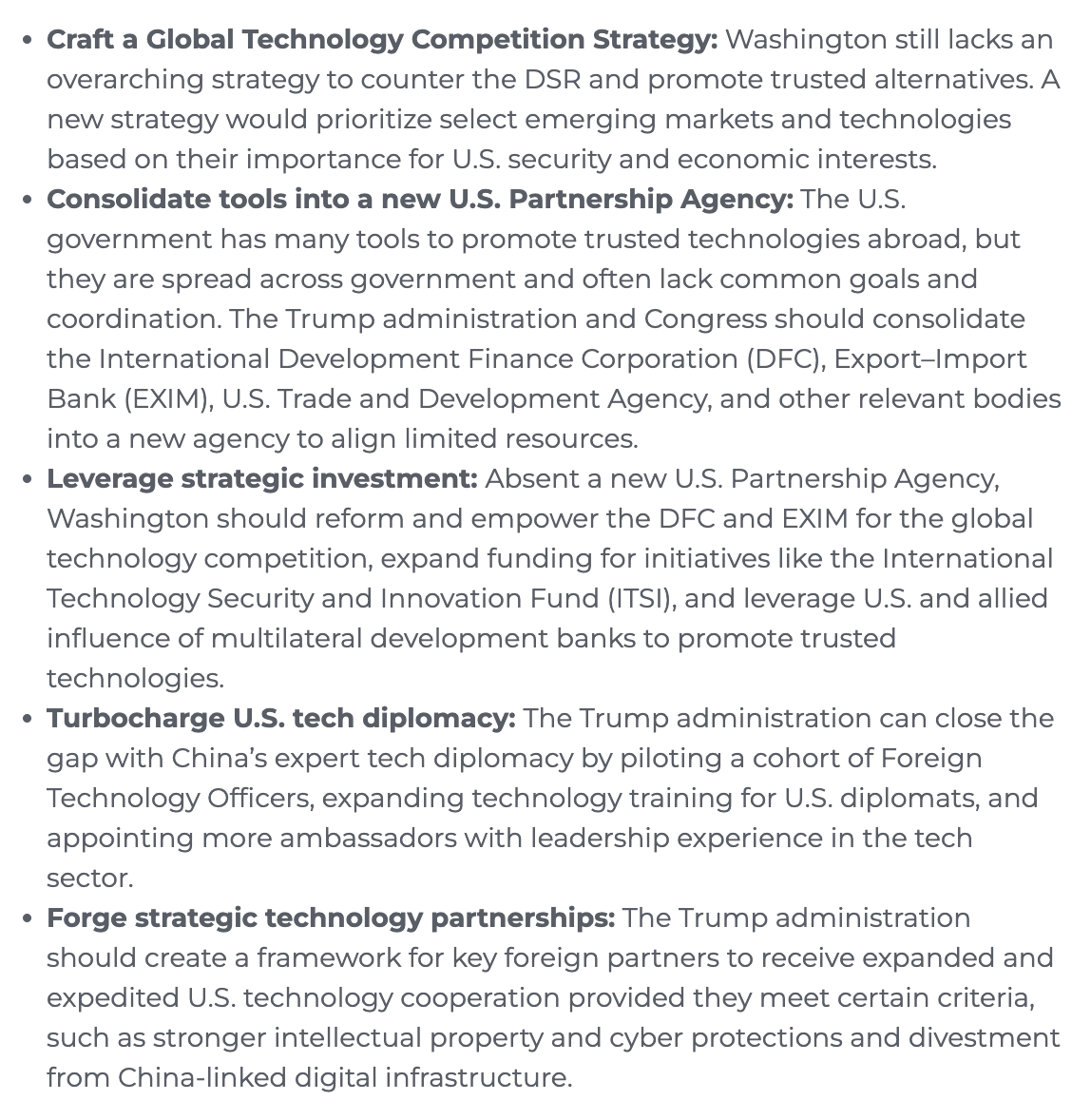

After 18 months of research, I'm excited to release my and @vivekchil's new report, Countering the Digital Silk Road. It examines where the U.S. and China each hold advantages in key tech exports, how Chinese firms compete abroad, and how U.S. tech promotion efforts compare 🧵 1/