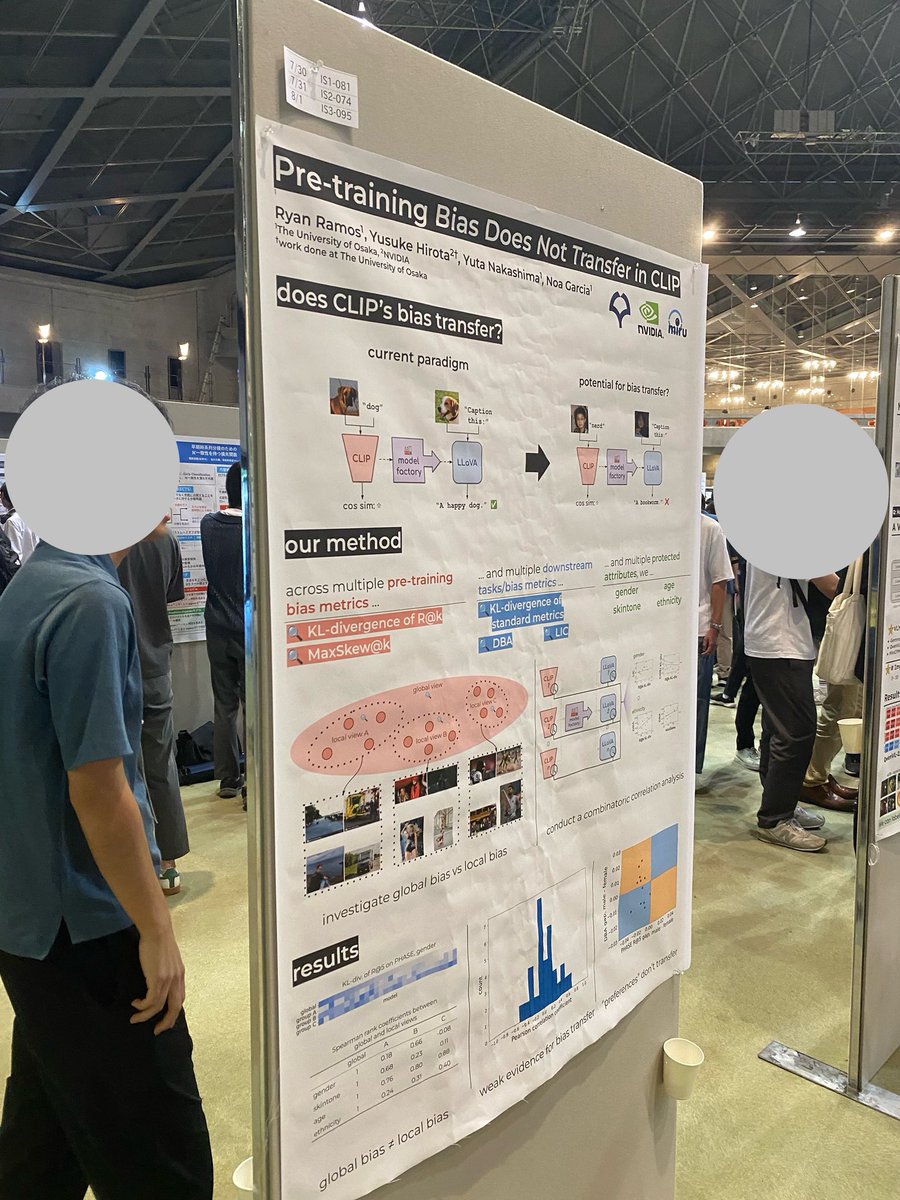

@ICCVConference @dahlian0 [Poster session 4 #207 *Highlight*] @ryan_c_ramos+, Processing and acquisition traces in visual encoders: What does CLIP know about your camera? (2/2)

Ryan Ramos

@ryan_c_ramos

PhD student @ IsLab, Osaka University

Have you ever asked yourself how much your favorite vision model knows about image capture parameters (e.g., the amount of JPEG compression or the camera model)? And could these parameters influence its semantic recognition abilities?

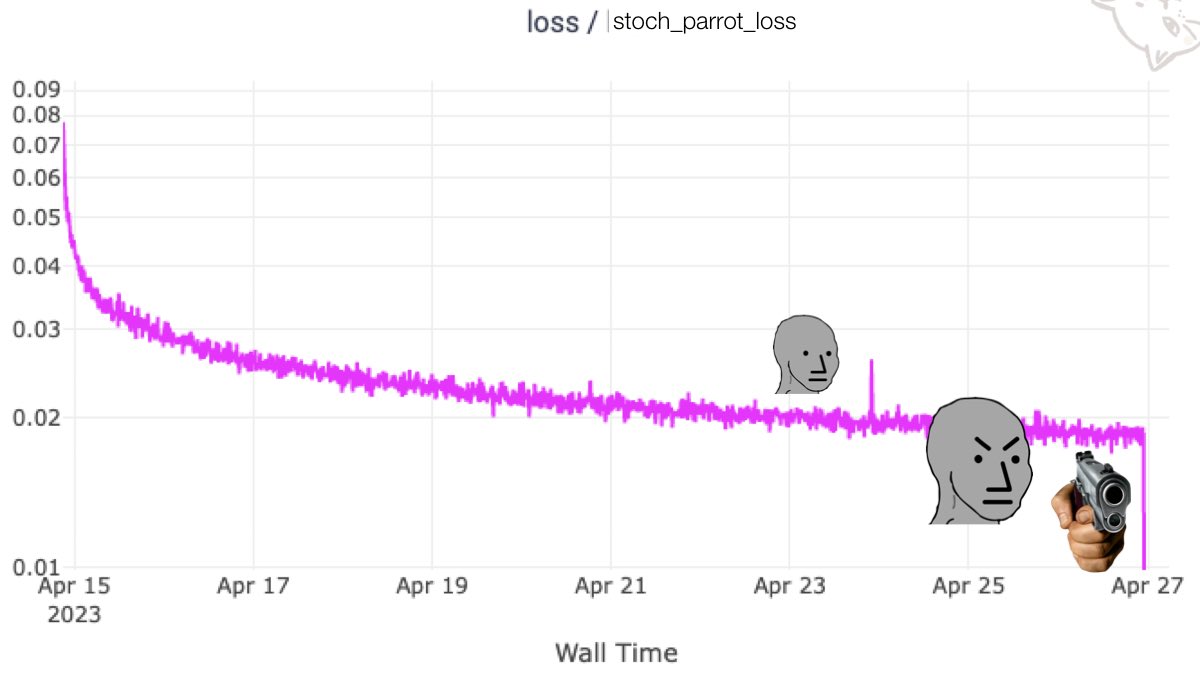

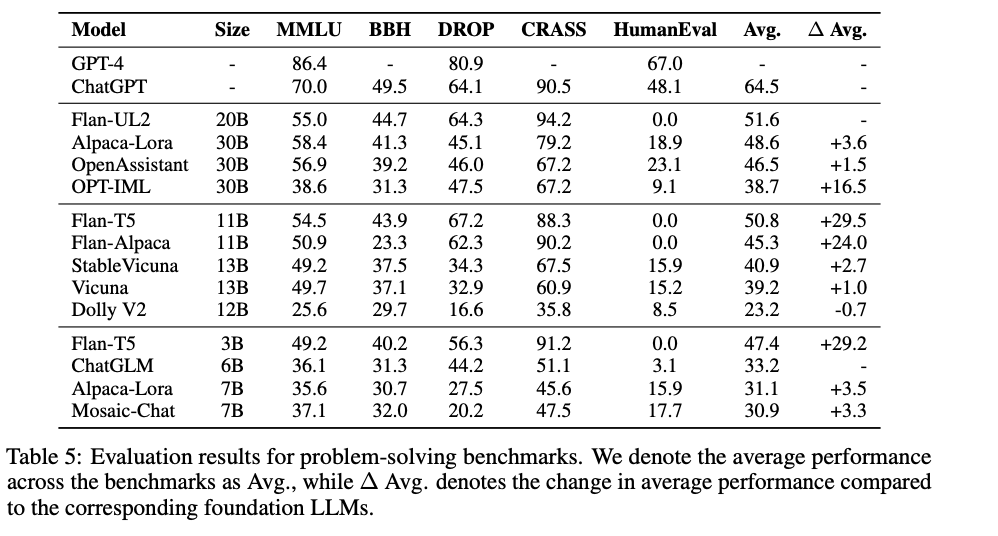

QLoRA: 4-bit finetuning of LLMs is here! With it comes Guanaco, a chatbot on a single GPU, achieving 99% ChatGPT performance on the Vicuna benchmark:

Paper: arxiv.org/abs/2305.14314
Code+Demo: github.com/artidoro/qlora
Samples: colab.research.google.com/drive/1kK6xasH…
Colab: colab.research.google.com/drive/17XEqL1J…