Haoran Xu✈️ICLR26

136 posts

@ryanxhr

PhD student @UTAustin | @AmazonScience AI PhD Fellow | Towards super-human AGI using RL🚀

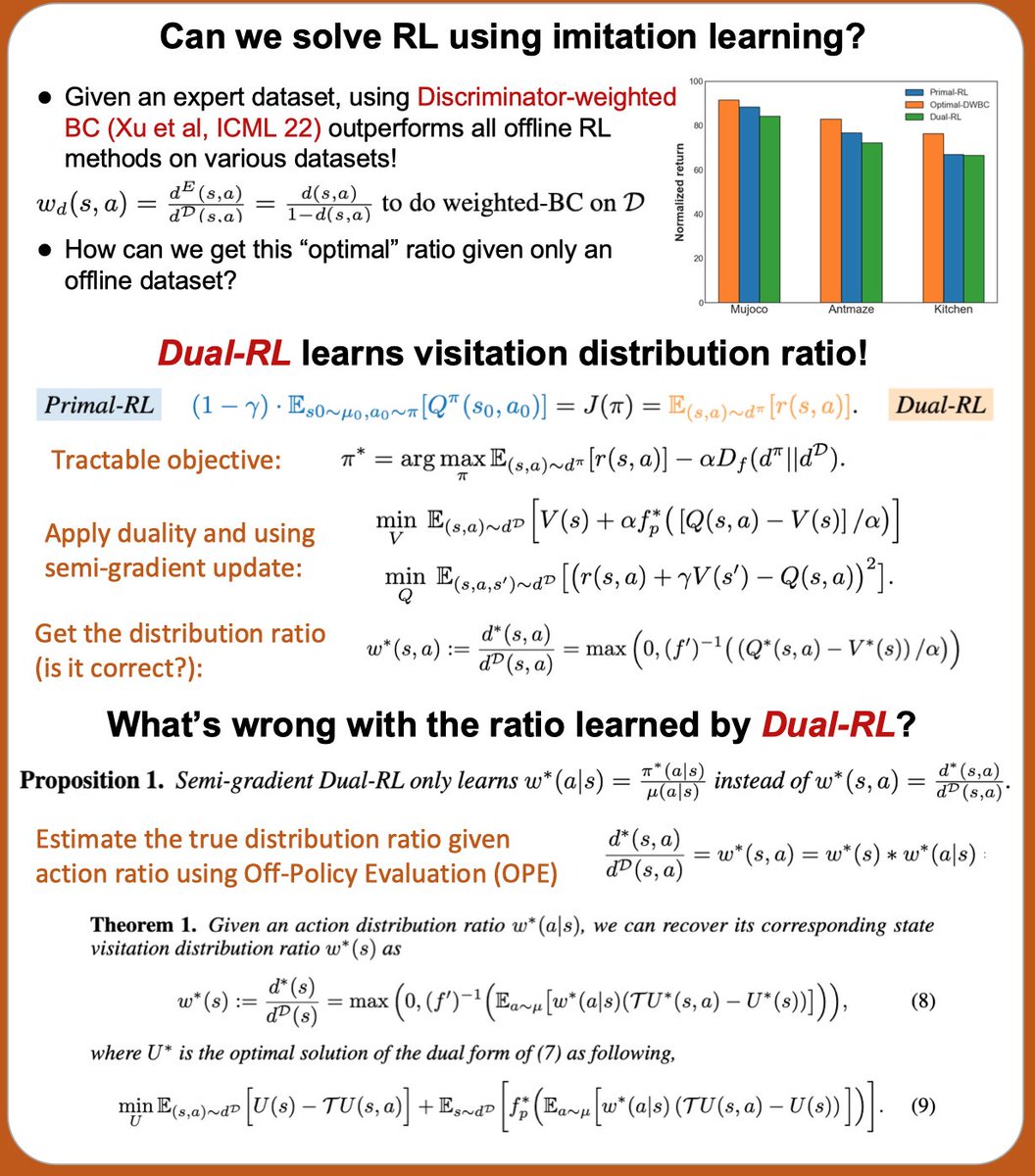

Both offline RL and LLM RL fine-tuning can be formulated as behavior-regularized RL problems. We propose Value Gradient Flow (VGF), a new scalable and sample-efficient paradigm that treats behavior-regularized RL as an optimal transport problem. arxiv.org/abs/2604.14265 🧵[1/7]
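For context on the term "behavior-regularized RL": a common form maximizes E_{a~π}[Q(s,a)] - α·KL(π(·|s) || π_β(·|s)), where π_β is the behavior policy (offline RL) or the reference model (LLM fine-tuning). Its well-known closed-form optimum is π*(a|s) ∝ π_β(a|s)·exp(Q(s,a)/α). The sketch below assumes a single state, a small discrete action set, and a known Q. It illustrates only this generic KL-regularized objective, not VGF, which the thread describes as an optimal transport formulation. All function names are hypothetical.

```python
import math
from typing import List

def kl_regularized_optimum(q: List[float], pi_beta: List[float], alpha: float) -> List[float]:
    """Closed-form maximizer of E_pi[Q] - alpha * KL(pi || pi_beta) over discrete actions.

    Hypothetical helper: assumes q and pi_beta are aligned per action and pi_beta > 0.
    """
    # Subtract max(q) before exponentiating for numerical stability;
    # the constant factor cancels when we normalize.
    m = max(q)
    weights = [b * math.exp((qi - m) / alpha) for qi, b in zip(q, pi_beta)]
    z = sum(weights)
    return [w / z for w in weights]

def objective(pi: List[float], q: List[float], pi_beta: List[float], alpha: float) -> float:
    """Value of the regularized objective E_pi[Q] - alpha * KL(pi || pi_beta)."""
    value = sum(p * qi for p, qi in zip(pi, q))
    # Terms with p == 0 contribute 0 to the KL divergence.
    kl = sum(p * math.log(p / b) for p, b in zip(pi, pi_beta) if p > 0)
    return value - alpha * kl

q = [1.0, 2.0, 0.5]
pi_beta = [0.5, 0.3, 0.2]
pi_star = kl_regularized_optimum(q, pi_beta, alpha=0.5)
print(pi_star, objective(pi_star, q, pi_beta, 0.5))
```

The coefficient α sets the trade-off: as α shrinks, π* approaches the greedy argmax of Q; as α grows, π* stays close to π_β.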

Excited to announce @amazon's new AI PhD Fellowship Program supporting 100+ students across 9 universities like Carnegie Mellon, MIT & Stanford. Fellows will be paired with senior scientists working in related fields, plus receive financial support and AWS credits for research. Learn more: amazon.science/news/amazon-la…

introducing the belief state transformer: a new LLM training objective that learns (provably) rich representations for planning. the BST objective is satisfyingly simple: just predict a "previous" token alongside the next token. come by our ICLR poster this Thursday to chat!
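To make "predict a previous token alongside the next token" concrete, here is a toy sketch of how training targets could be enumerated. It assumes a pairing of a prefix x[:i] (read forward) with a later suffix x[j:] (read backward), where the model predicts the token right after the prefix, x[i], and the token right before the suffix, x[j-1]. This pairing is our reading of the belief state transformer setup, not its reference implementation. The names `BSTExample` and `make_examples` are hypothetical, and the actual encoders and loss are omitted.

```python
from typing import List, NamedTuple

class BSTExample(NamedTuple):
    prefix: List[str]   # x[:i], assumed to be read by a forward encoder
    suffix: List[str]   # x[j:], assumed to be read by a backward encoder
    next_token: str     # x[i]: the token right after the prefix
    prev_token: str     # x[j-1]: the token right before the suffix

def make_examples(tokens: List[str]) -> List[BSTExample]:
    """Enumerate every (prefix, suffix) split with i < j (hypothetical helper)."""
    examples = []
    n = len(tokens)
    for i in range(n):                 # prefix length i, so x[i] exists
        for j in range(i + 1, n + 1):  # suffix starts at j; empty when j == n
            examples.append(BSTExample(tokens[:i], tokens[j:], tokens[i], tokens[j - 1]))
    return examples

for ex in make_examples(list("abc")):
    print(ex)
```

A sequence of length n yields n(n+1)/2 such splits, so a practical trainer would likely sample pairs rather than enumerate all of them.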