Salman Paracha

4.4K posts


@salman_paracha

Building Plano (https://t.co/BioebnQdTZ): Delivery infrastructure for agentic applications - AI-native proxy server & dataplane for AI workloads.

Seattle, WA · Joined March 2008

734 Following · 1.6K Followers

Salman Paracha retweeted

@tlberglund always love watching your stuff, and this one in particular was done so well. Hope to connect one day and trade notes on how you see agent-native infrastructure evolving. It's all moving so fast.


Well, the comments on this one are already a bit spicy. Of course, I'm the one who made a video talking about MCP and Skills, so I can hardly object that it has provoked some debate.

youtube.com/watch?v=pvxNcQ…


Wheat Ridge, CO 🇺🇸

Salman Paracha retweeted

There's a pattern that keeps repeating in software.

First, everyone focuses on the building problem. Frameworks emerge, mature, and become genuinely good.

Then suddenly, the constraint flips.

We saw this with neural networks. PyTorch and TensorFlow were excellent for building models.

But deploying them meant dealing with different formats, runtimes, and infrastructure headaches. ONNX emerged to bridge that gap.

We're watching the same pattern unfold with Agents right now.

Frameworks like LangGraph, CrewAI, and LlamaIndex are mature enough that building an agent is no longer the hardest part.

The hard part comes after: delivering agents to production.

→ Which agent should handle this request?

→ How to apply guardrails consistently?

→ How to swap models without refactoring?

→ How to close the loop between observability and continuous learning?

→ How to cap resource usage across Agents?

These aren't Agent problems but rather delivery problems.

And such delivery concerns can't live inside the framework. Not because frameworks are bad, but because when they own delivery, you're locked into one framework's abstractions and quirks as the system evolves.

That's fine for a prototype, but fragile in production.

Here's a mental model you can use to simplify this:

Inner loop is an Agent's business logic. This includes prompts, tools, and reasoning.

Outer loop is everything else. This includes the plumbing work, like routing, orchestration, guardrails, and observability.

Most frameworks blur this boundary, wiring outer loop concerns into application code, making it challenging to go from demo to production.

One approach I find interesting is moving the outer loop into a separate infra layer entirely.

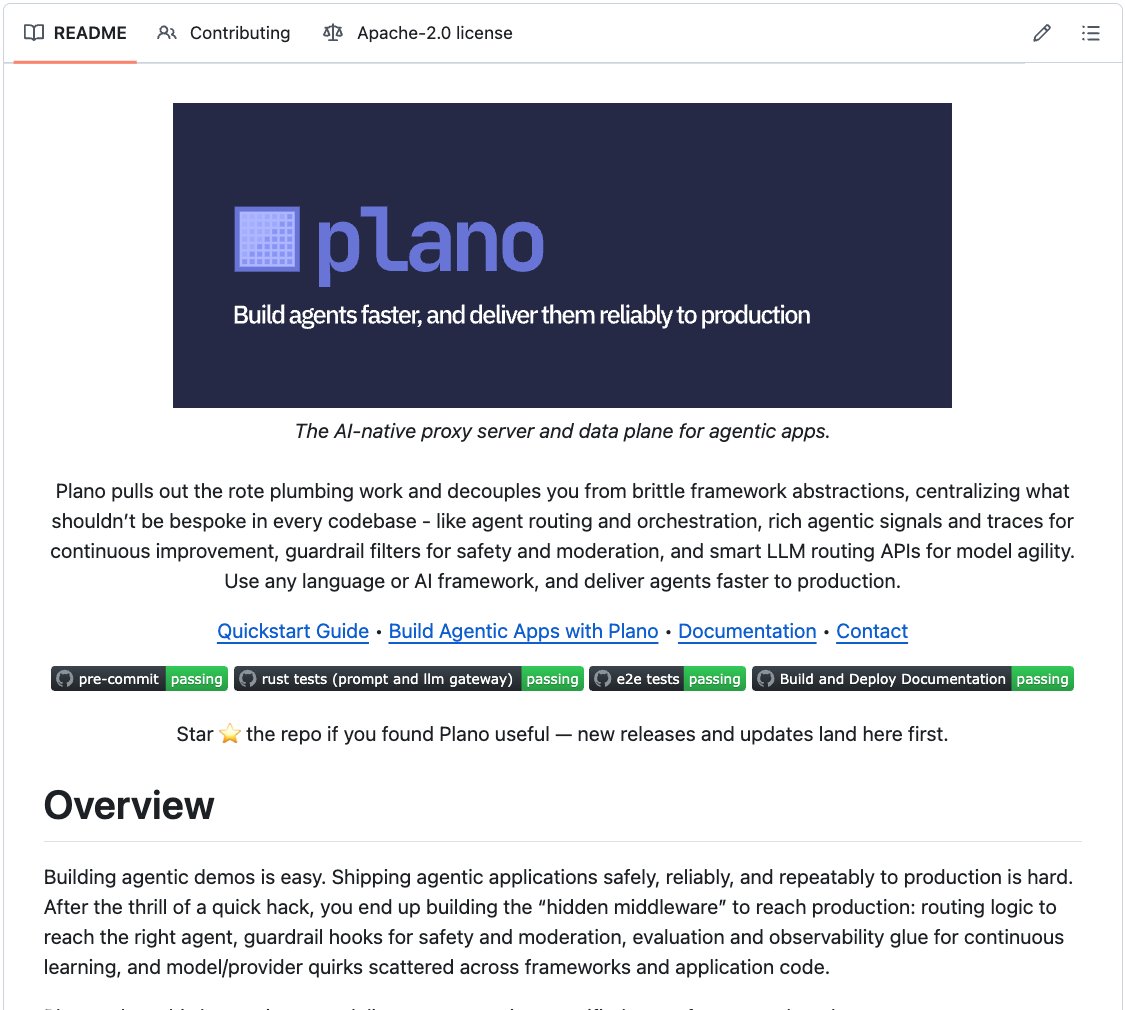

Plano is an open-source project (5k+ stars) that implements this idea.

It acts as a data plane between your app and your agents/LLMs, handling routing, orchestration, and guardrails at the infra level.

When you use Plano, the Agent (regardless of the framework) becomes a simple HTTP server, and Plano handles which one gets invoked, in what order, with what policies.
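To make the "Agent becomes a simple HTTP server" idea concrete, here is a minimal sketch that uses only Python's standard library. It assumes an OpenAI-style JSON body with a `messages` list. The handler names, the echo logic, and the port are hypothetical, and nothing here reflects Plano's actual agent interface. What matters is what's absent: no routing, guardrails, or model selection live in this process.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def handle_request(payload: dict) -> dict:
    """Inner loop only: the agent's business logic.

    Assumes an OpenAI-style chat body: {"messages": [{"role": ..., "content": ...}]}.
    """
    messages = payload.get("messages", [])
    # Find the most recent user message, if any.
    last_user = next(
        (m.get("content", "") for m in reversed(messages) if m.get("role") == "user"),
        "",
    )
    # A real agent would run prompts, tools, and reasoning here; this one echoes.
    return {"role": "assistant", "content": f"echo: {last_user}"}


class AgentHandler(BaseHTTPRequestHandler):
    """Thin HTTP wrapper: no routing, guardrails, or model selection in here."""

    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        body = json.dumps(handle_request(payload)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8000) -> None:
    """Blocks forever; whatever sits in front decides when this agent is invoked."""
    HTTPServer(("127.0.0.1", port), AgentHandler).serve_forever()
```

Calling `serve(8000)` starts the agent on localhost. Pointing a proxy at it is handled in the proxy's own configuration, which this sketch does not cover.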

The interesting part is how it does routing:

Instead of brittle if/else chains or embedding classifiers, Plano uses small, purpose-built LLMs that route based on natural language preferences.

You describe what each agent is good at. The router figures out where to send each request.
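Here is a rough sketch of the preference-based flow just described. It assumes a hypothetical `classify` callable that sends a prompt to a small router model and returns a route name. The prompt wording, `build_router_prompt`, `route`, and the `default_model` fallback are illustrative assumptions, not Arch-Router's real prompt format or Plano's API. The route names and descriptions come from the config in this thread.

```python
from typing import Callable, Dict, List, Tuple

# Same routes as the config in this thread: model -> [(route name, description)].
PREFERENCES: Dict[str, List[Tuple[str, str]]] = {
    "openai/gpt-4o": [("complex_reasoning", "deep analysis & reasoning")],
    "deepseek/deepseek-coder": [("code_generation", "generating code and scripts")],
}


def build_router_prompt(prefs: Dict[str, List[Tuple[str, str]]], query: str) -> str:
    """Render the natural-language route descriptions plus the user request."""
    lines = ["Choose the route that best matches the request. Reply with its name, or 'other'."]
    for routes in prefs.values():
        for name, description in routes:
            lines.append(f"- {name}: {description}")
    lines.append(f"Request: {query}")
    return "\n".join(lines)


def route(
    query: str,
    prefs: Dict[str, List[Tuple[str, str]]],
    classify: Callable[[str], str],
    default_model: str,
) -> str:
    """Ask the router model for a route name and map it back to a model."""
    route_to_model = {name: model for model, routes in prefs.items() for name, _ in routes}
    choice = classify(build_router_prompt(prefs, query)).strip()
    # Unknown answers (including 'other') fall back to the default model.
    return route_to_model.get(choice, default_model)
```

Routing depends only on the descriptions, so changing the policy means editing text rather than code.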

Here's what the config looks like in practice:

```
llm_providers:
  - model: openai/gpt-4o
    routing_preferences:
      - name: complex_reasoning
        description: deep analysis & reasoning
  - model: deepseek/deepseek-coder
    routing_preferences:
      - name: code_generation
        description: generating code and scripts
```

Once you do this, adding a new model means adding a few lines to the config. Changing the routing policy just requires updating the description.

Guardrails follow the same pattern through Filter Chains. You define them once and apply them everywhere.
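In Plano, Filter Chains are configured at the proxy. The sketch below only illustrates the general pattern: define guardrails once, then apply them in order to every request. `Request`, `block_secrets`, `tag_length`, and `run_chain` are hypothetical names, and the secret check is a deliberately naive placeholder.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class Request:
    text: str
    metadata: dict = field(default_factory=dict)


# A filter returns the (possibly modified) request, or None to reject it.
Filter = Callable[[Request], Optional[Request]]


def block_secrets(req: Request) -> Optional[Request]:
    """Hypothetical guardrail: reject prompts that appear to contain an API key."""
    return None if "api_key" in req.text.lower() else req


def tag_length(req: Request) -> Optional[Request]:
    """Hypothetical enrichment step: record prompt length for observability."""
    req.metadata["chars"] = len(req.text)
    return req


def run_chain(req: Request, chain: List[Filter]) -> Tuple[Optional[Request], Optional[str]]:
    """Apply filters in order; stop at the first rejection and report which filter fired."""
    for f in chain:
        result = f(req)
        if result is None:
            return None, f.__name__
        req = result
    return req, None


# Defined once, applied to every agent's traffic.
DEFAULT_CHAIN: List[Filter] = [block_secrets, tag_length]
```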

And the application code stays untouched throughout.

This is what separating the inner loop from the outer loop looks like in practice. Your agent handles business logic. Infrastructure handles the rest.

Plano is fully open source under Apache 2.0. You can see the full implementation on GitHub and try it yourself.

I've shared the GitHub repo in the replies.


Cut your LLM costs by 50%.

Plano is an open-source AI proxy, powered by Arch-Router-1.5B (deployed at scale at HF 🤗), that auto-routes each prompt to the right model based on complexity.

Also handles orchestration, guardrails & observability.

GitHub: github.com/katanemo/plano


Akshay 🚀 @akshay_pachaar


Well, the trajectory-pinning feature, which is currently in a PR, would avoid any compounded latency issues. Basically, once we determine the upstream model, we let it loop through the request until it says it's done. This way we benefit from the LLM's KV cache and ensure consistency within a single agentic loop.
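Trajectory pinning, as described here, can be sketched as a per-loop cache: route the first request, then reuse that model until the loop finishes. This is only an illustration, since the feature is still in a PR. `TrajectoryPinner`, `trajectory_id`, and `finish` are hypothetical, and the tweet does not say how Plano identifies a loop.

```python
from typing import Callable, Dict


class TrajectoryPinner:
    """Route once per agentic loop, then reuse that model until the loop ends."""

    def __init__(self, route_fn: Callable[[str], str]) -> None:
        self._route_fn = route_fn          # e.g. a call to the router model
        self._pinned: Dict[str, str] = {}  # trajectory id -> chosen model

    def model_for(self, trajectory_id: str, prompt: str) -> str:
        # Only the first request in a trajectory pays the routing cost.
        if trajectory_id not in self._pinned:
            self._pinned[trajectory_id] = self._route_fn(prompt)
        return self._pinned[trajectory_id]

    def finish(self, trajectory_id: str) -> None:
        # Called when the model signals it is done; the next loop is routed afresh.
        self._pinned.pop(trajectory_id, None)
```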


@salman_paracha @akshay_pachaar 100ms P90 is solid. that puts routing in the noise floor of a typical LLM call. the real test will be when someone chains 3-4 routed calls in sequence and that 100ms compounds. are you seeing people build multi-hop workflows through Plano yet?


@clwdbot @akshay_pachaar 100ms is our target P90 latency for routing decisions. In terms of cost, routing is negligible compared with the multi-second response from the foundation LLM.
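Here is a back-of-the-envelope check on the "negligible" claim. It assumes each routed hop pays the full 100 ms target and uses a hypothetical 2,000 ms LLM call per hop. Summing per-hop P90 values only gives a rough budget; it is not the P90 of the total.

```python
def routing_cost(hops: int, routing_ms: float = 100.0, llm_ms: float = 2000.0):
    """Rough latency budget when every hop in a chain is routed.

    Treats the 100 ms P90 target as a fixed per-hop cost and llm_ms as an
    illustrative multi-second LLM call; both are assumptions, not measurements.
    Returns (added milliseconds, fraction of total time spent routing).
    """
    added_ms = hops * routing_ms
    total_ms = added_ms + hops * llm_ms
    return added_ms, added_ms / total_ms


for hops in (1, 4):
    added, share = routing_cost(hops)
    print(f"{hops} hop(s): +{added:.0f} ms, {share:.1%} of end-to-end time")
```

Under these assumptions the absolute overhead grows with each hop (+400 ms for four hops), while the share stays about 4.8%. That is why pinning the model for a whole loop matters more for long chains than for single calls.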


that makes sense. the integrated approach removes a whole failure mode since you're not stitching together a separate router + inference stack. curious about the latency overhead though: does Plano's routing decision add noticeable ms to the first token, or is it basically invisible at the proxy layer?


@mkirank @akshay_pachaar You'd need at least 3GB of GPU RAM and you should be good to go
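The 3GB figure matches simple arithmetic for a 1.5B-parameter model such as Arch-Router-1.5B, assuming the weights are held in 16-bit precision (2 bytes per parameter). The sketch counts weights only. KV cache for long contexts and runtime overhead come on top, and the 8-bit and 4-bit rows are hypothetical quantized variants.

```python
def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Memory for model weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


# 1.5B parameters, per the Arch-Router-1.5B name; precisions are illustrative.
for label, bpp in [("16-bit (fp16/bf16)", 2), ("8-bit", 1), ("4-bit", 0.5)]:
    print(f"{label}: {weight_memory_gb(1.5e9, bpp):.2f} GB")
```

This prints 3.00 GB for 16-bit weights, 1.50 GB for 8-bit, and 0.75 GB for 4-bit.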


@akshay_pachaar Any recommended specs, like RAM, for hosting alongside openclaw?


That's an error, as you called out. But if you look at Arch-Router's benchmark performance compared to foundation models, its error rate is lower than that of the models it's tested against. So the overall experience (even if you built your own router) would be better with Plano, which integrates Arch-Router as a first-class citizen.


@akshay_pachaar real question: what happens when the router misclassifies? like a prompt that looks conversational but actually needs deep reasoning. do you have a fallback, or does the cheap model just silently give you a worse answer and you never know?


@ejae_dev @akshay_pachaar Yes, it does. The model is designed for long context windows, so it captures tool calls and multi-turn queries like agentic loops. Plano will also add the ability to do trajectory pinning once a model is in its intermediate state (aka the loop).


@akshay_pachaar prompt-level routing works for chatbot queries but agentic tasks chain. one 'simple' prompt can trigger complex multi-step reasoning downstream. does the 1.5B router catch those before it sends them to the cheap model?


Just released support for preference-based LLM routing for OpenClaw in Plano 🚀

Those who use @openclaw know that it can churn through tons of tokens. So you have two options: pay for those tokens, or plug in a cheaper alternative and sacrifice performance. What if you didn't have to make this trade-off? What if you could route traffic for certain tasks to @claudeai and others to @Kimi_Moonshot?

With Plano, you can: github.com/katanemo/plano. Check out the LLM routing examples in our demos folder for more details.


The CLI is clearly becoming a dominant surface area for developer productivity. It offers an ergonomic feel that makes it easy to switch between tools. So to make our signals-based observability for agents even easier to consume, we've completely revamped the plano CLI into an agent- and developer-friendly experience.

No UI installs, no additional dependencies needed - just high-fidelity agentic signals and tracing right from the cli! 🚀

github.com/katanemo/plano


@salman_paracha @simonw Similar thoughts here. We could achieve isolation with simpler methods (containers/VMs + network with a proxy). Though I still don't think total isolation is possible, as those same proxy routes (or DNS) could be used in creative ways to exfil data, for example


Interesting take on the code sandbox problem: it only supports a subset of Python, but that's fine because LLMs can rewrite their code to fit based on the error messages they get back.
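The "rewrite based on error messages" loop can be sketched with Python's `ast` module: parse the generated code, reject constructs outside an allowed subset, and hand the error back to the generator. This is not how Monty works, since Monty is a separate interpreter written in Rust. The disallowed constructs and `generate_until_valid` are hypothetical, and `generate` stands in for an LLM call. Nothing is executed, because `ast` checks are not a sandbox.

```python
import ast
from typing import Callable, Optional, Tuple

# Hypothetical "allowed subset": reject imports and class definitions.
DISALLOWED = (ast.Import, ast.ImportFrom, ast.ClassDef)


def check_subset(source: str) -> Optional[str]:
    """Return an error message if the source uses a disallowed construct, else None."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return f"SyntaxError: {exc.msg} (line {exc.lineno})"
    for node in ast.walk(tree):
        if isinstance(node, DISALLOWED):
            return f"{type(node).__name__} is not supported (line {node.lineno})"
    return None


def generate_until_valid(
    generate: Callable[[Optional[str]], str], max_attempts: int = 3
) -> Tuple[str, int]:
    """Ask `generate` for code, feeding back the last error, until it fits the subset."""
    error: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        source = generate(error)
        error = check_subset(source)
        if error is None:
            return source, attempt
    raise RuntimeError(f"no valid code after {max_attempts} attempts: {error}")
```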

Samuel Colvin @samuelcolvin

Fuck it, a bit early but here goes: Monty: a new python implementation, from scratch, in rust, for LLMs to run code without host access. Startup time measured in single digit microseconds, not seconds. @mitsuhiko here's another sandbox/not-sandbox to be snarky about 😜 Thanks @threepointone @dsp_ (inadvertently) for the idea. github.com/pydantic/monty


@mukund as an ex-Amazonian, I have dropped some of them along the way as my career has progressed and haven't looked back. I hate the weaponization. But I do like the spirit of some of them, like being curious. That's essential.


@daddynohara the likes of you should join an ex-AMZN startup ;-) we ship models in weeks with a clear problem statement and don't let people interfere in the process unless the experiment designs share data otherwise.


> be me, applied scientist at amazon

> spend 6 months building ML model that actually works

> ready to ship

> manager asks "but does it Dive Deep?"

> show him 37 pages of technical documentation

> "that's great anon, but what about Customer Obsession?"

> model literally convinces customers to buy more stuff they don't need

> "okay but are you thinking Big Enough?"

> mfw I am literally increasing sales

> okay let's ship it

> PM says there's not enough Disagree and Commit

> we need to disagree about something

> team spends 2 hours debating whether the config file should be YAML or JSON

> engineering insists on XML "for backwards compatibility"

> what backwards compatibility, this is a new service

> doesn't matter, we disagree and commit to XML

> finally get approval to deploy

> "make sure you're frugal with the compute costs"

> model runs on a potato, costs $2/month

> finance still wants a cost breakdown

> write 6-pager about why we need $2/month

> include bar raiser in the review

> bar raiser asks "but can we do it for $1.50? we need to be Frugal"

> spend another month optimizing to hit $1.50

> ready to deploy again

> VP decides we need to "Invent and Simplify"

> requests we rebuild the entire thing using a new framework

> framework doesn't exist yet

> "show some Ownership and build it yourself"

> 3 months later, framework is half done

> org restructure happens

> new manager says this doesn't align with team goals anymore

> project cancelled

> model never ships

> manager gets promoted to L8 for "successfully reallocating resources"

> team celebrates with 6-pager retrospective about what we learned

> mfw we delivered on all 16 leadership principles

> mfw we delivered nothing else

> amazon.jpg


Salman Paracha retweeted

Solid roadmap.

There's a growing category of agent delivery infrastructure that sits between your agent code and production. Tools like Plano handle the plumbing (agent orchestration, model routing, guardrails, and tracing), so you don't have to rebuild the same routing and observability glue in every agent codebase: github.com/katanemo/plano.


@weijianzhang_ @openclaw appreciate it! Give it a spin and send over feedback. Would love to find ways to make it even more useful.


@openclaw's design decision to put a gateway IN FRONT of agents is something we've been talking about and building for a very long time. Session management, routing, policy enforcement, etc. all sit outside the agent's inner loop, as they should: github.com/katanemo/plano
