Phil Howes

@saltyph

building https://t.co/aUjKNzIyMT

It’s Monday, and we could all use a little help thinking. Thankfully we have the new Kimi K2 Thinking to do it for us. Kimi K2 Thinking is now live in our Model APIs with the fastest TTFT (0.3 sec) and highest TPS (140) on @openrouter & @ArtificialAnlys. If you’re looking for an alternative to GPT-5, do a lot of coding, or are building agentic AI, you *need* to give this model a try. Congrats @Kimi_Moonshot, you all are astounding. Get access in the comments ➡️

This week, Baseten's model performance team unlocked the fastest TPS and TTFT for gpt-oss 120b on @nvidia hardware. When gpt-oss launched, we sprinted to offer it at 450 TPS... now we've exceeded 650 TPS and 0.11 sec TTFT... and we'll keep raising the bar. We are proud to offer the best E2E latency available with near-limitless scale, incredible performance, and the highest uptime (99.99%).

It's important to support newly released open-weight models on day 1. But it's not noteworthy. What's noteworthy is having the inference optimization muscle to immediately blow the competition out of the water on latency and throughput. As measured by OpenRouter:

We're excited to introduce the Baseten Performance Client, a new open-source Python library that delivers up to 12x higher throughput for high-volume embedding tasks! Stand up a new vector database, preprocess text, and run massive workloads in under 2 minutes (vs. 15+ minutes with AsyncOpenAI).

fast!

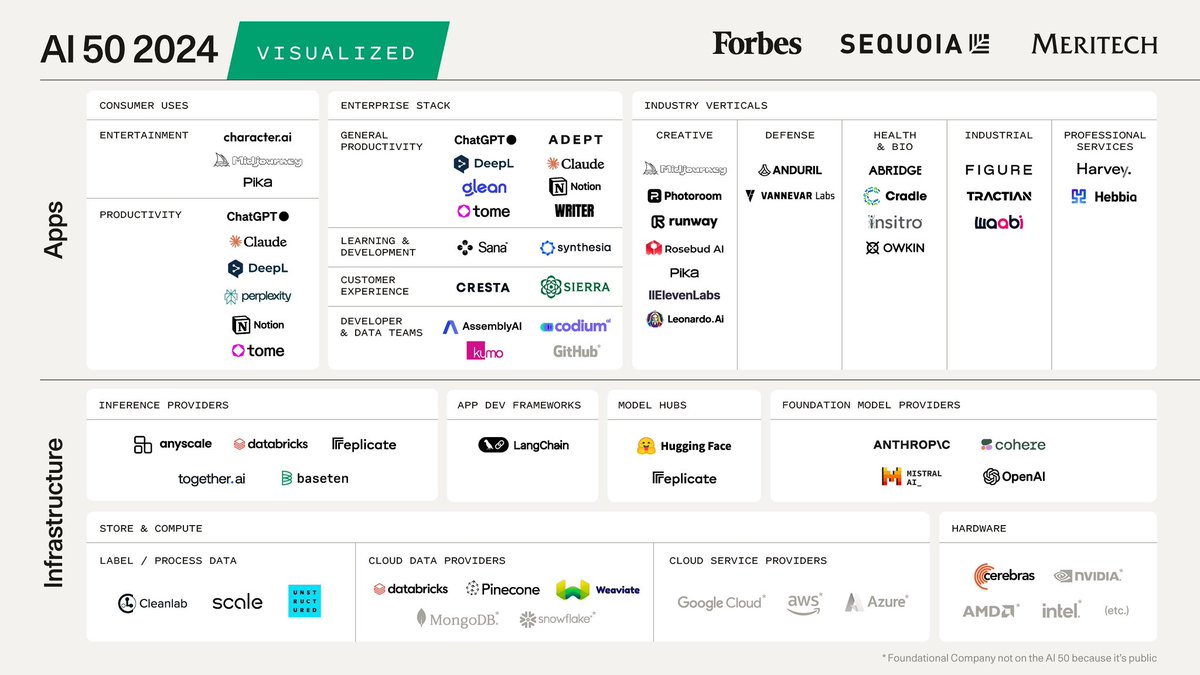

We're excited to announce that we've raised a $40M Series B to help power the next generation of AI-native products with performant, reliable and scalable inference infrastructure. baseten.co/blog/announcin…
