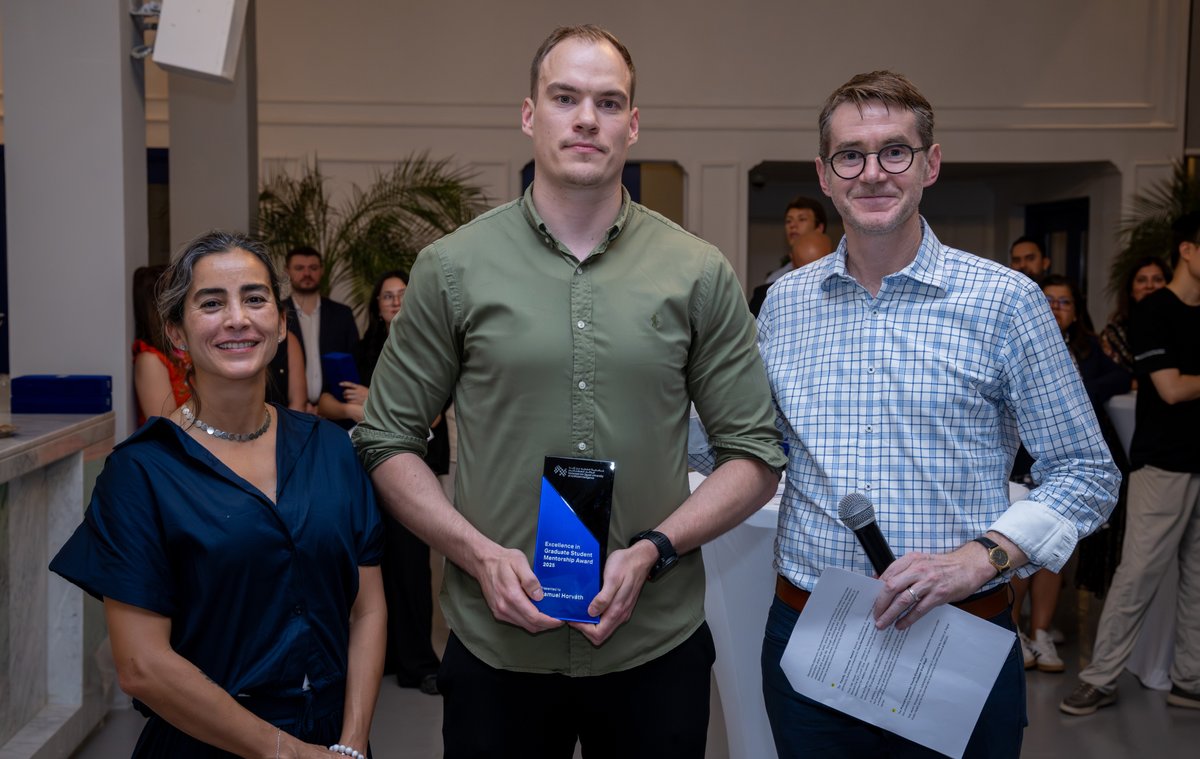

Samuel Horváth

@sam_hrvth

Assistant professor of Machine Learning @mbzuai, Ph.D. @KAUST_News, former intern @metaAI, @samsungresearch, and @amazon. Co-organizer of @flow_seminar.

Ready to turn your research into global impact? Join the KAUST Global Fellowship Program and help shape the future of science and innovation, advance your independent research career, and tackle global challenges at a world-class university.

We welcome submissions on efficient foundation models, on-device learning, and distributed learning to our @icmlconf workshop. Deadline: May 23rd. @stevelaskaridis, @sam_hrvth, @BerivanISIK, @KairouzPeter, @_cgiannoula_, @bilgeacun, @angeloskath, @TakacMartin, @niclane7

Happy to announce our @icmlconf 2025 workshop: "The Next Wave of On-Device Learning for Foundation Models" Details👇 1/3

📢: The 124th FLOW talk is on Wednesday (April 9) at **1 pm UTC.** Please register for our mailing list: bit.ly/3WVplLU. FLOW Calendar: bit.ly/3E9iIT4.